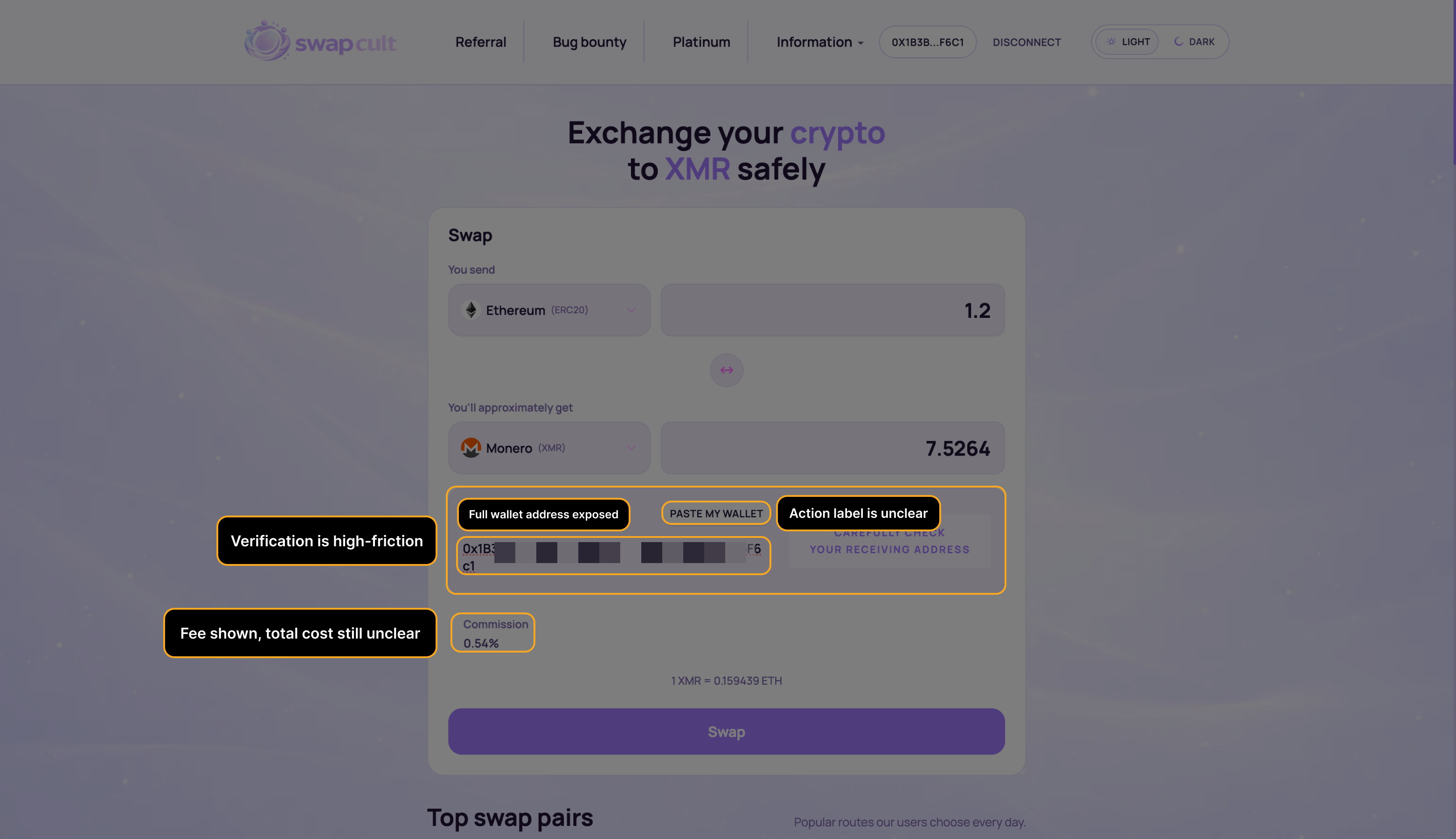

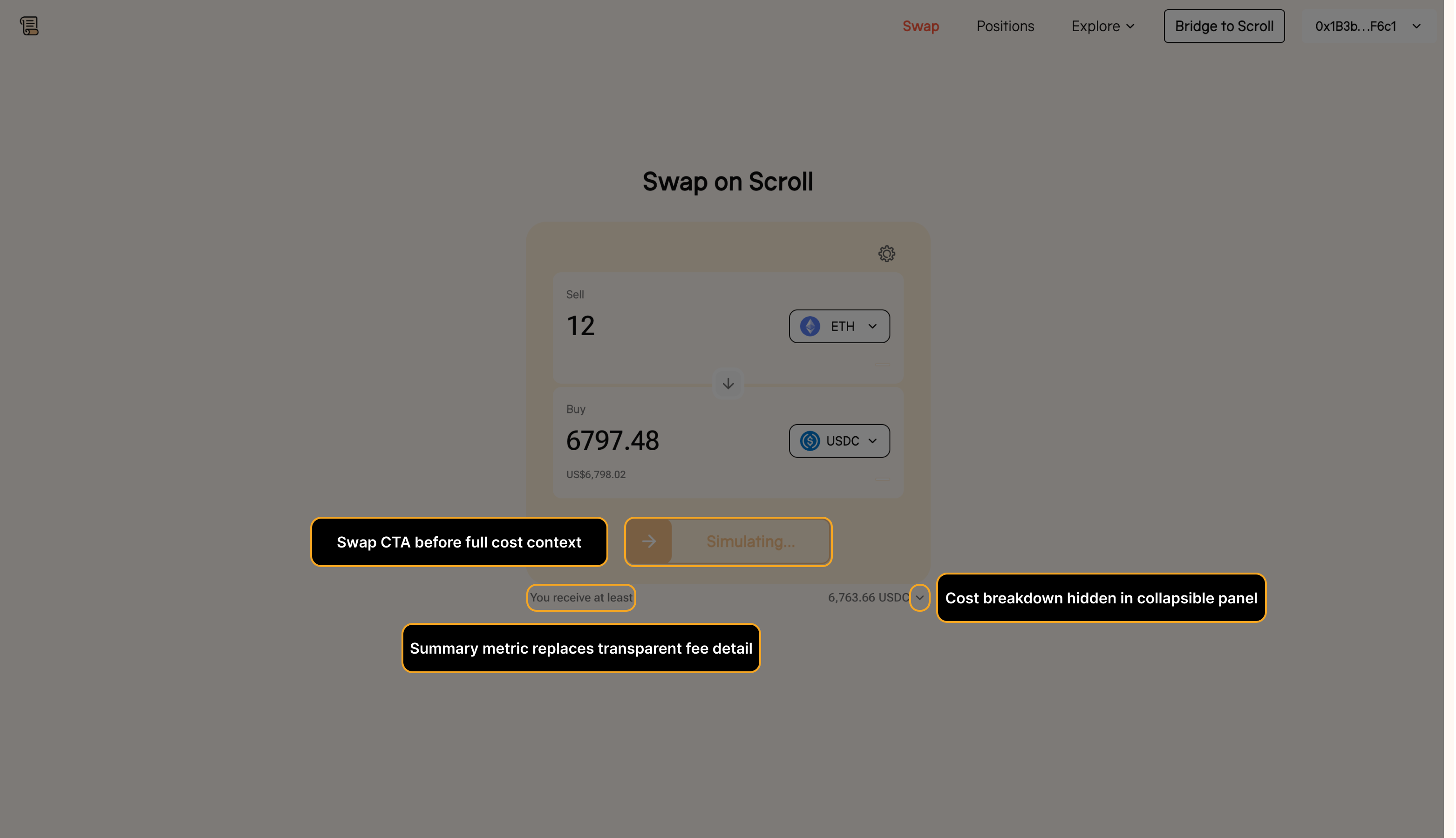

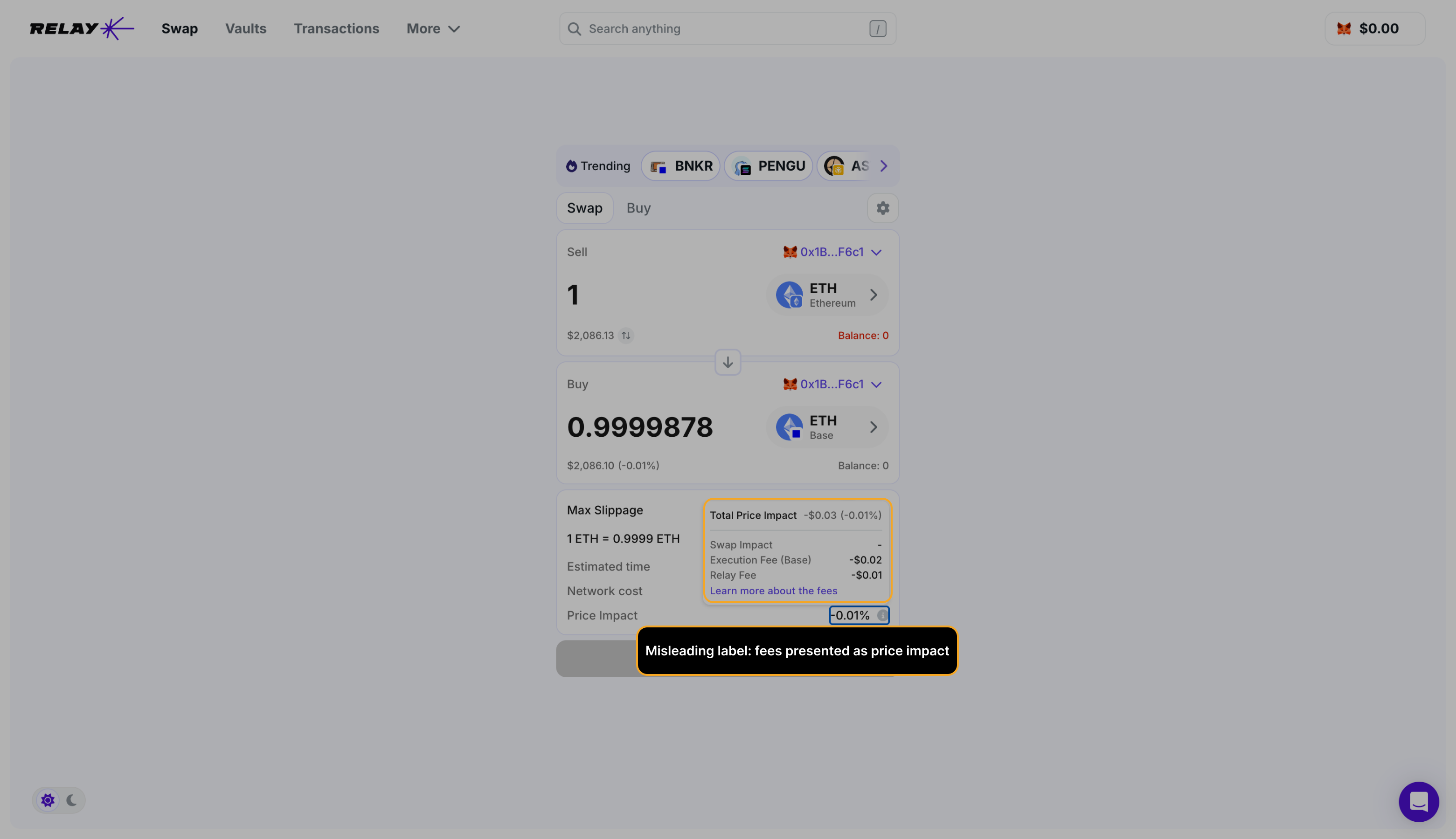

Swap confirmation

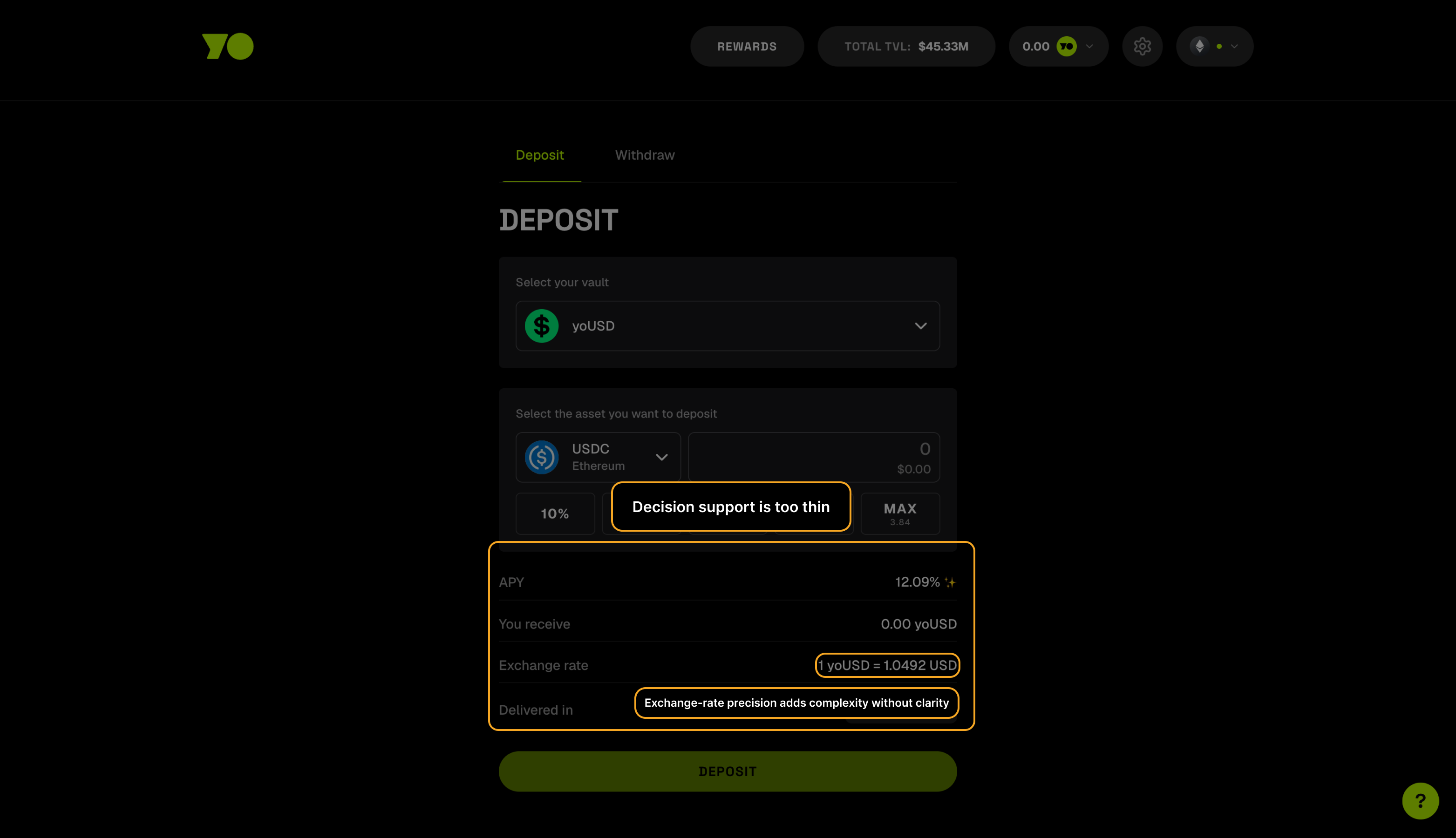

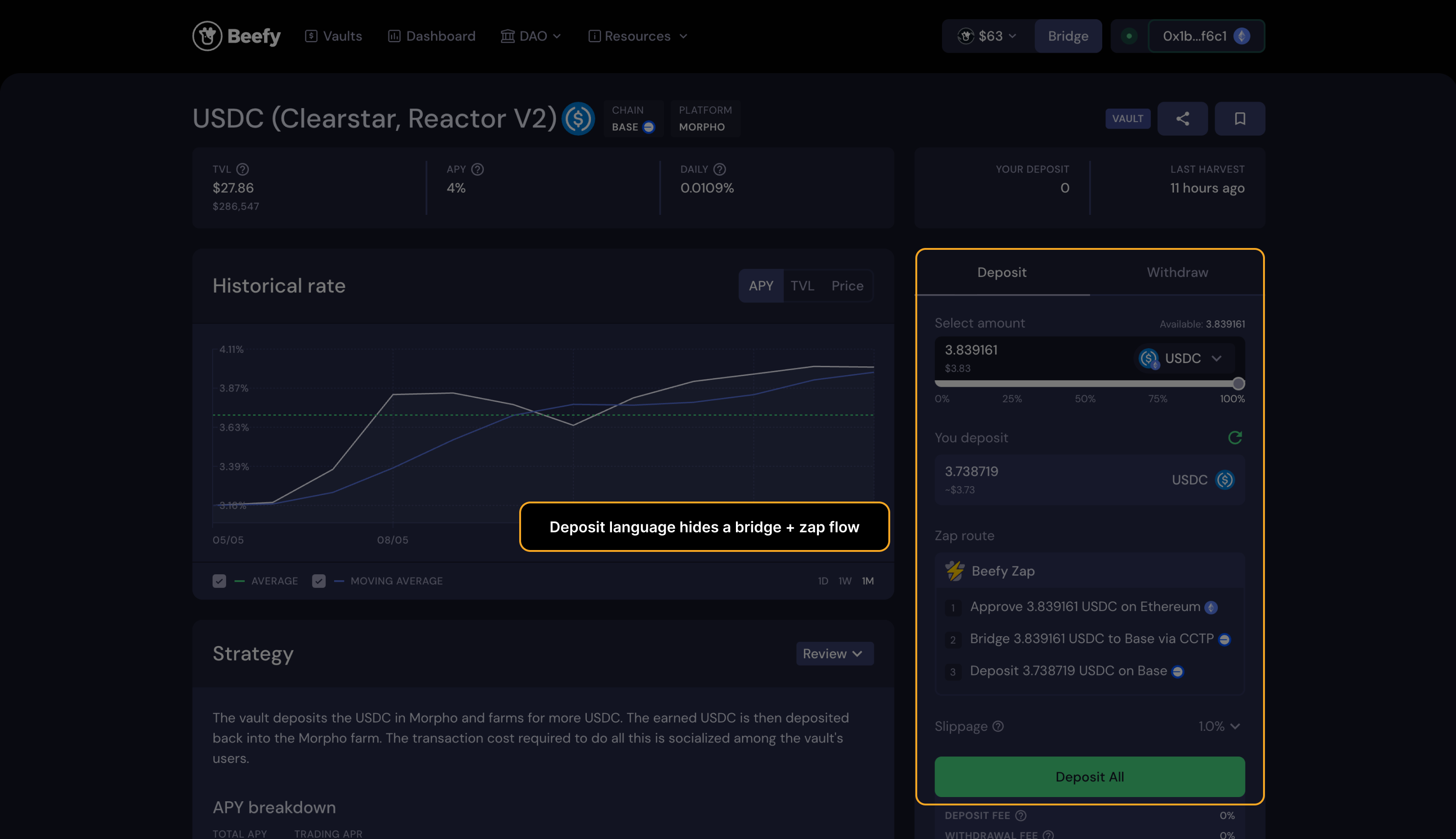

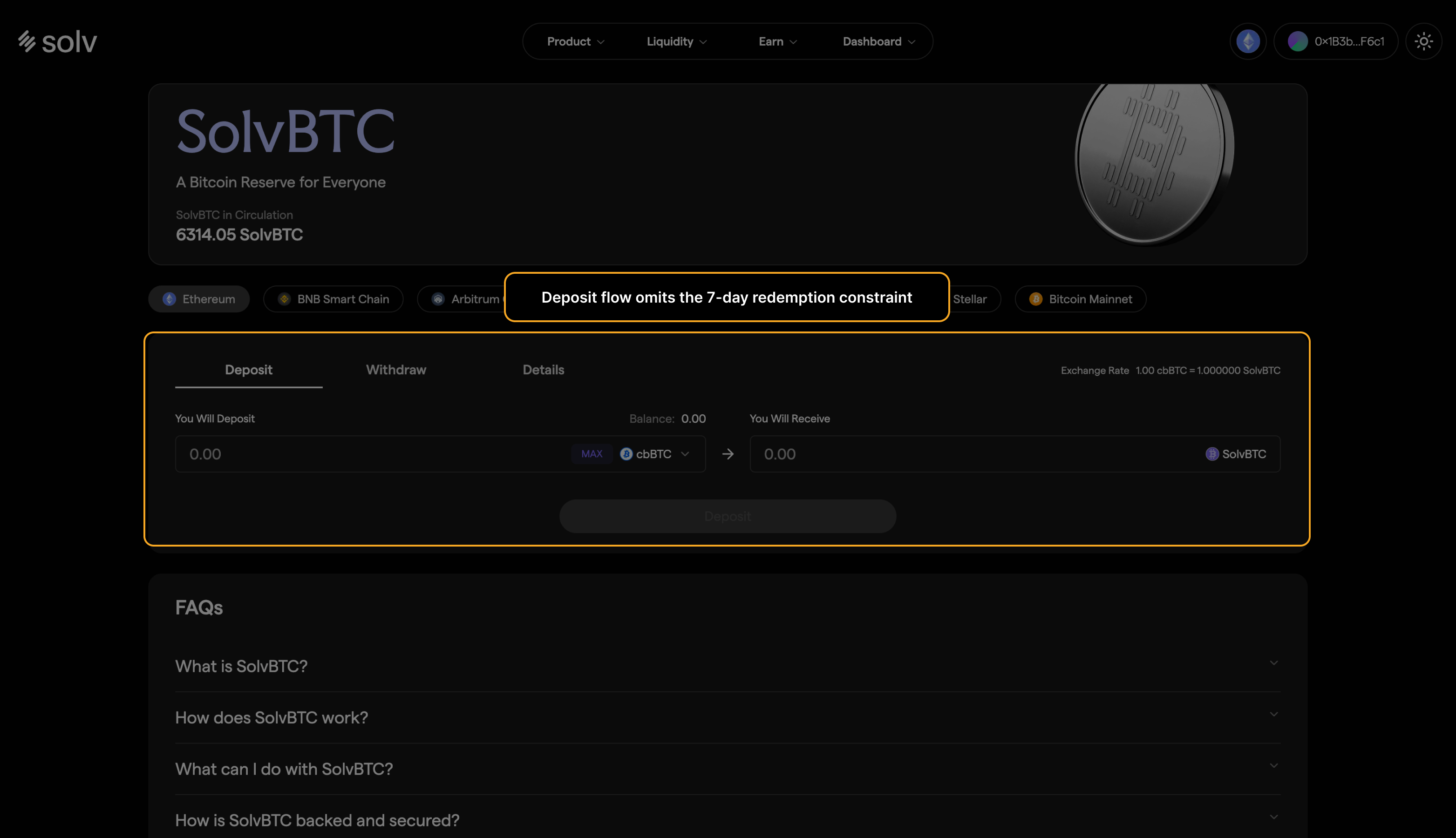

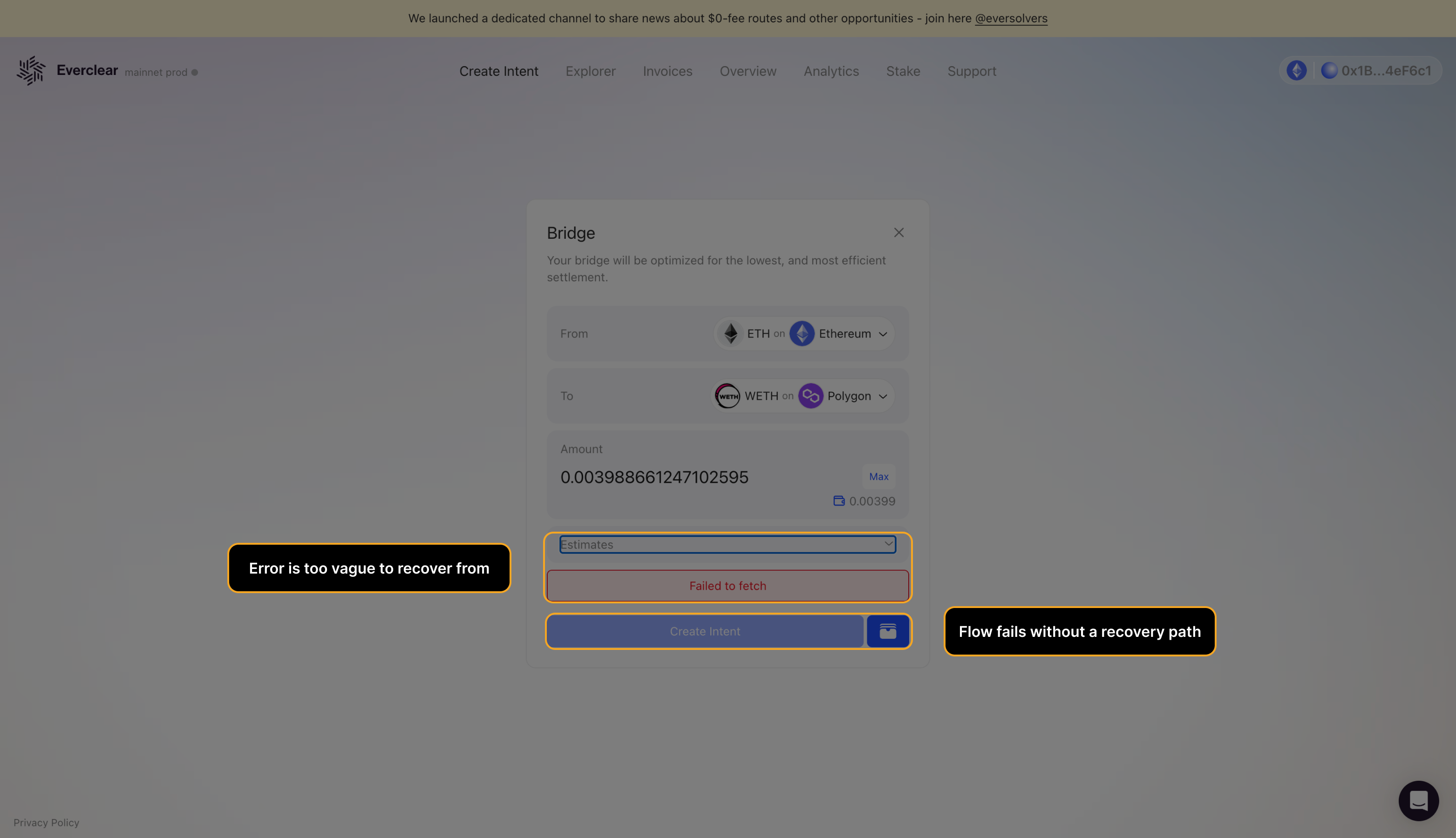

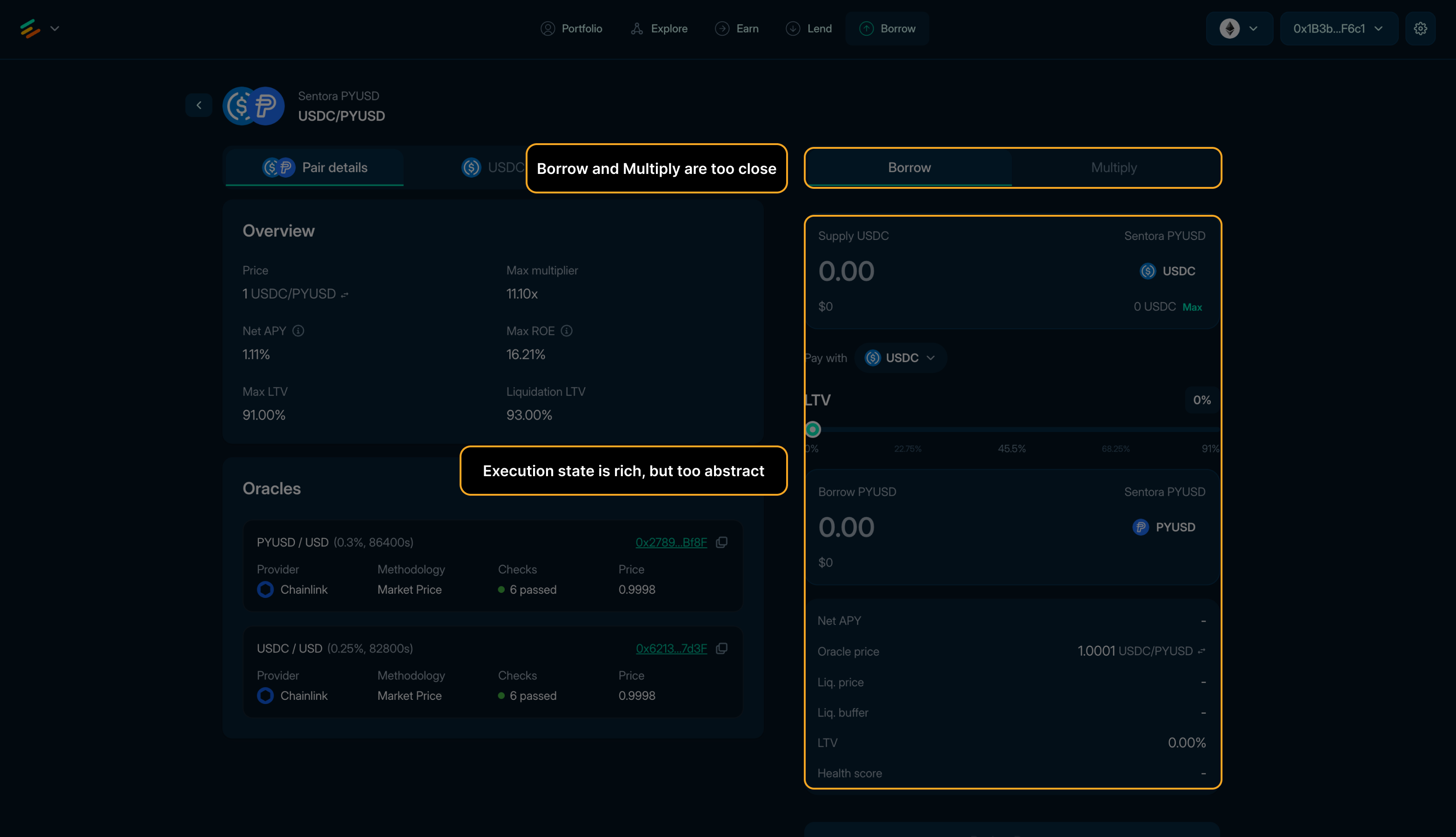

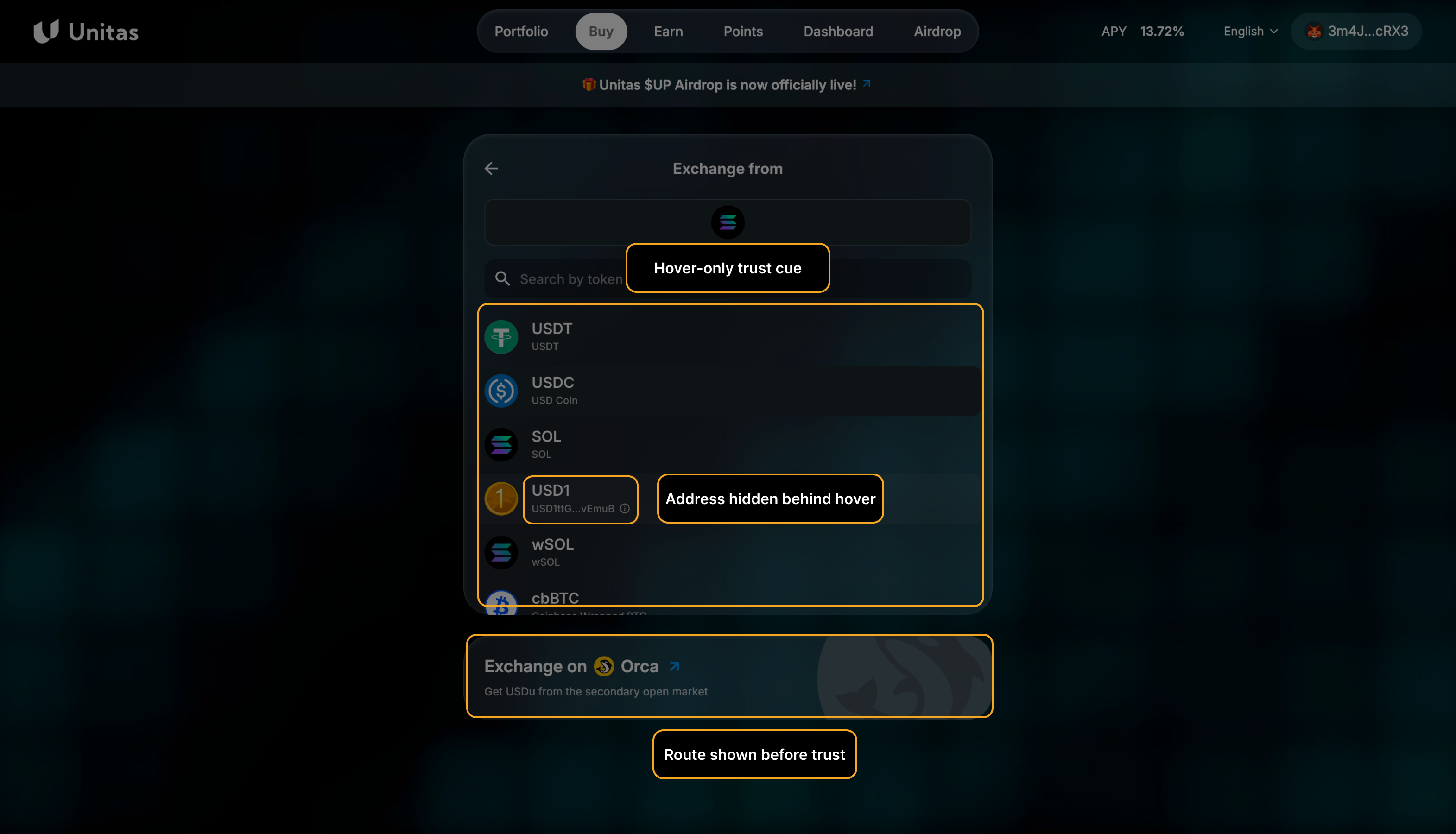

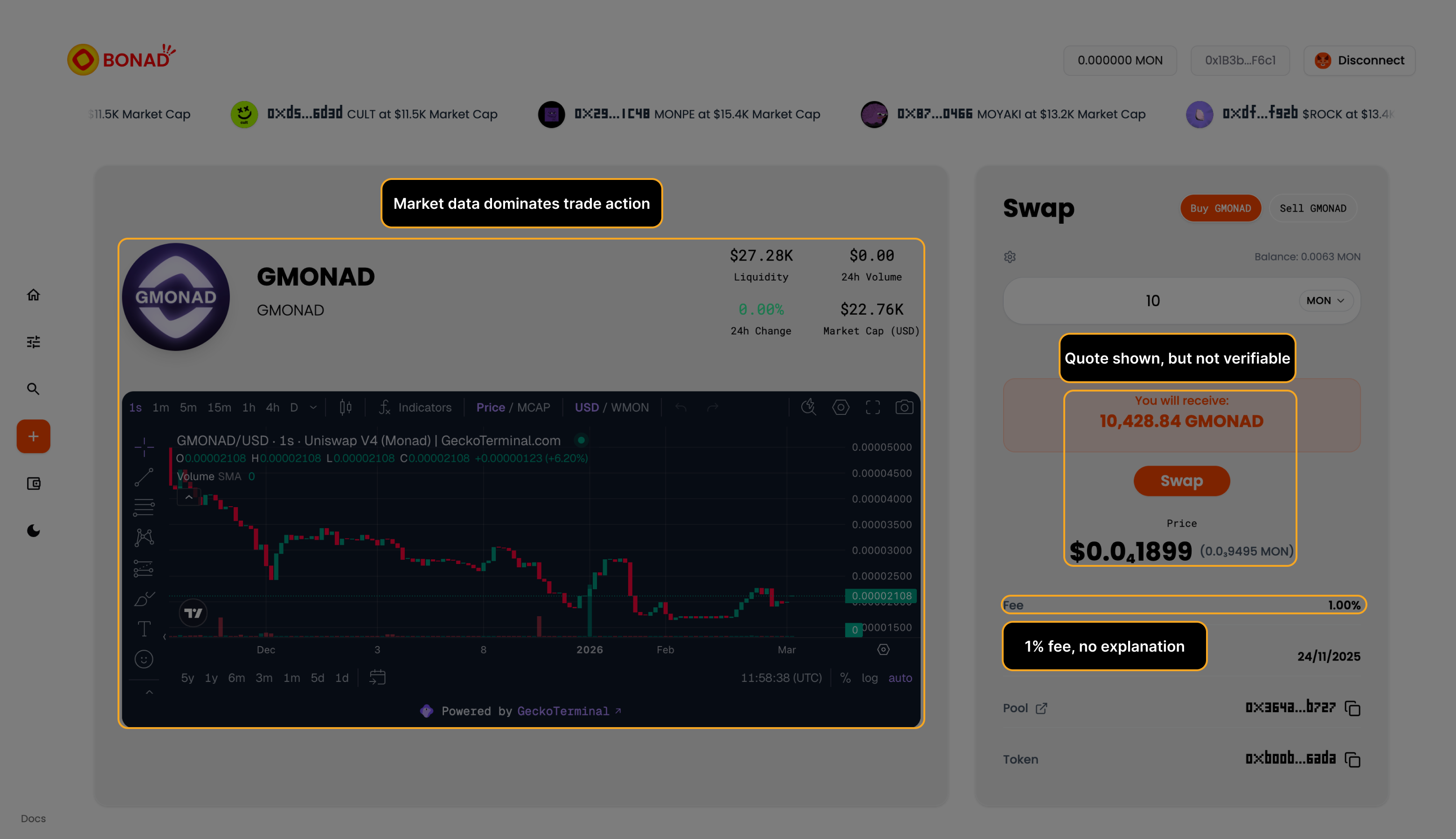

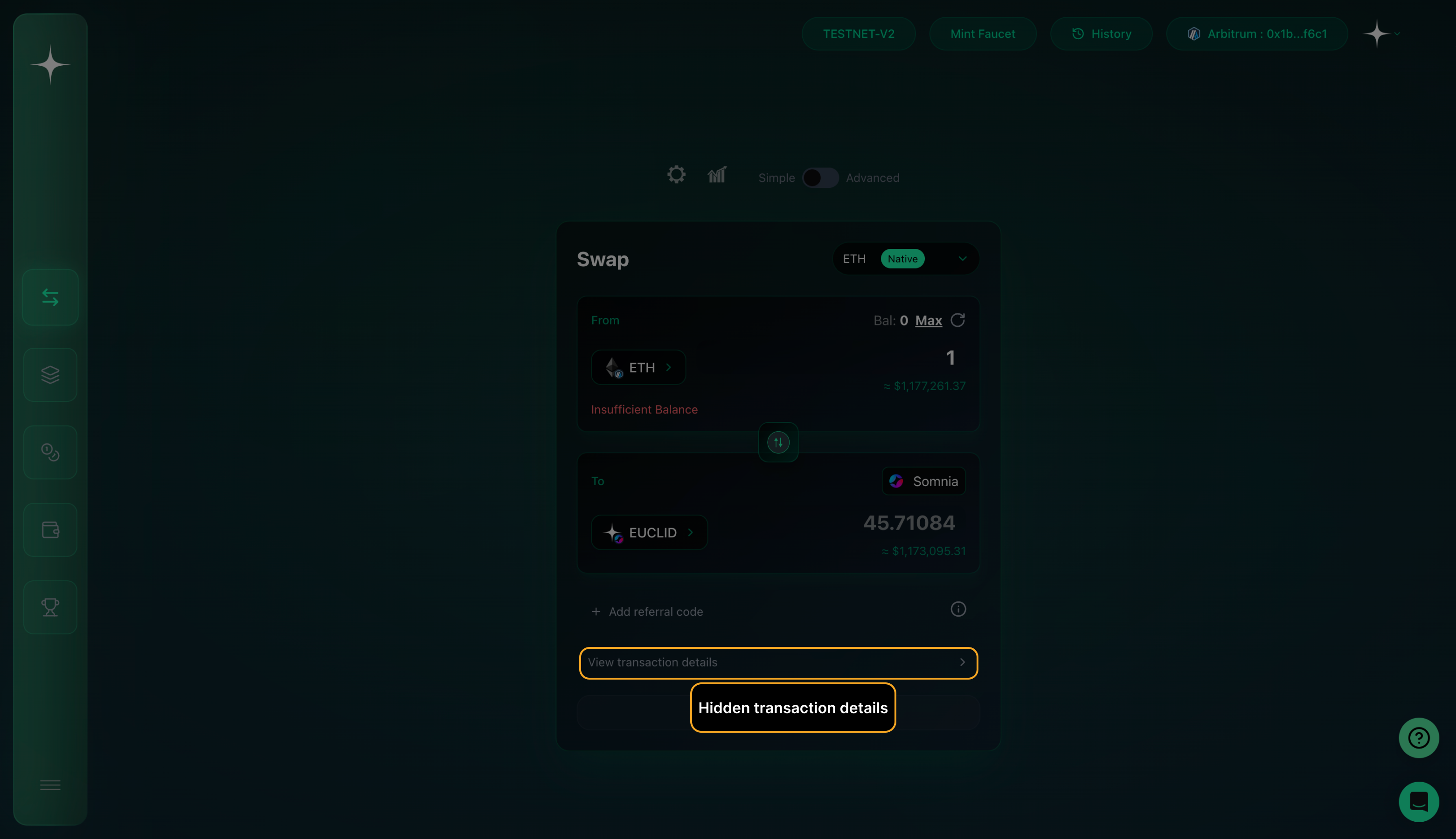

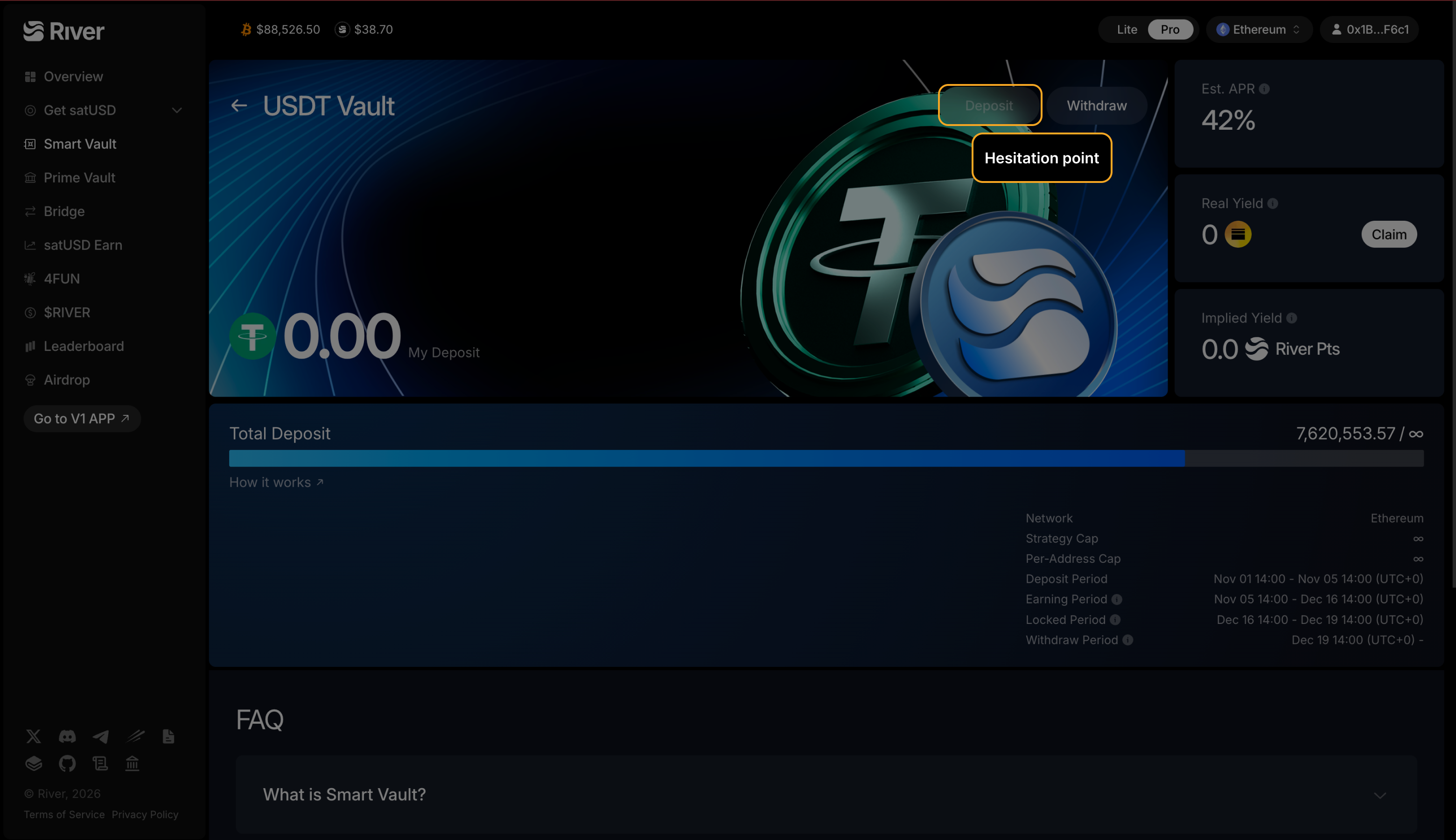

Users abandon when fees, slippage, or route details arrive too late in the decision sequence.

Abandonment

Reduce drop-off at the swap, bridge, and borrow confirmation step. I find the UI ambiguity that makes users hesitate right before they commit.

Deliverable in 1 week: annotated findings, prioritised fixes, and a walkthrough call.

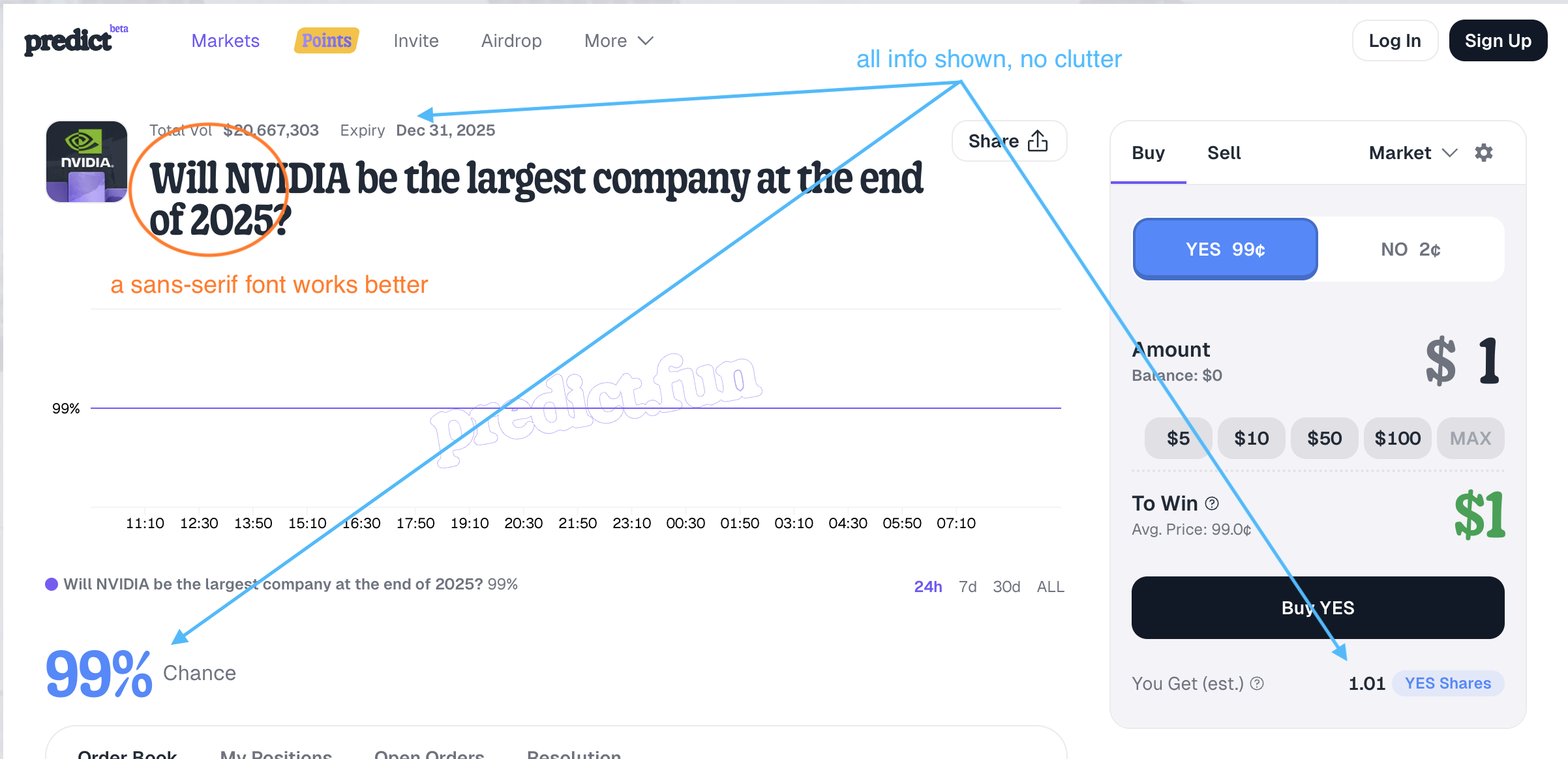

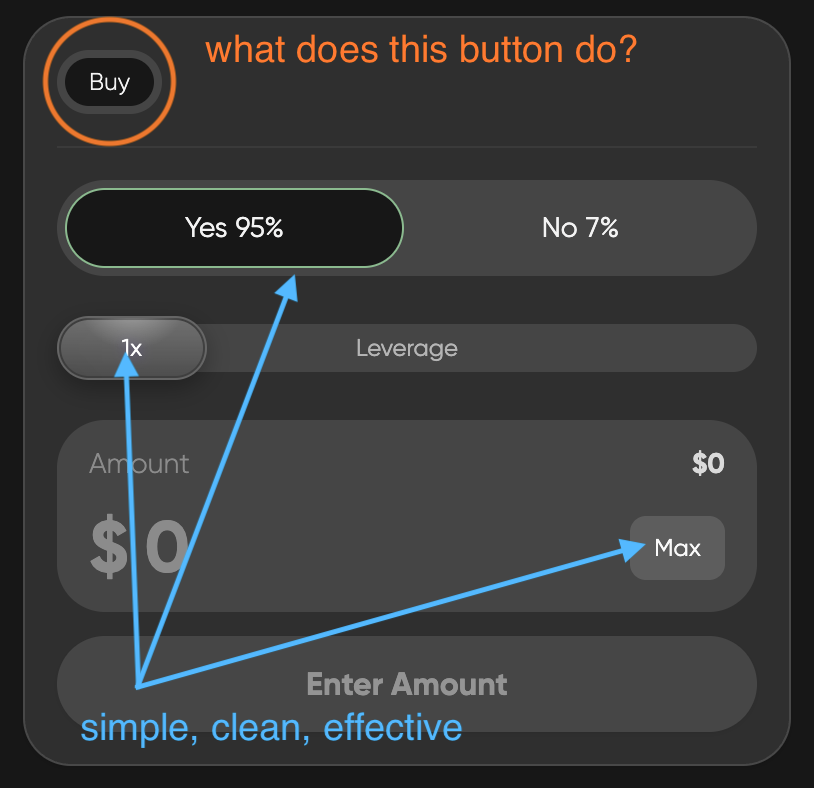

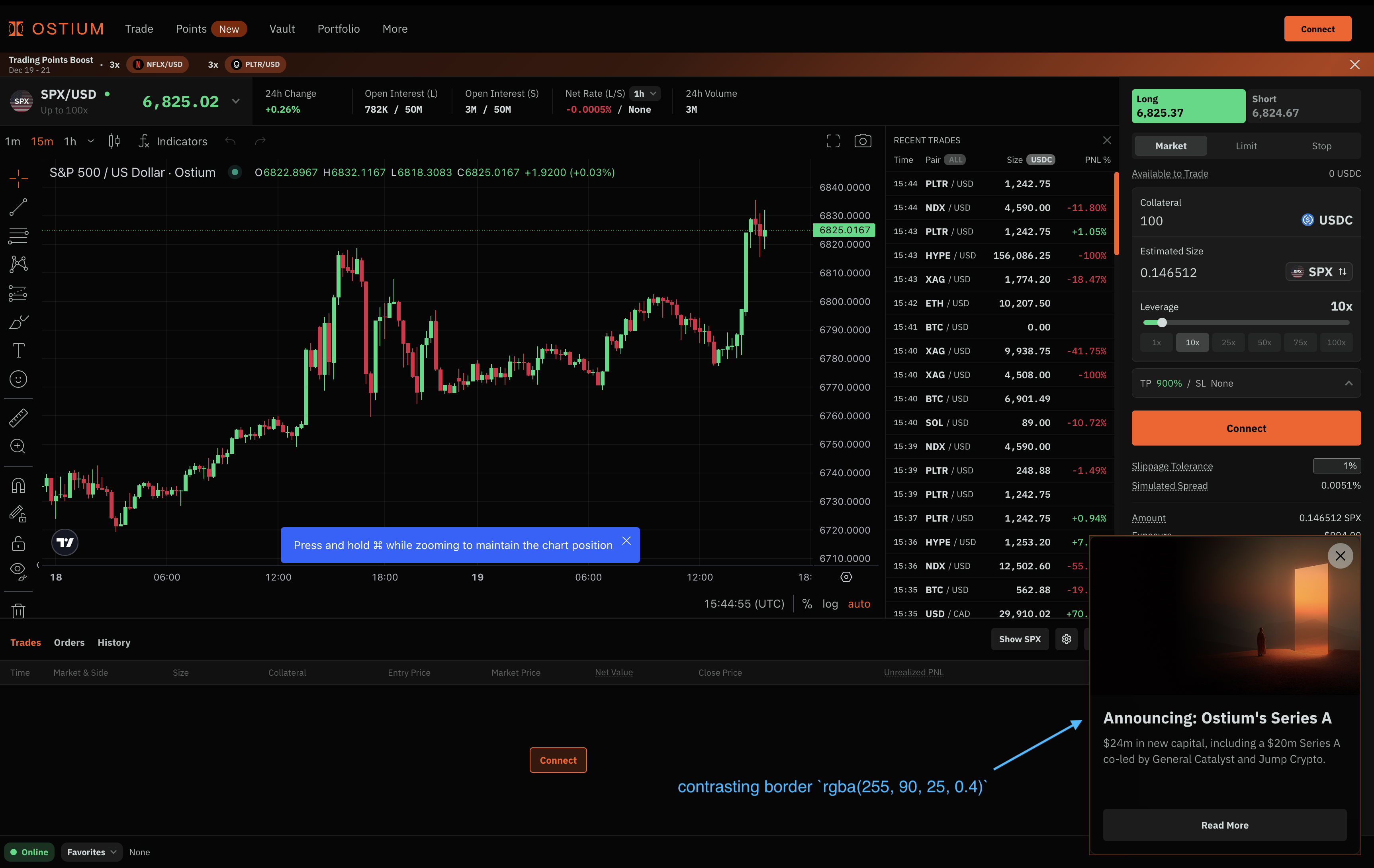

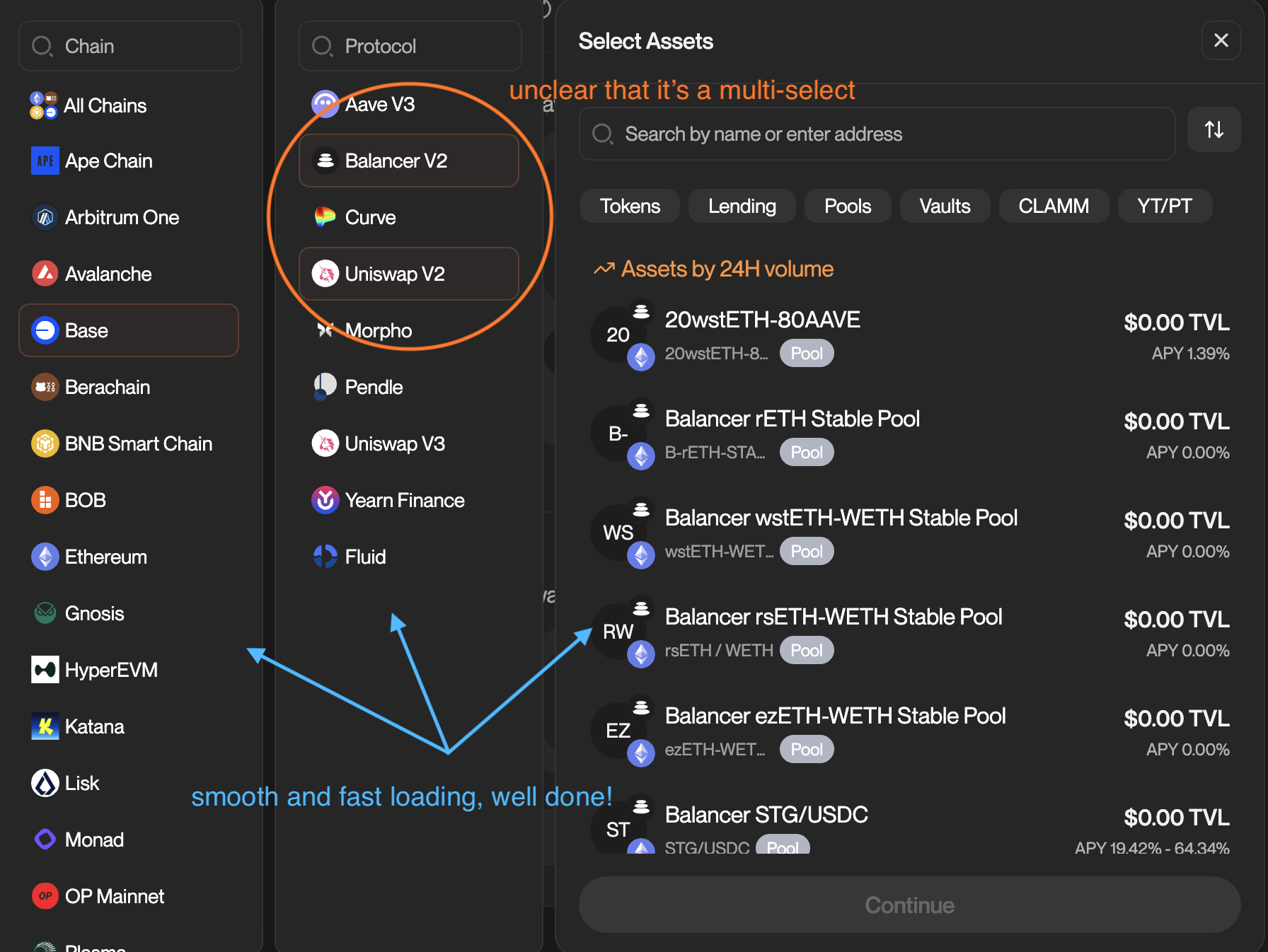

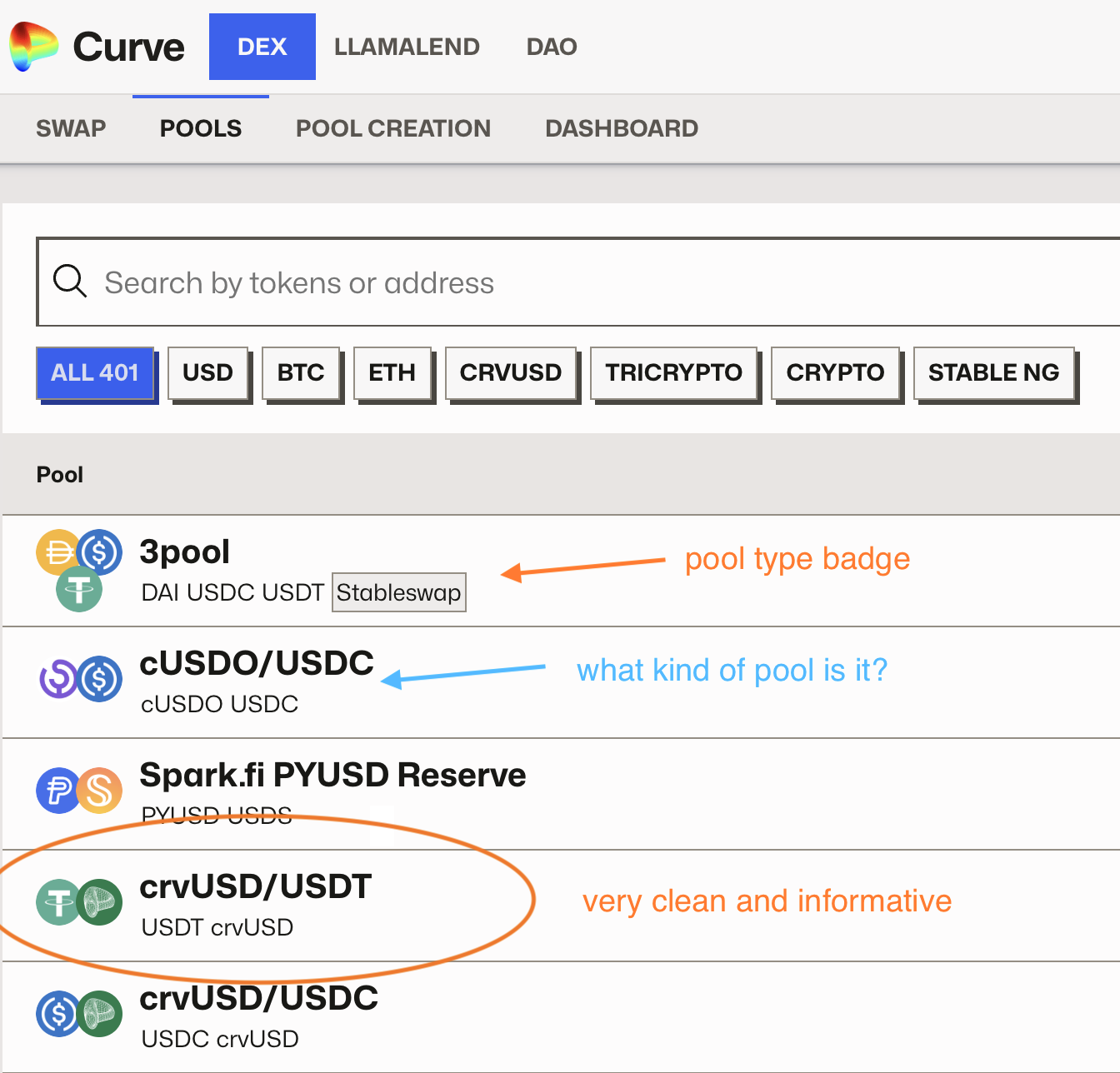

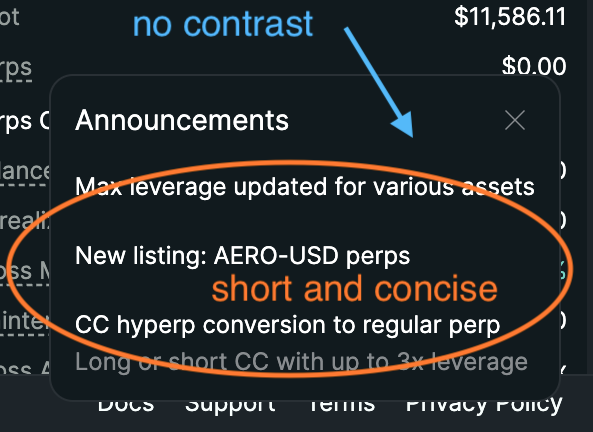

Public teardowns across 95 protocols. No client data.

Featured Audit

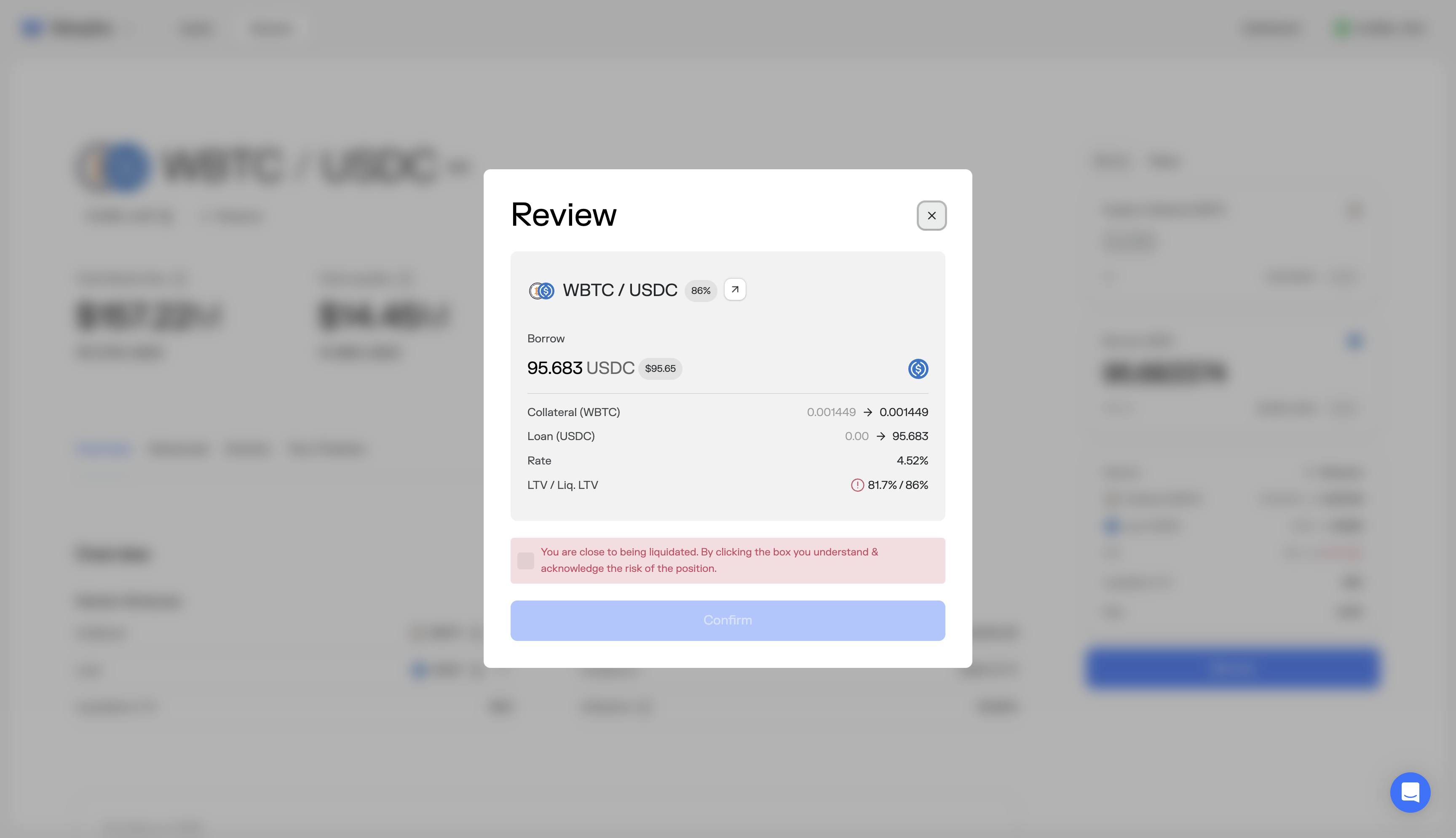

Full execution audit of Morpho Blue's borrow flow on Ethereum. Covers market selection, LTV risk communication, wallet approval anxiety, and confirmation screen design. Includes annotated screenshots and implementation-ready fixes.

Read full audit →

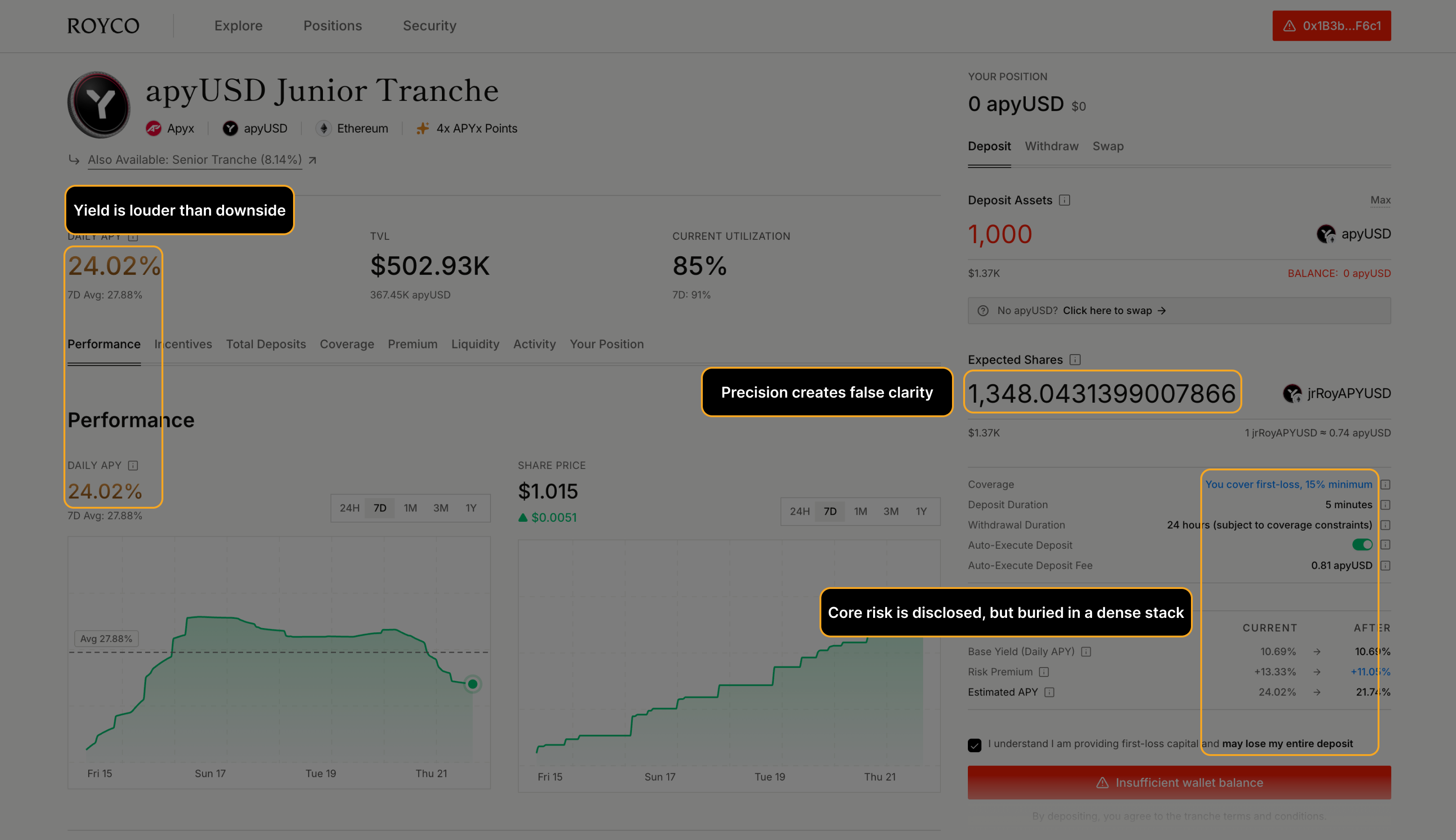

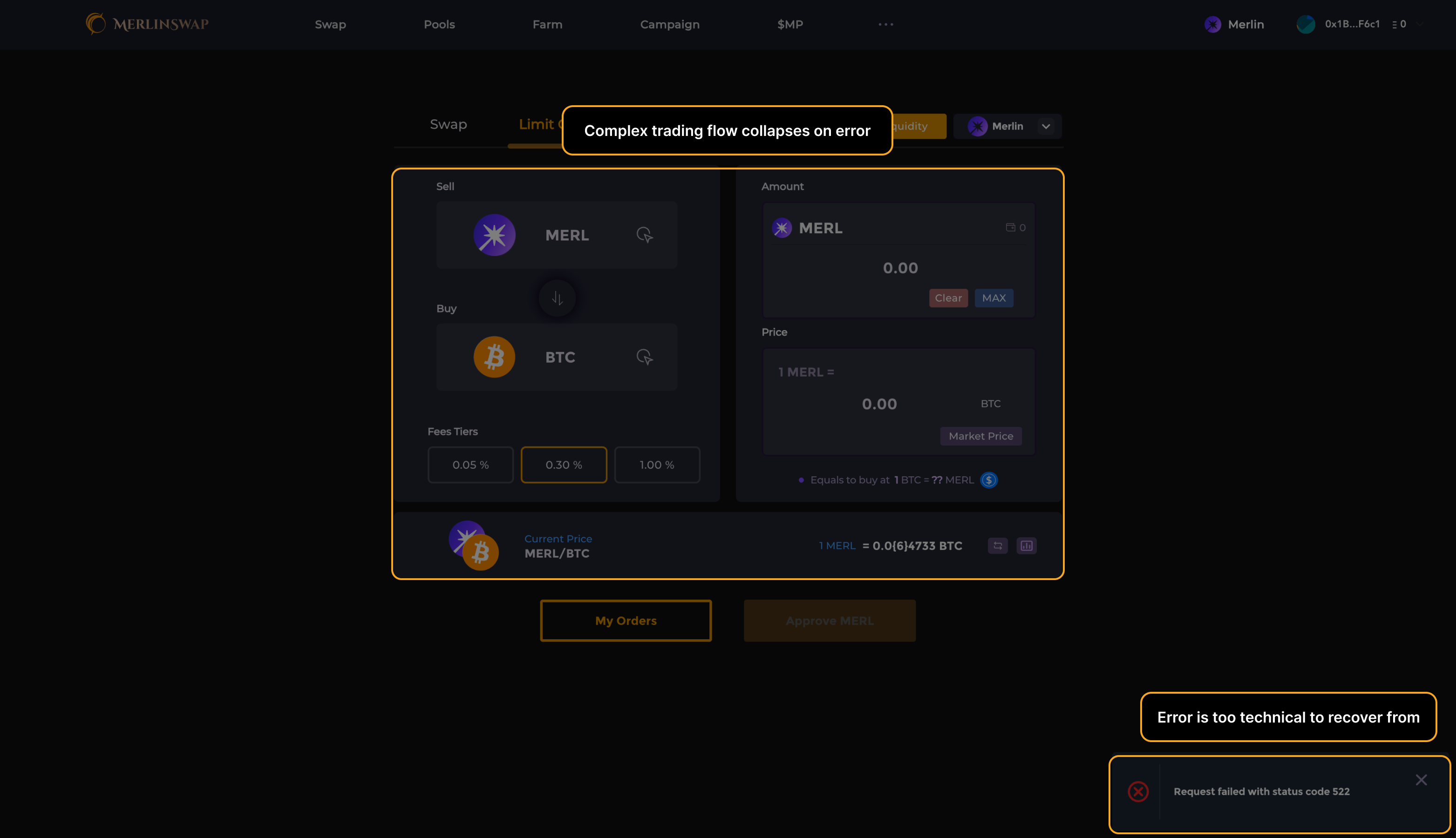

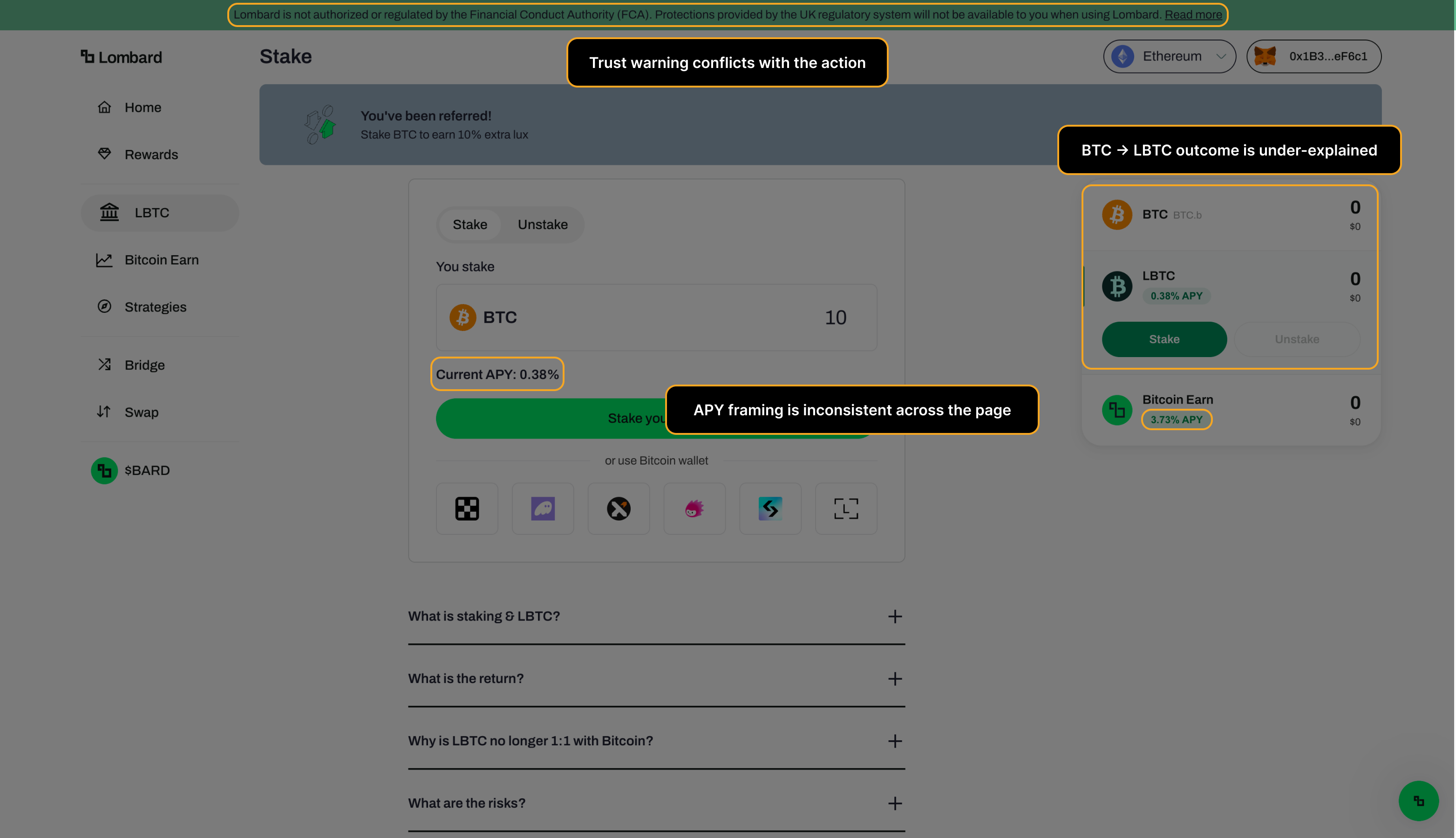

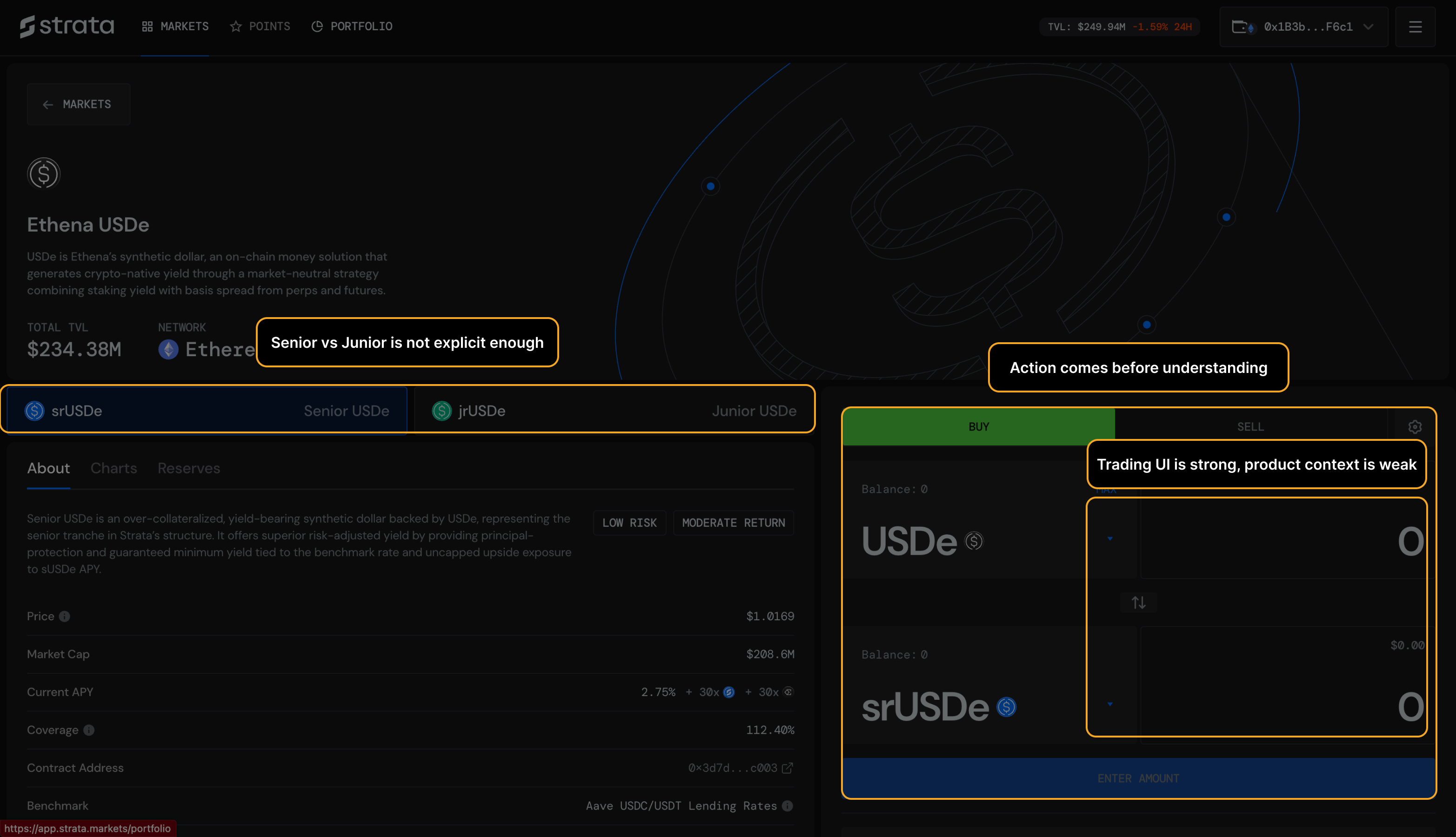

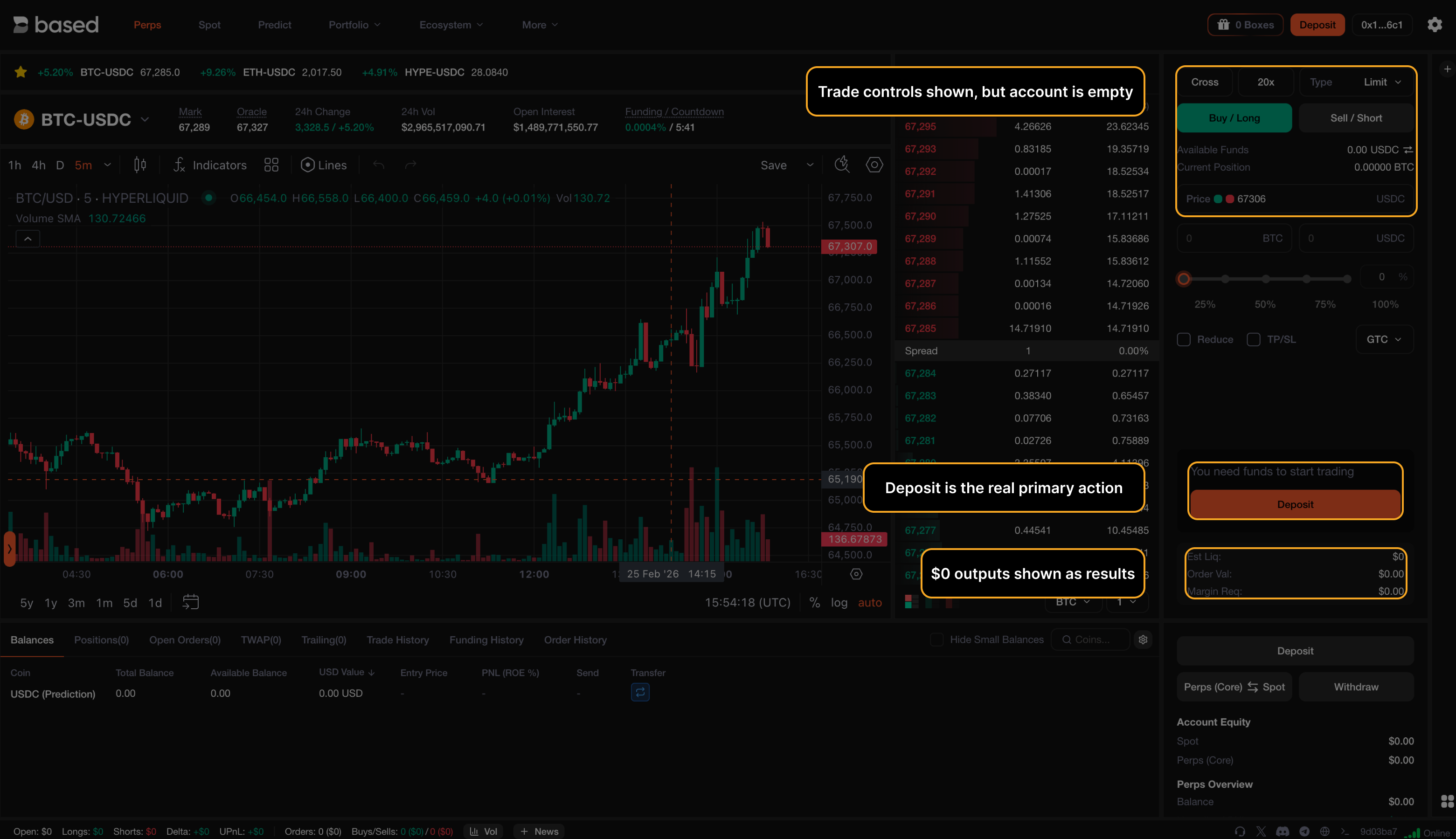

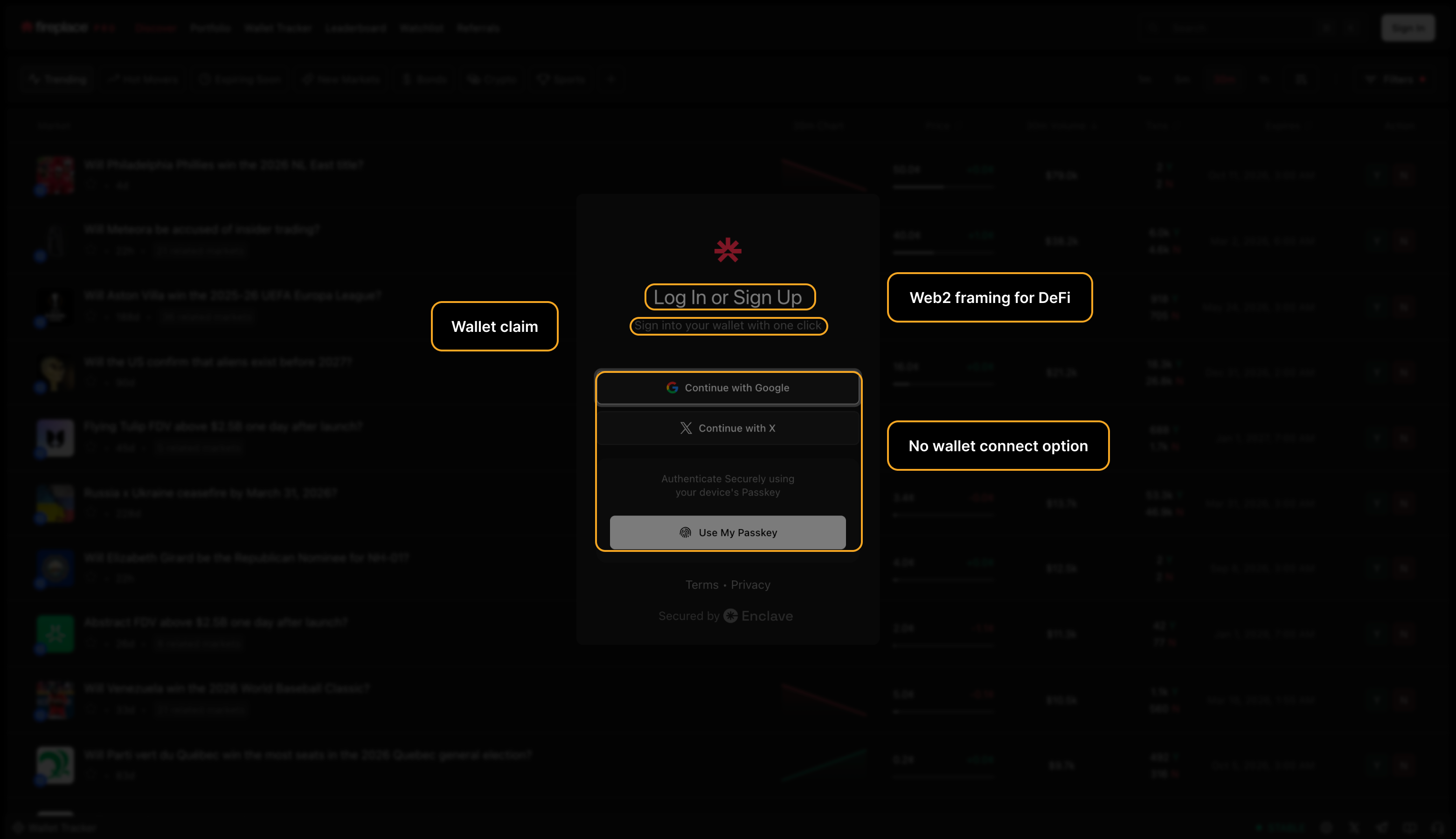

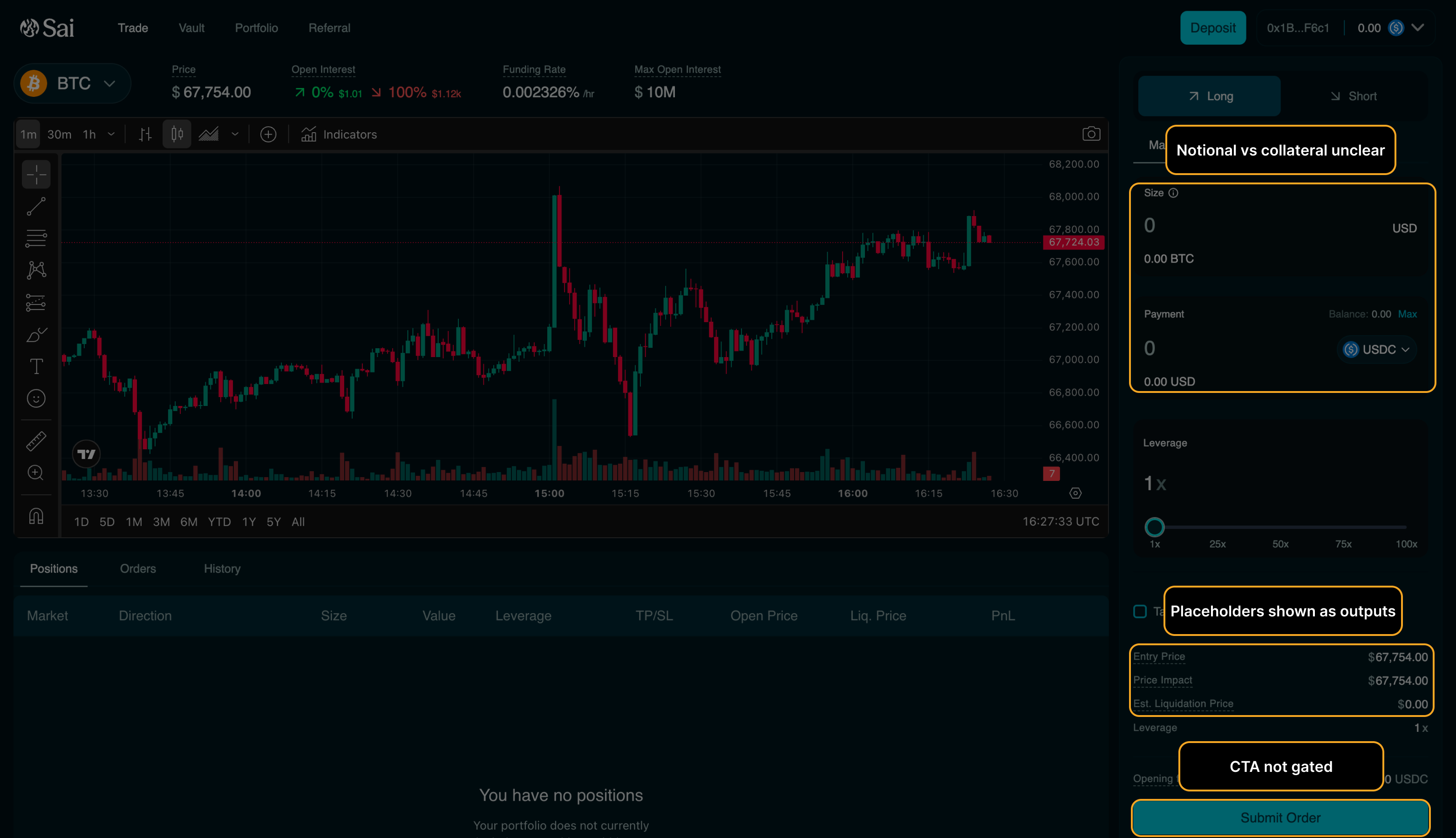

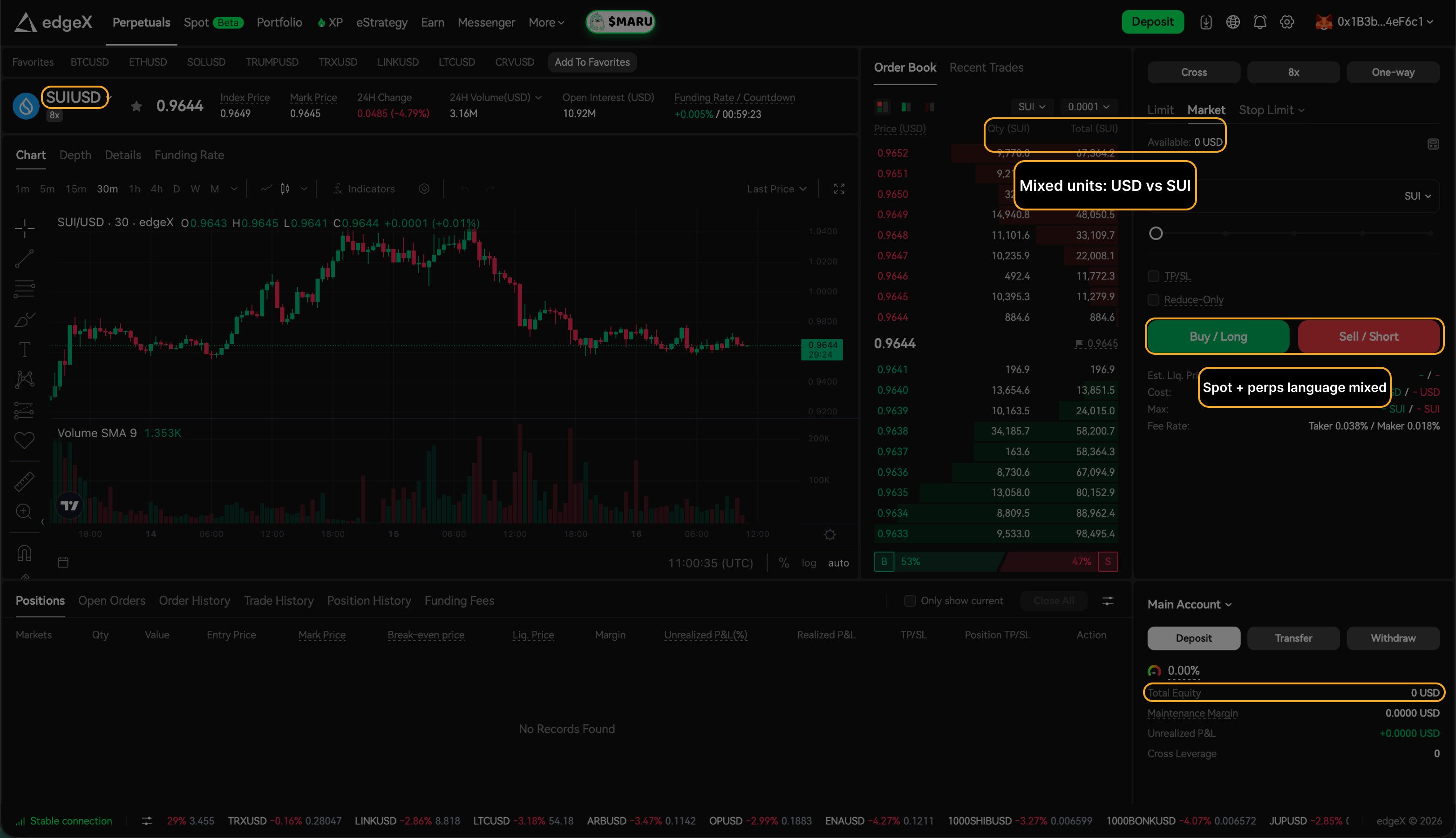

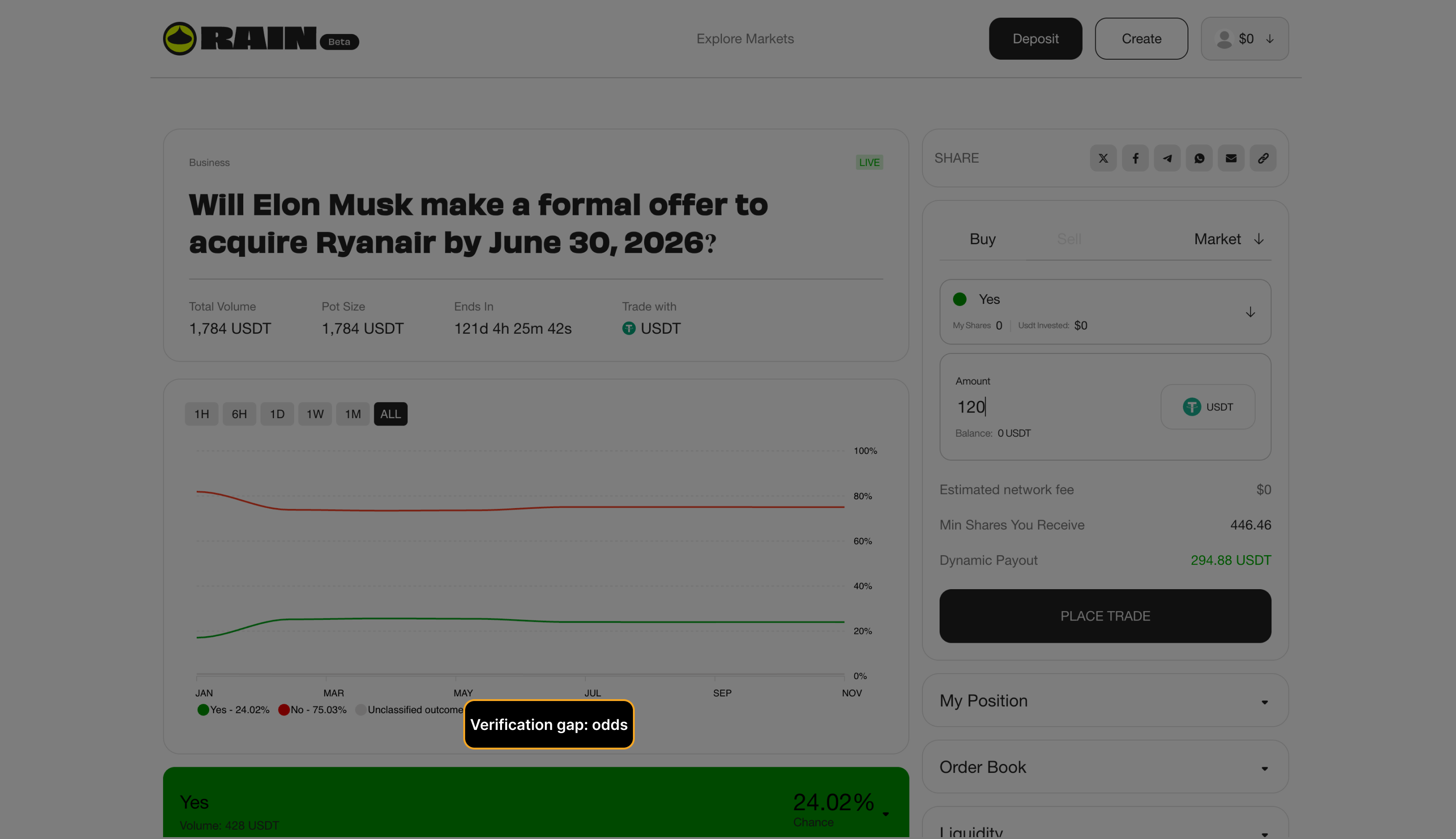

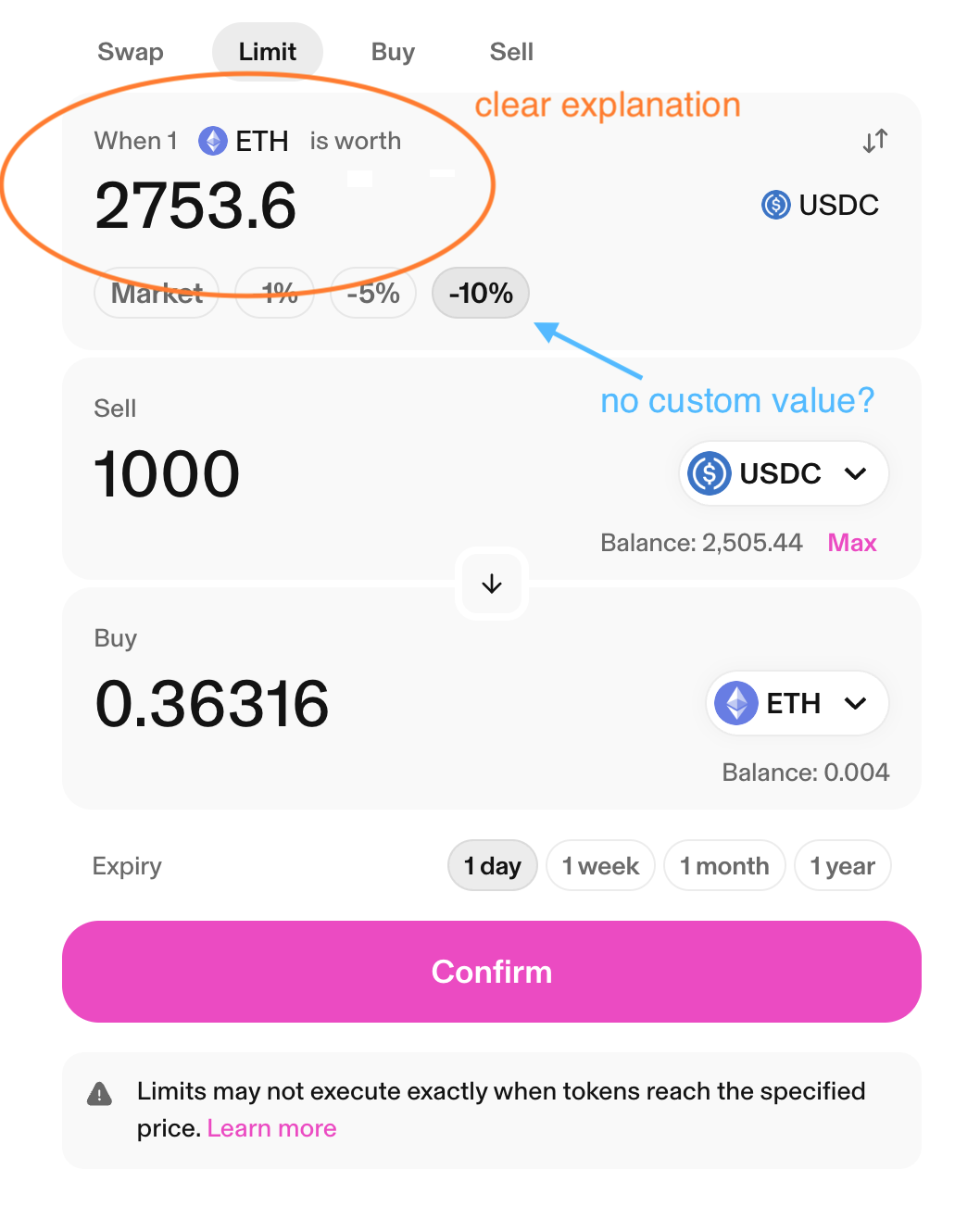

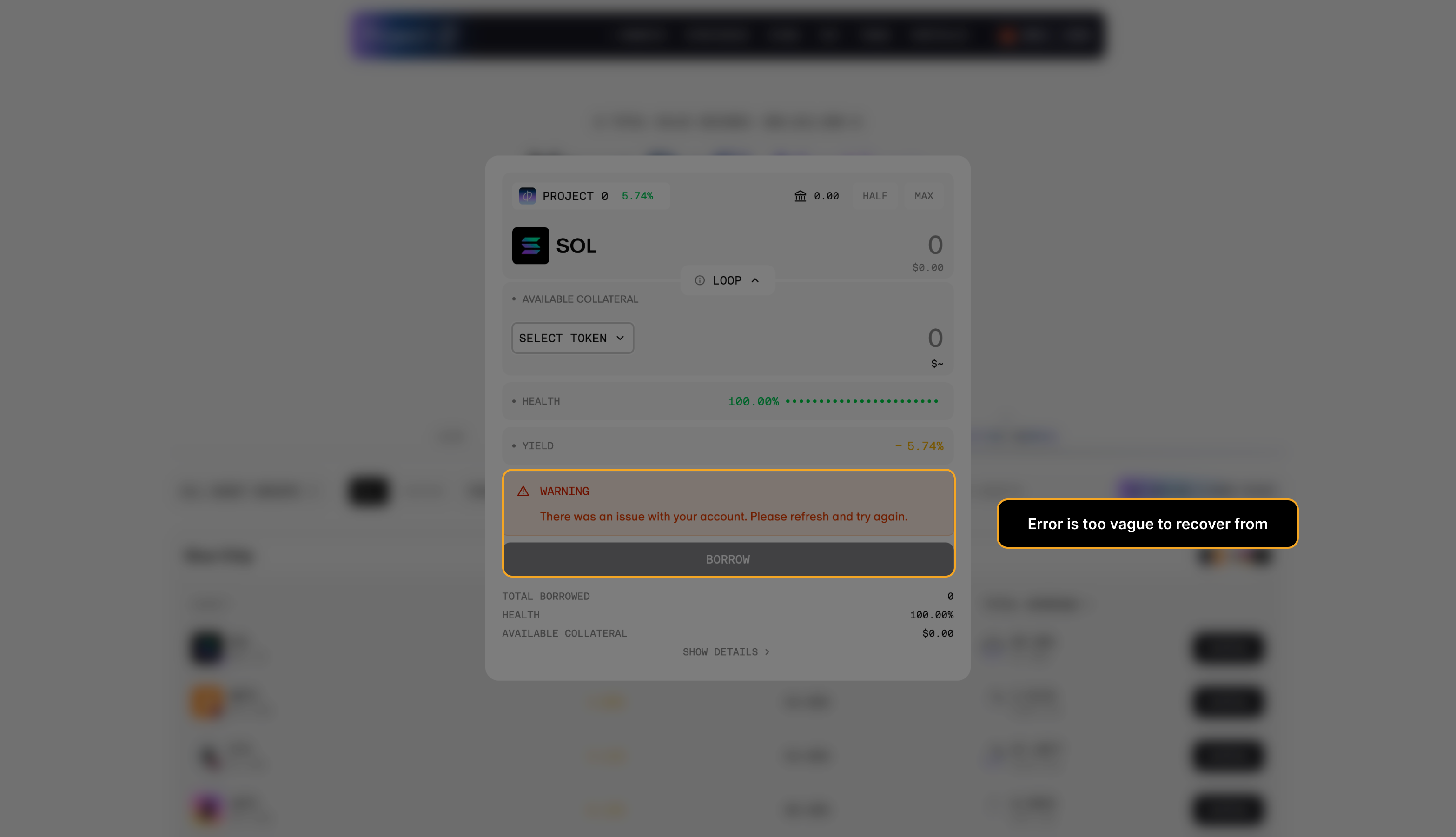

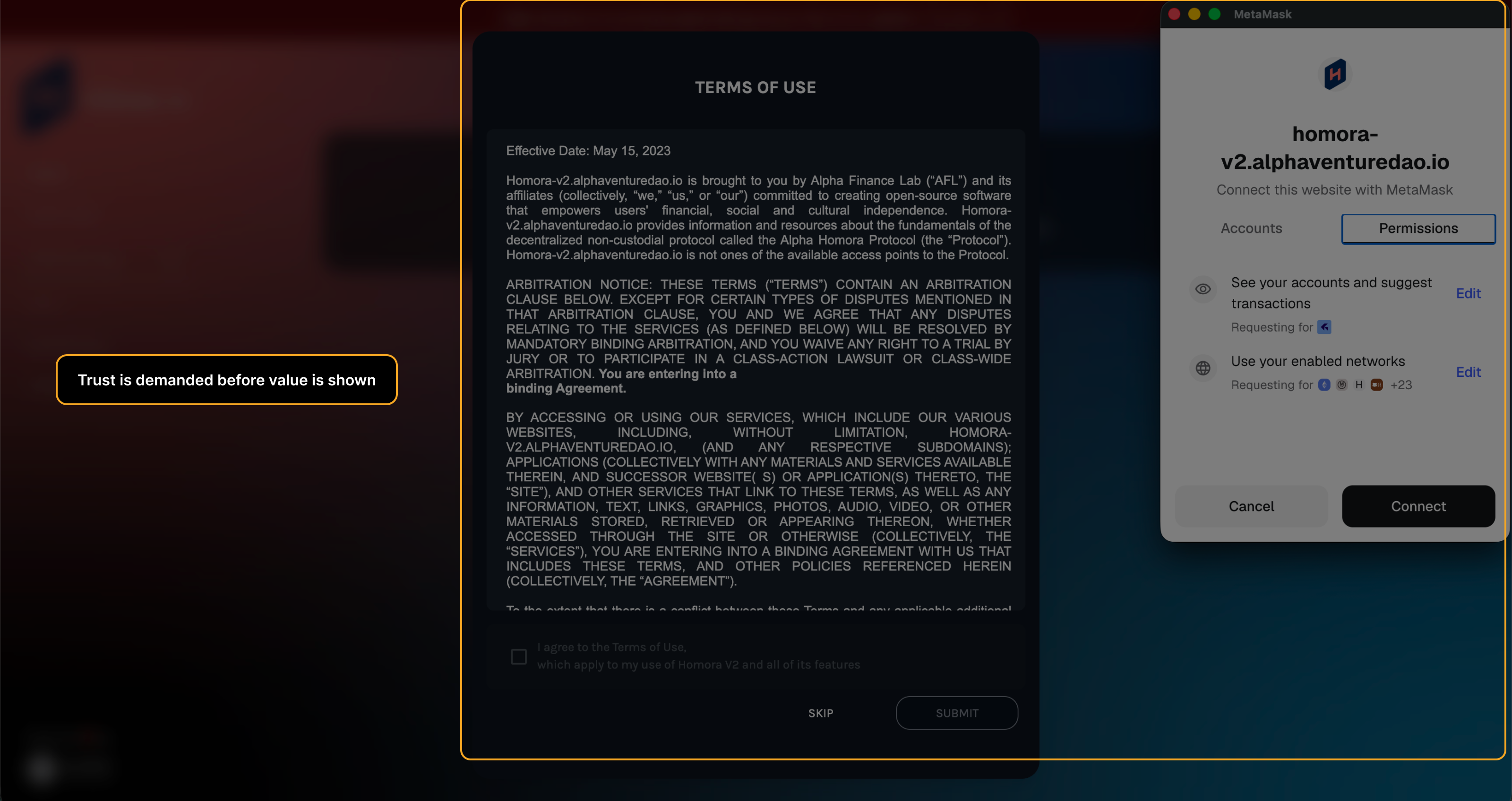

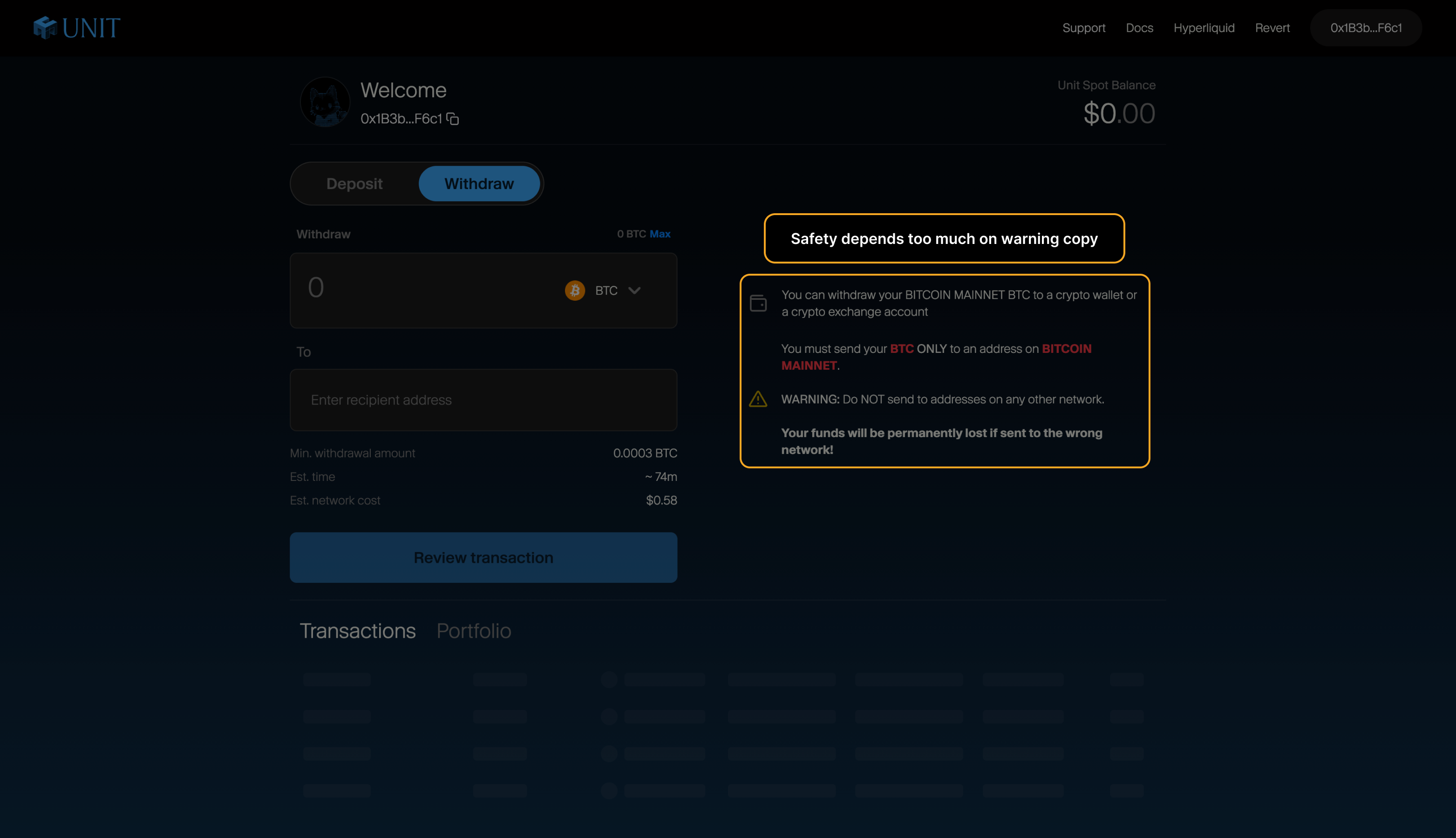

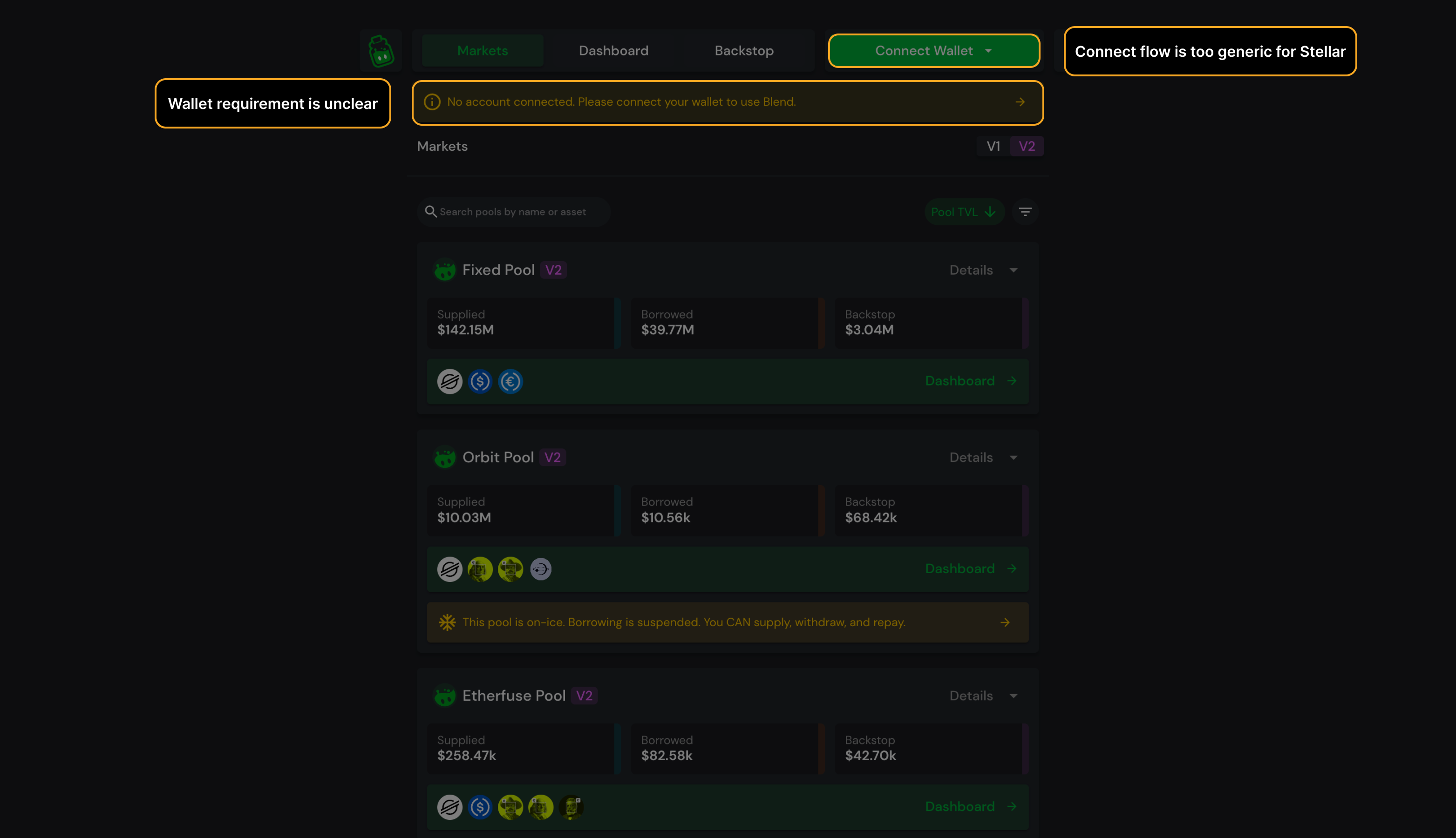

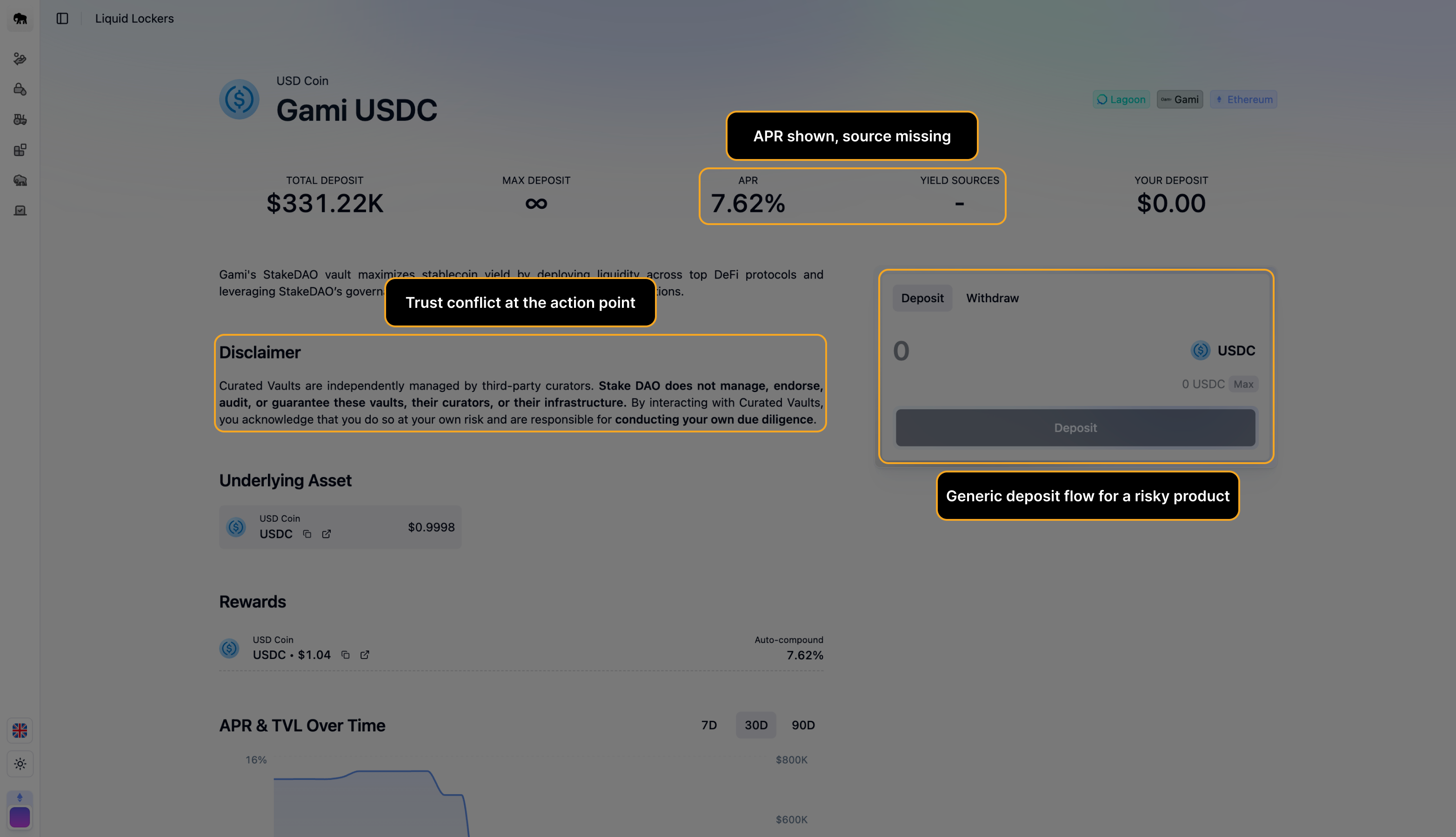

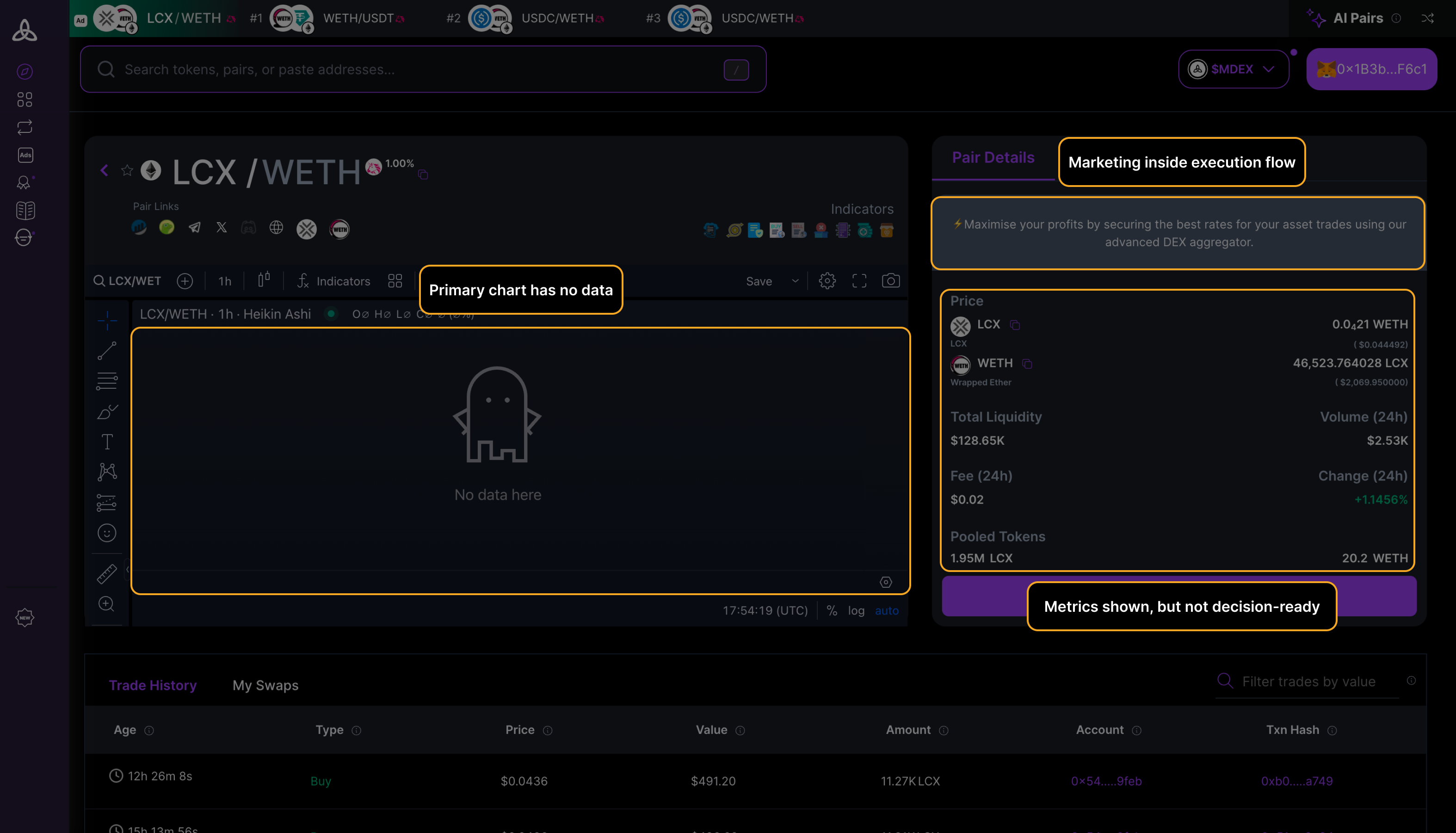

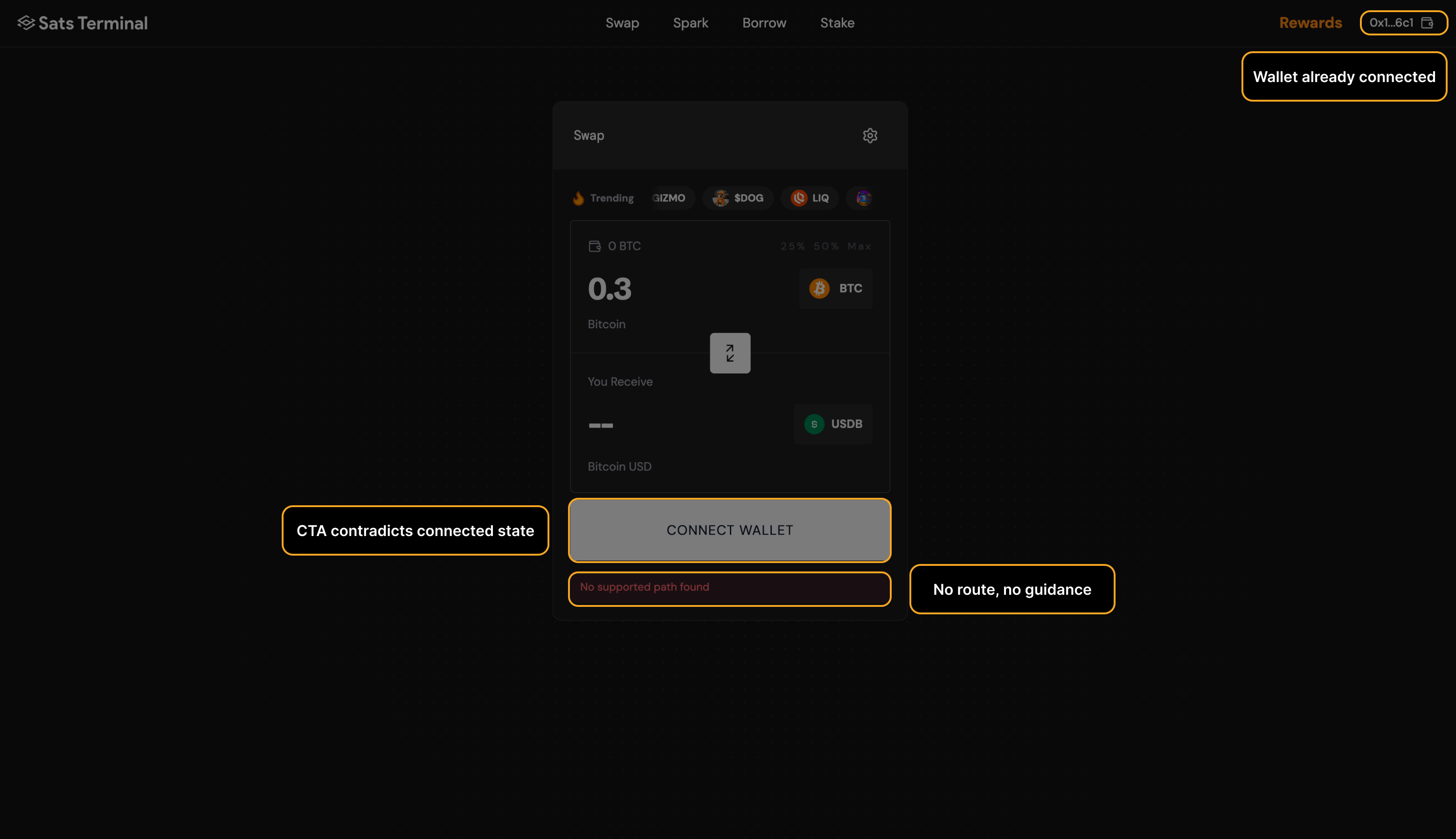

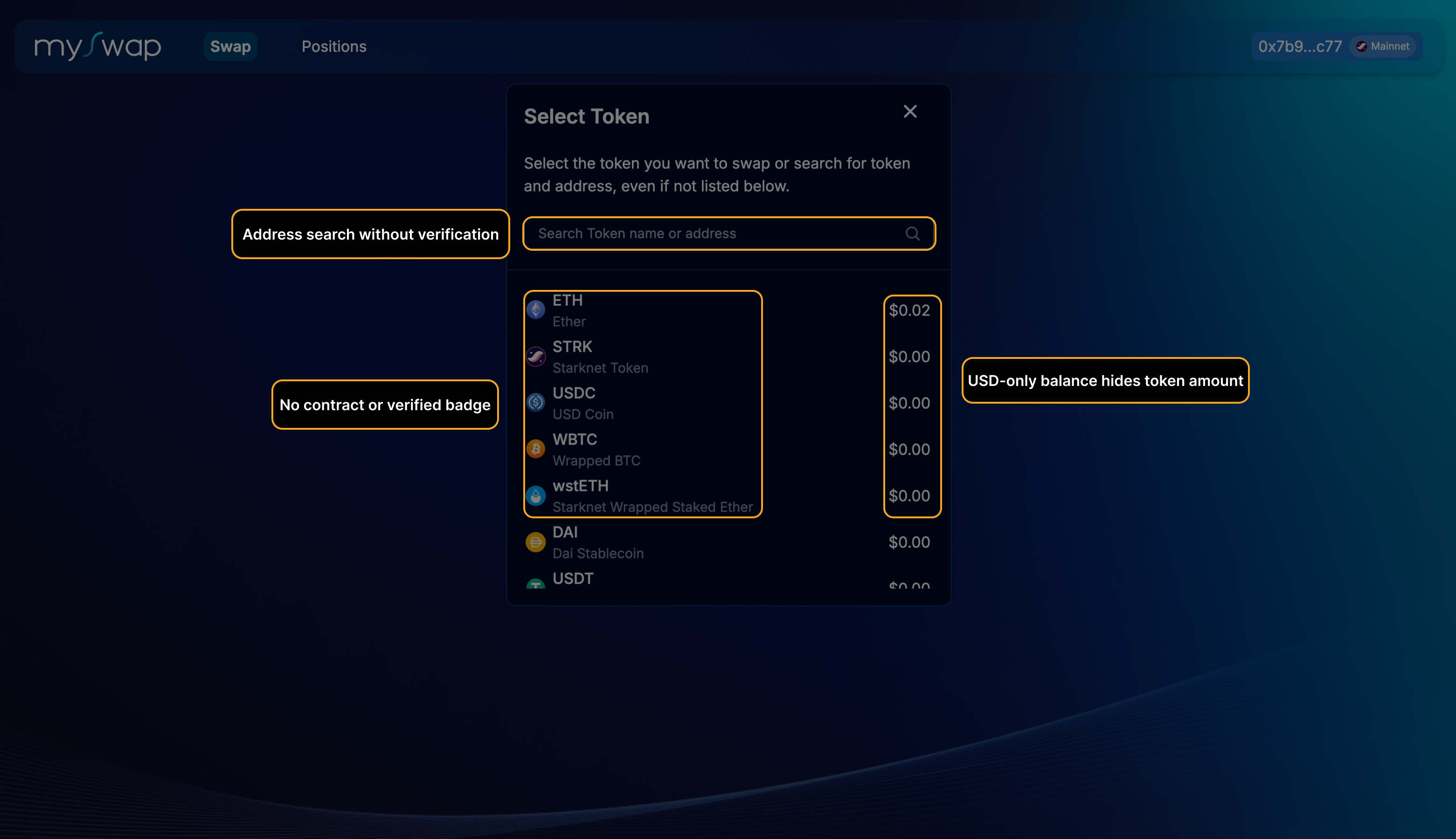

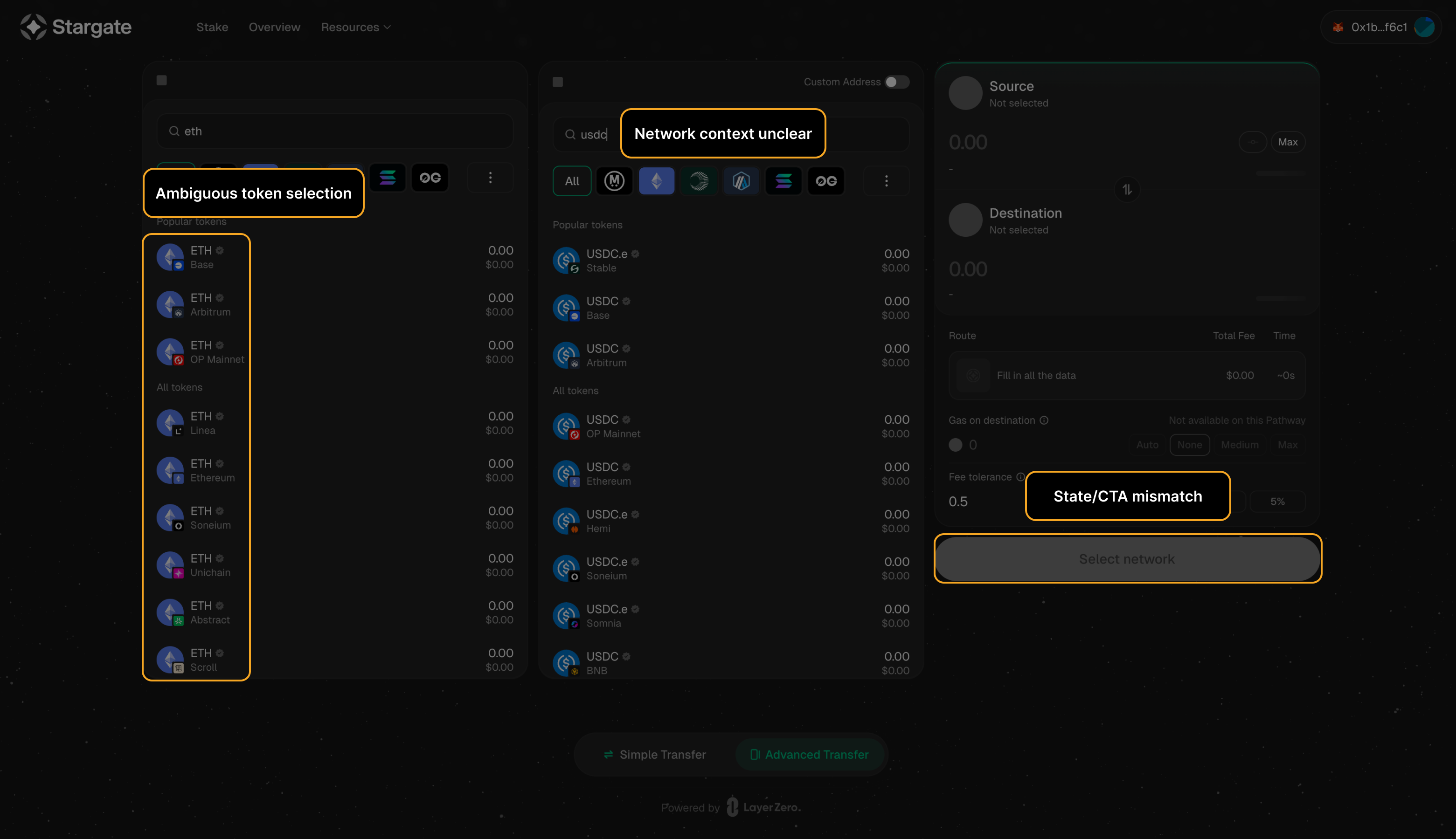

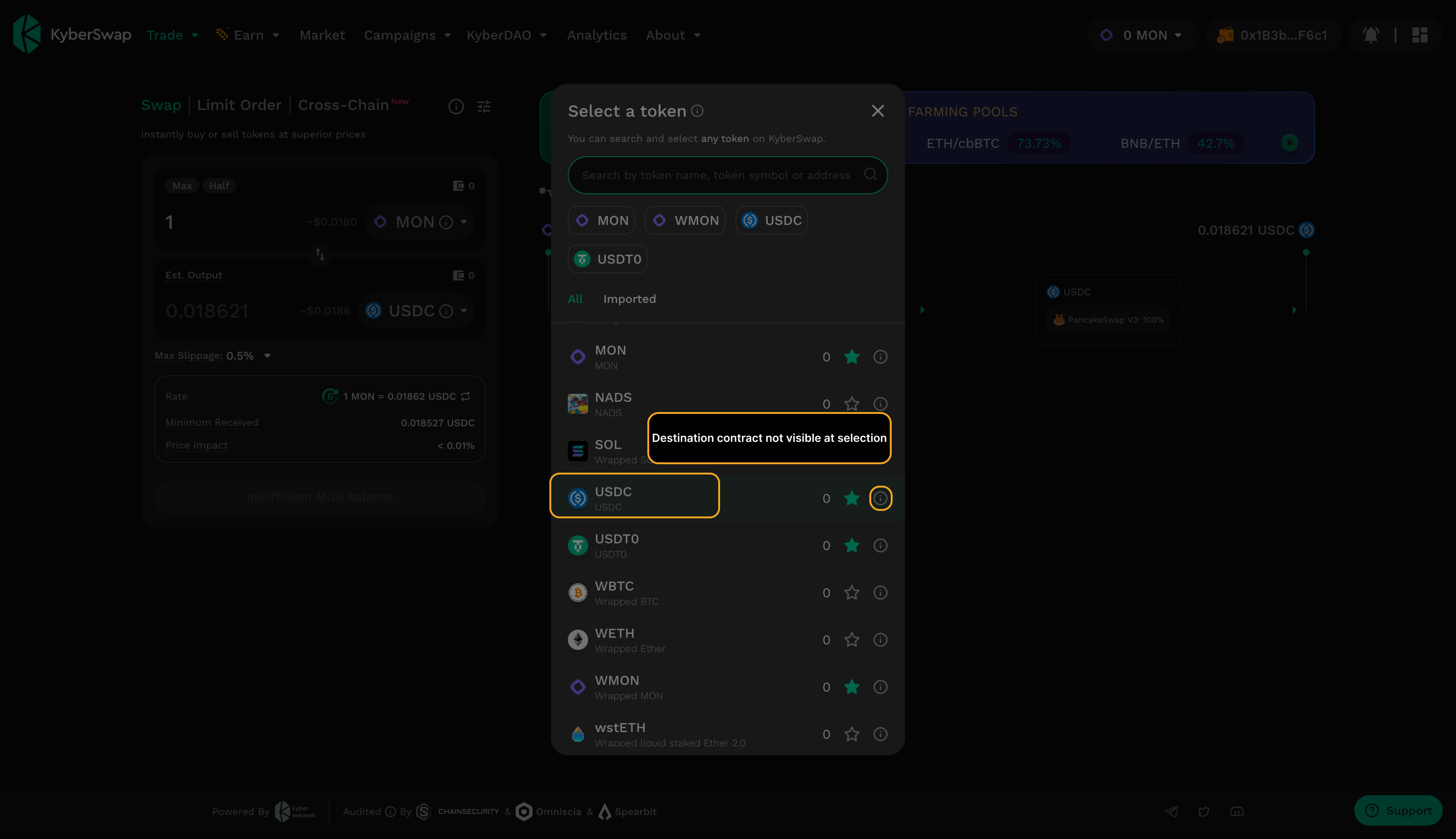

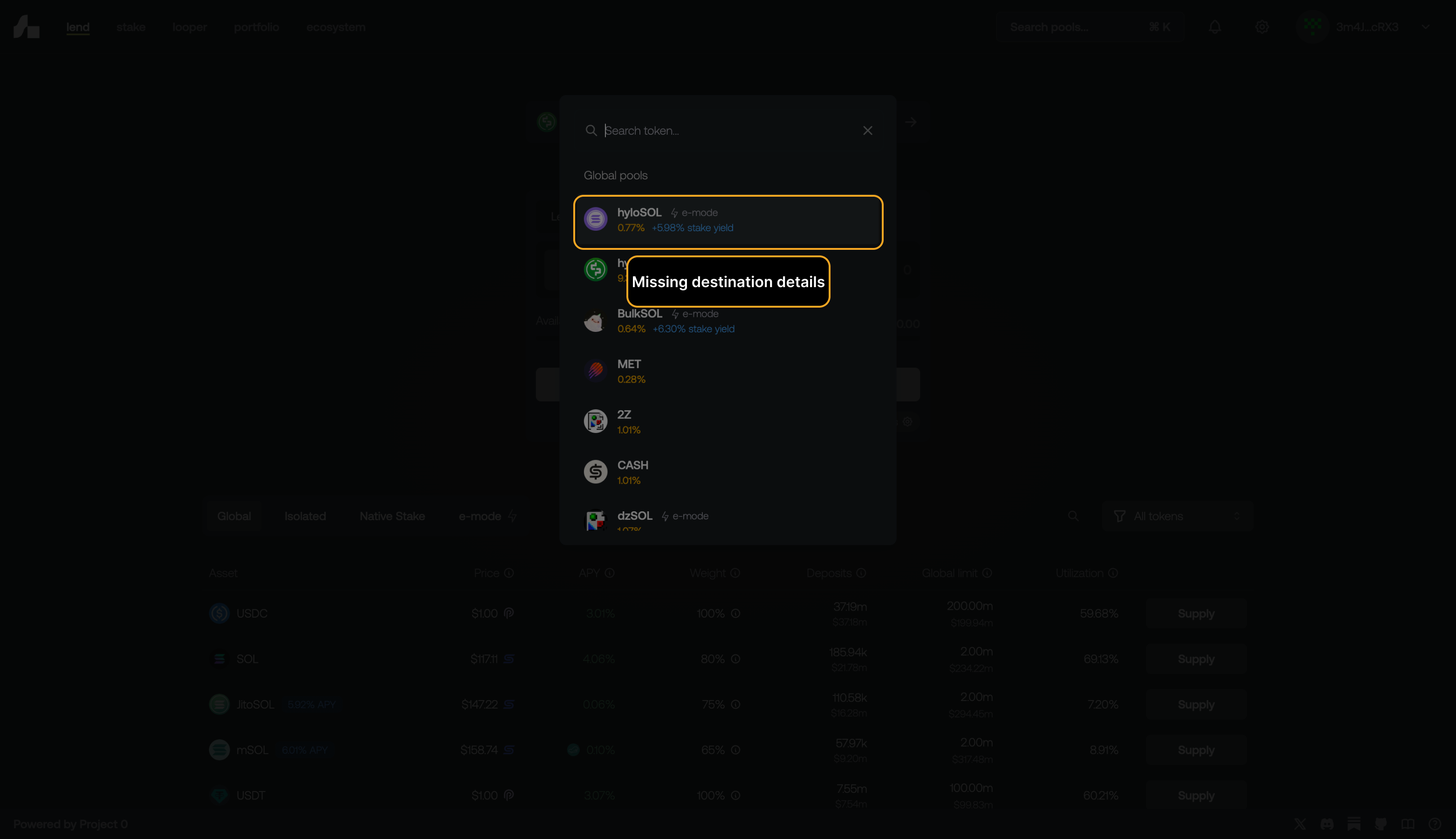

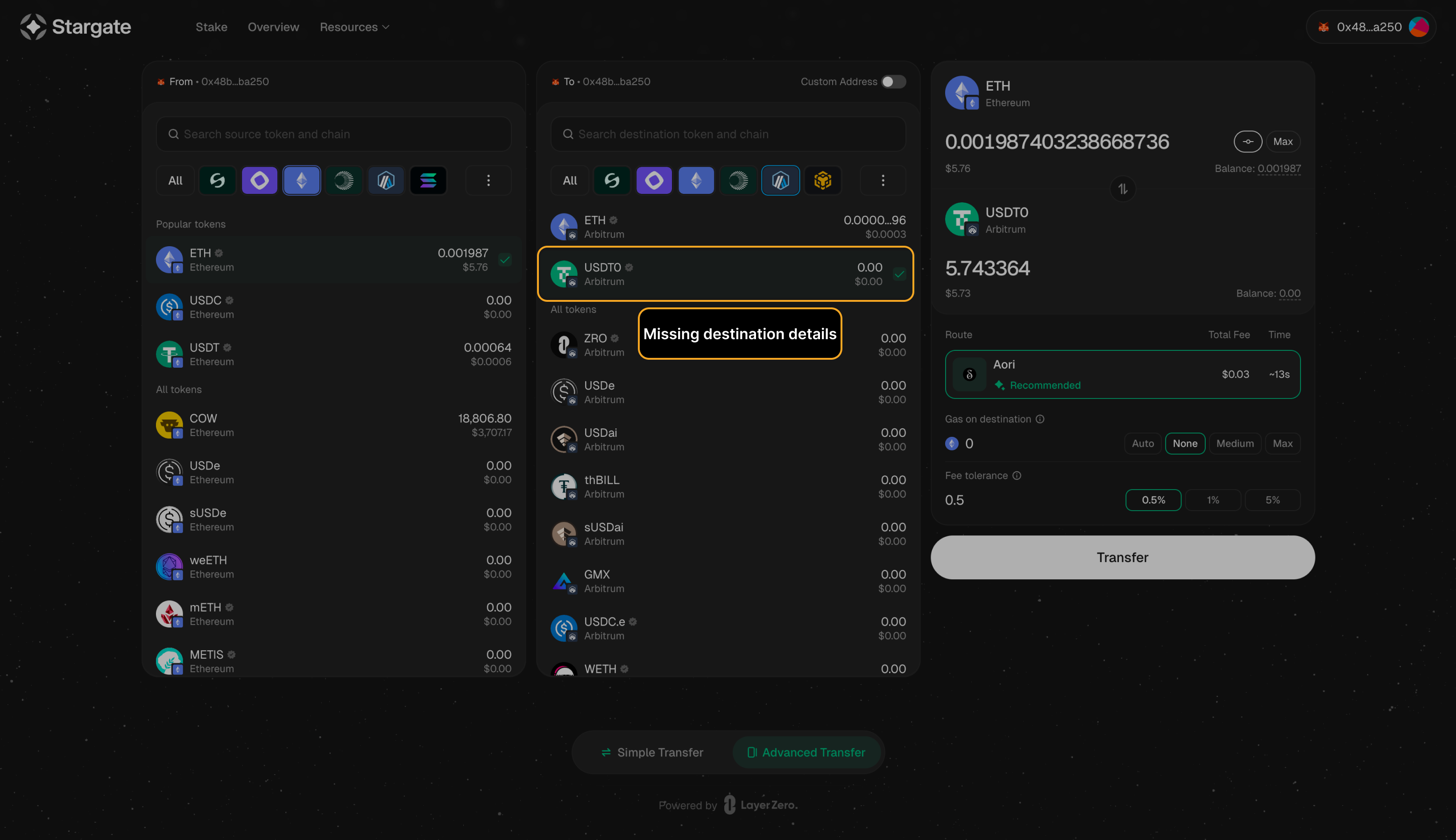

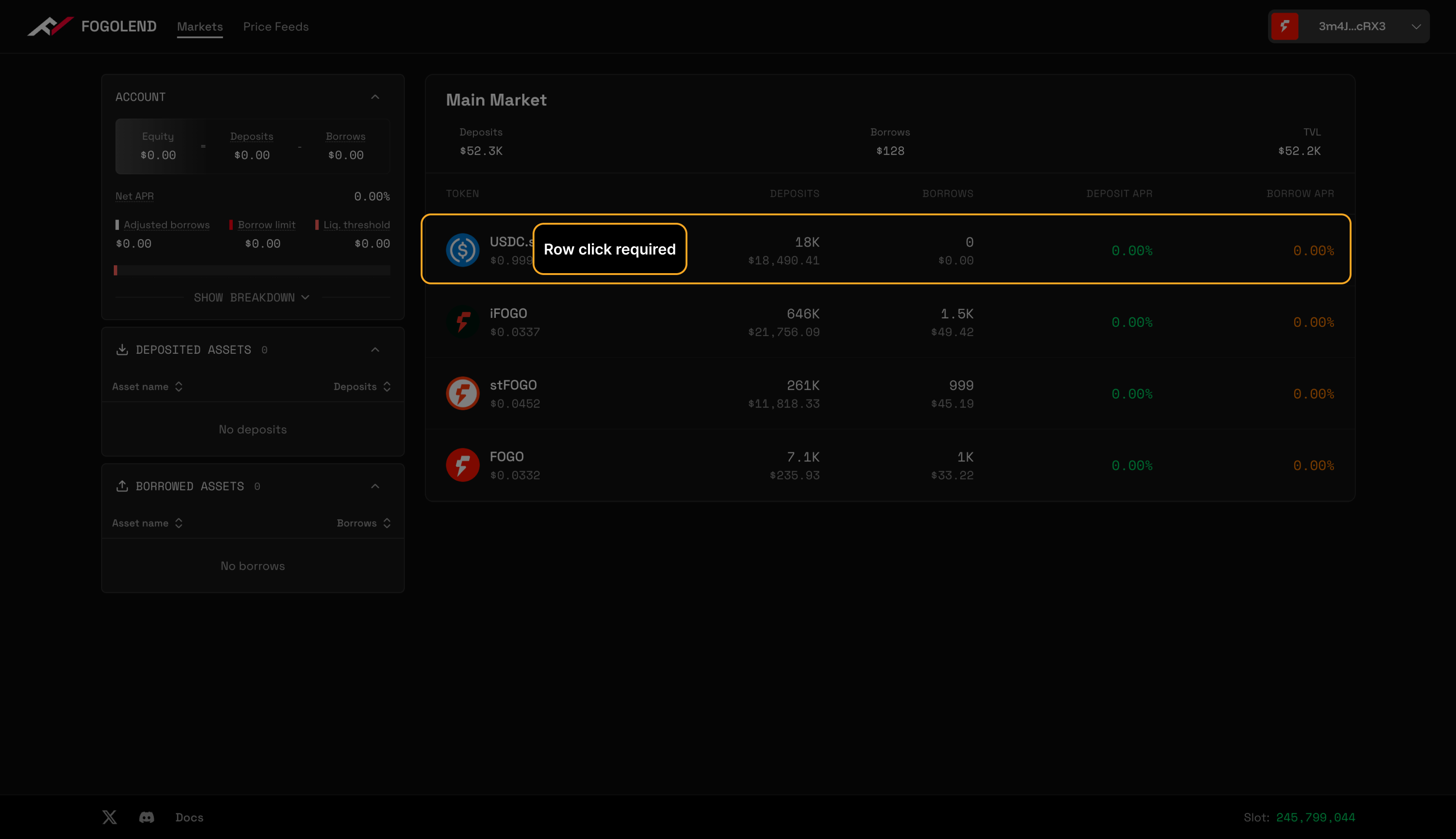

Missing destination validation introduces doubt at exactly the moment commitment is required.

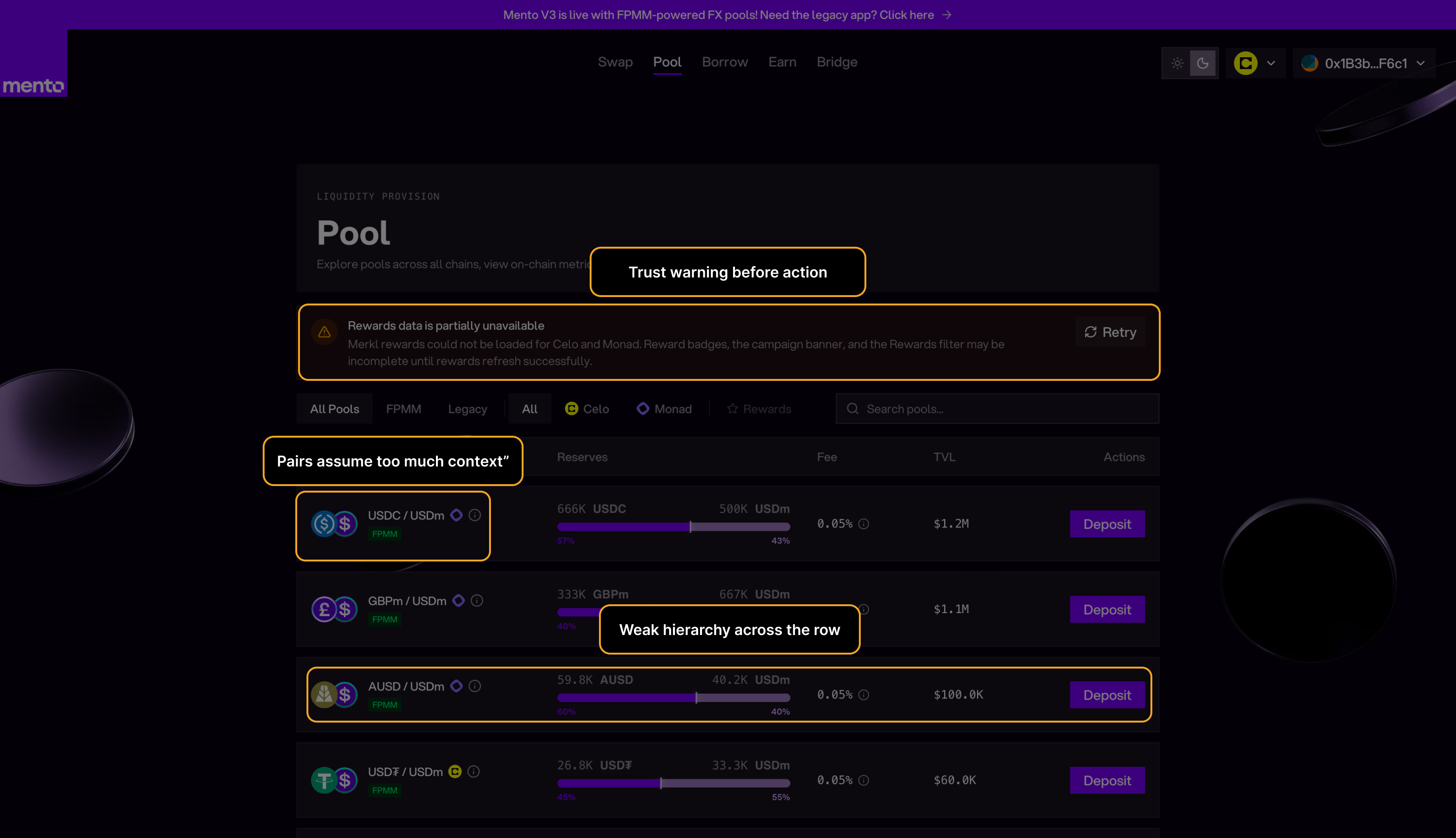

Support Burden

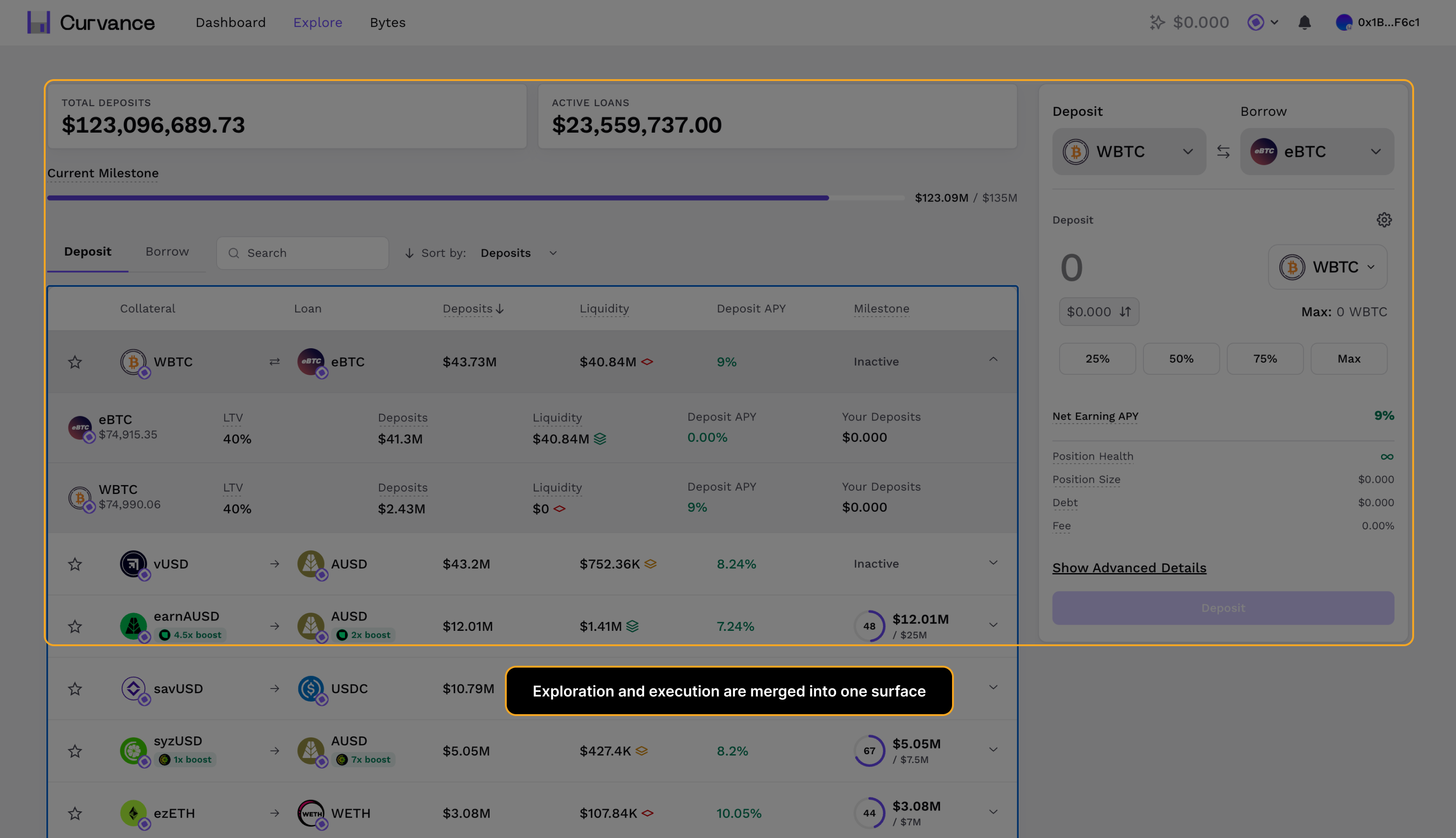

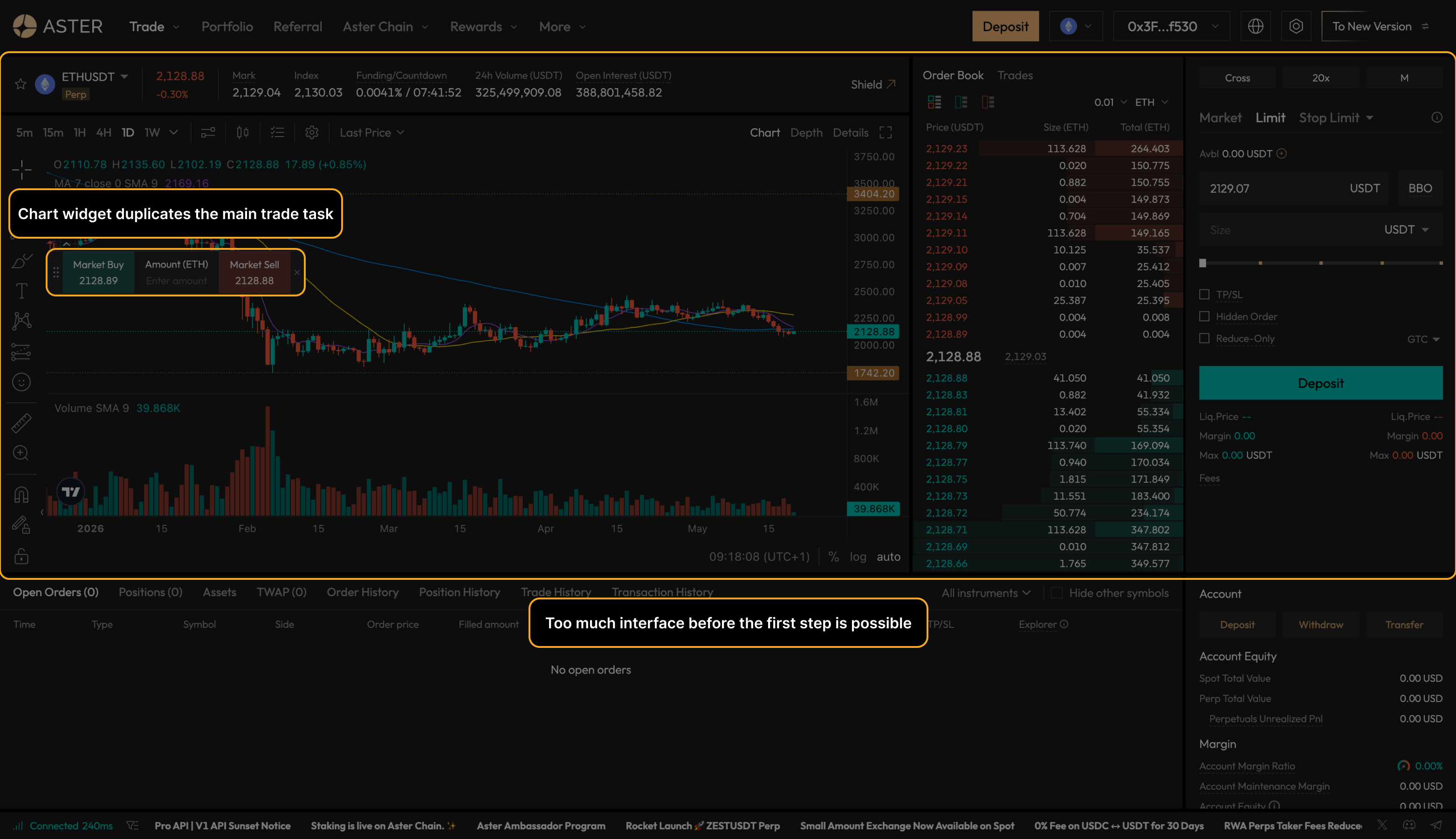

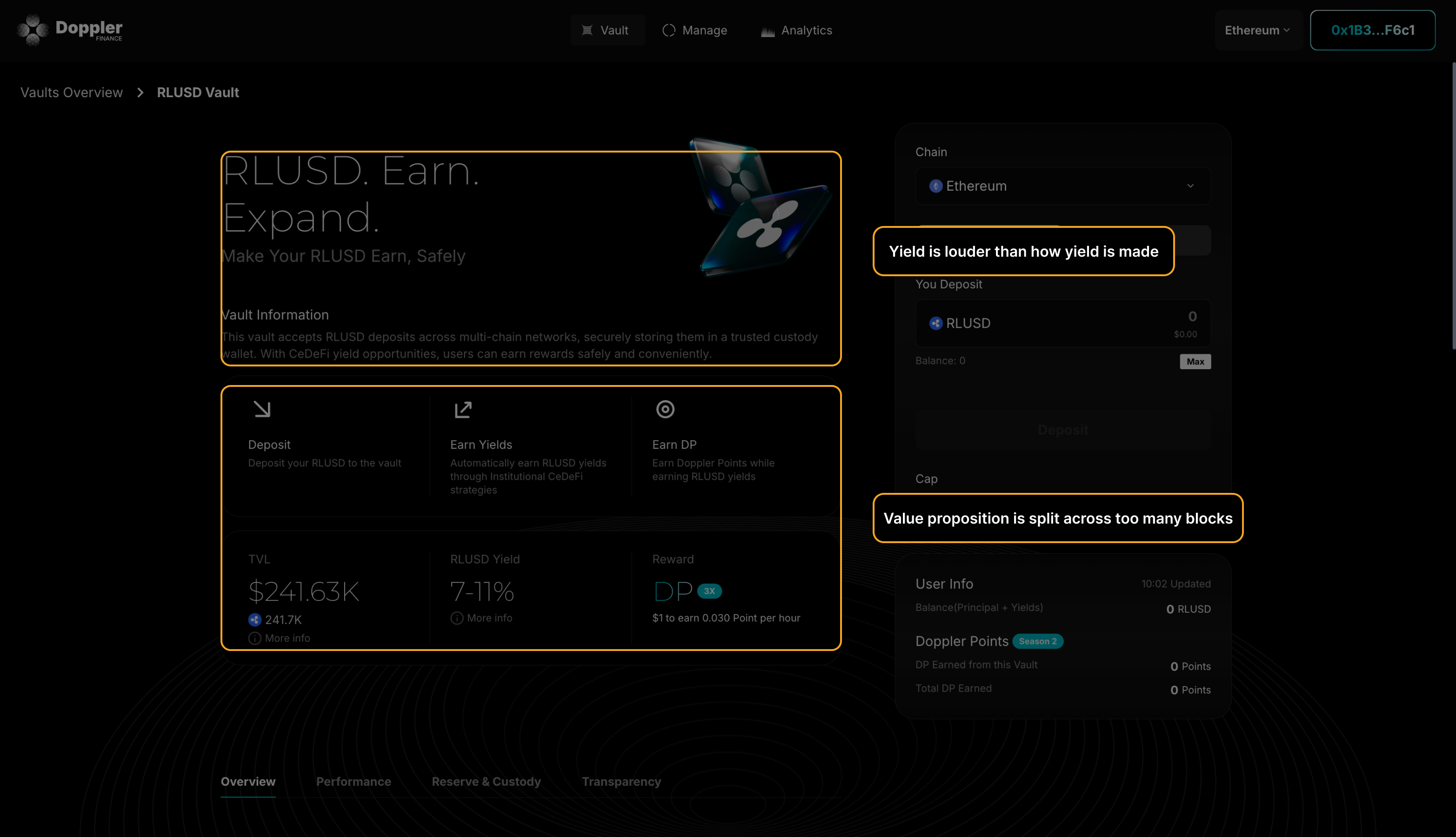

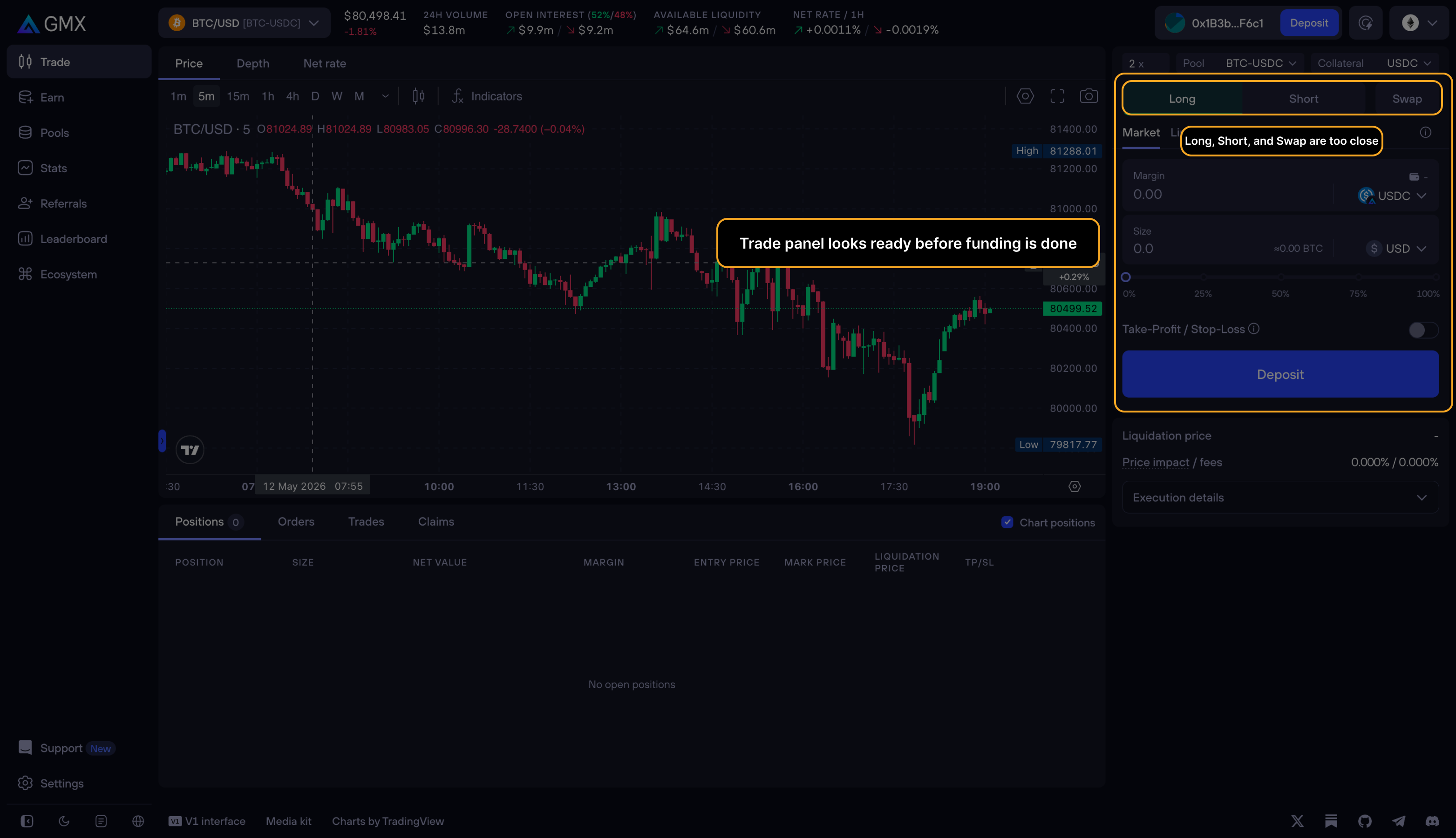

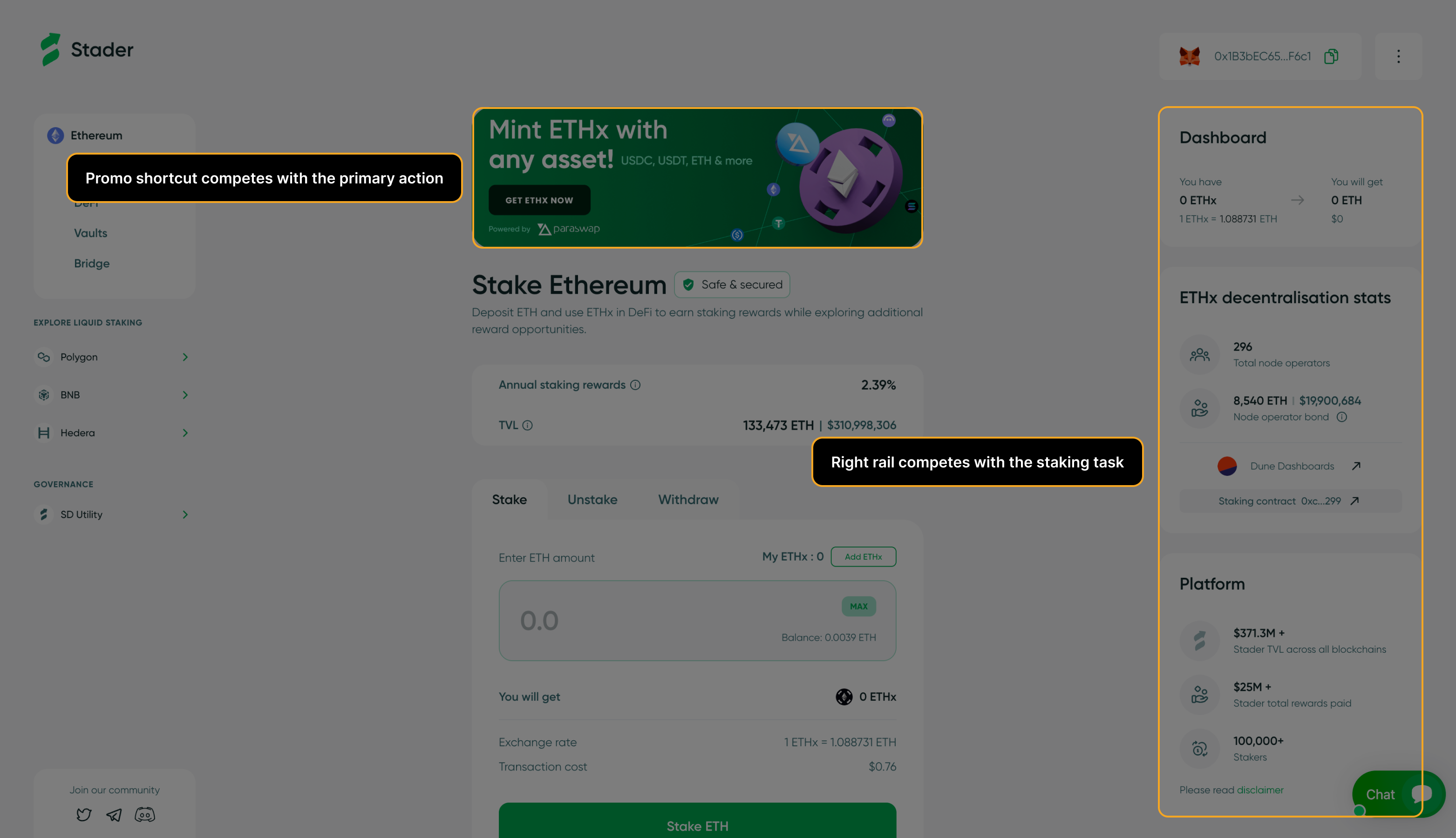

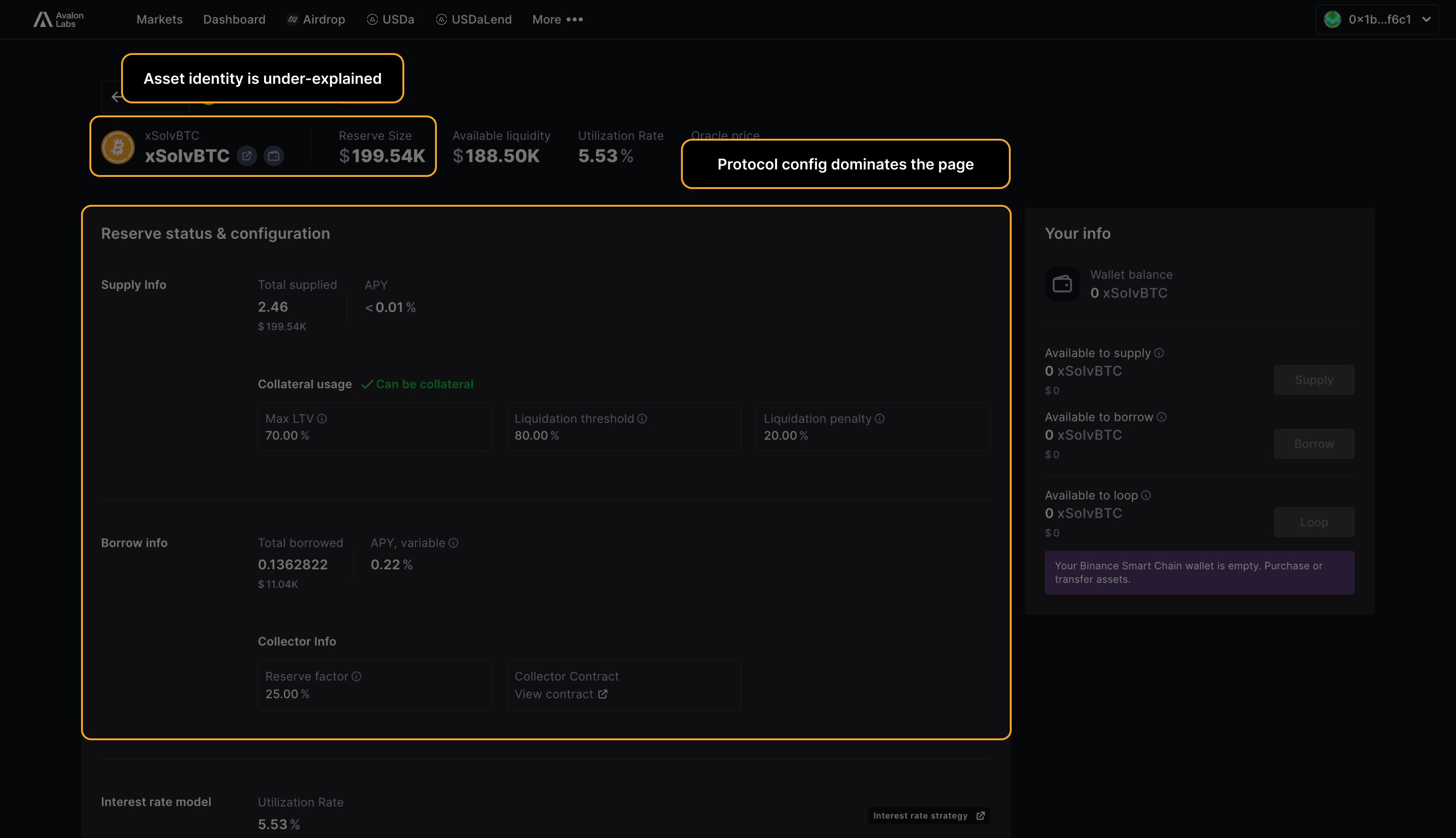

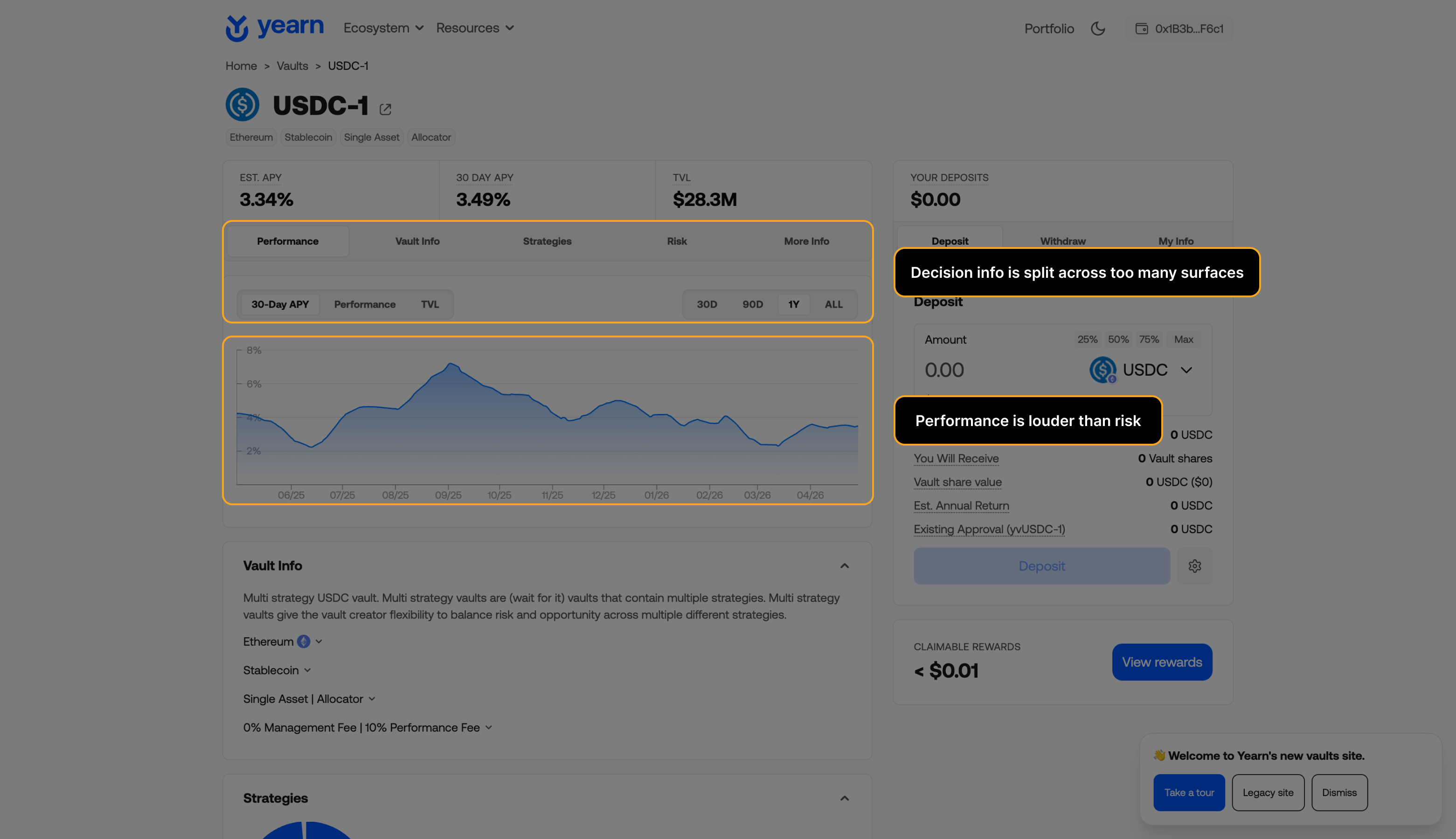

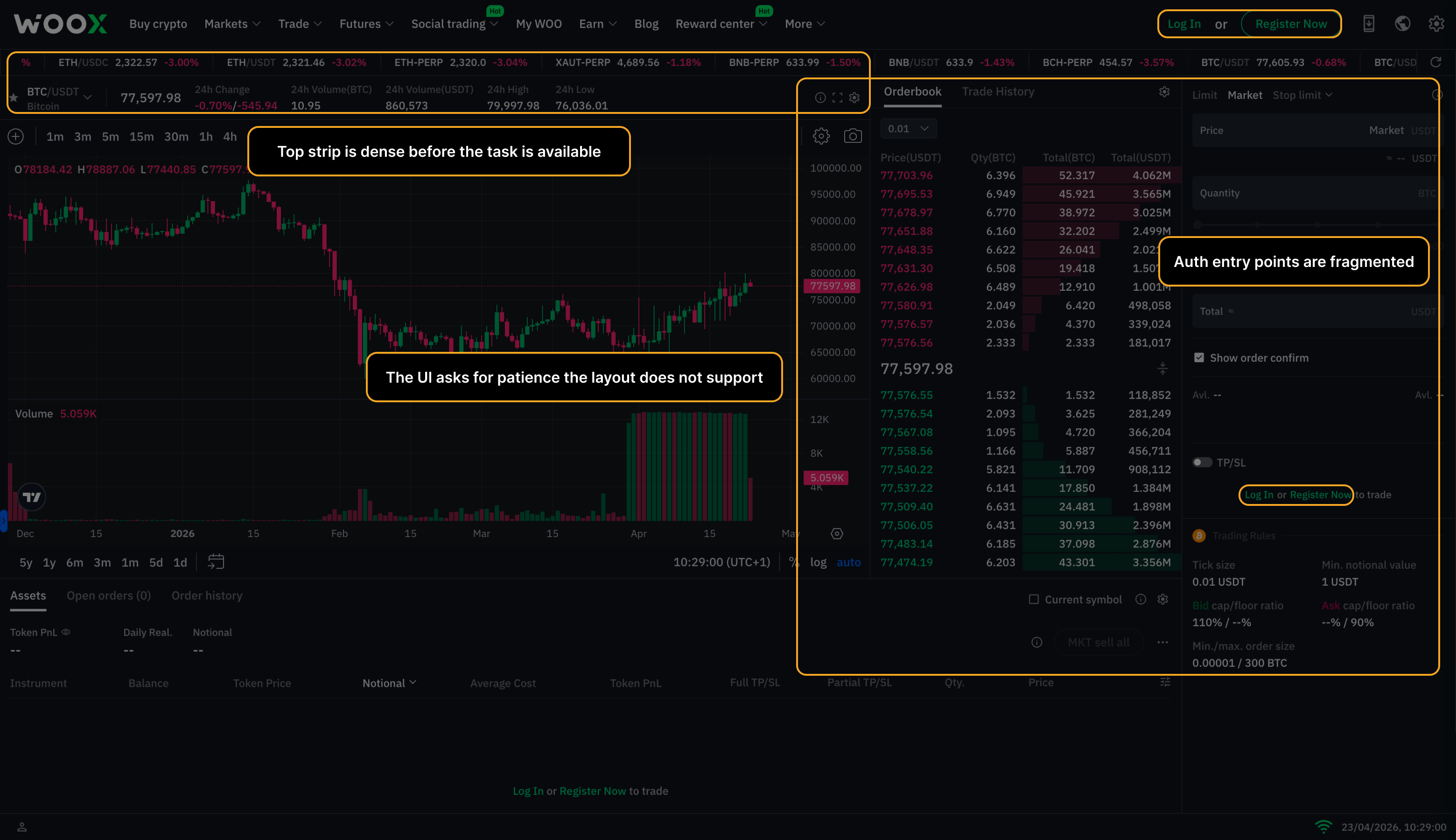

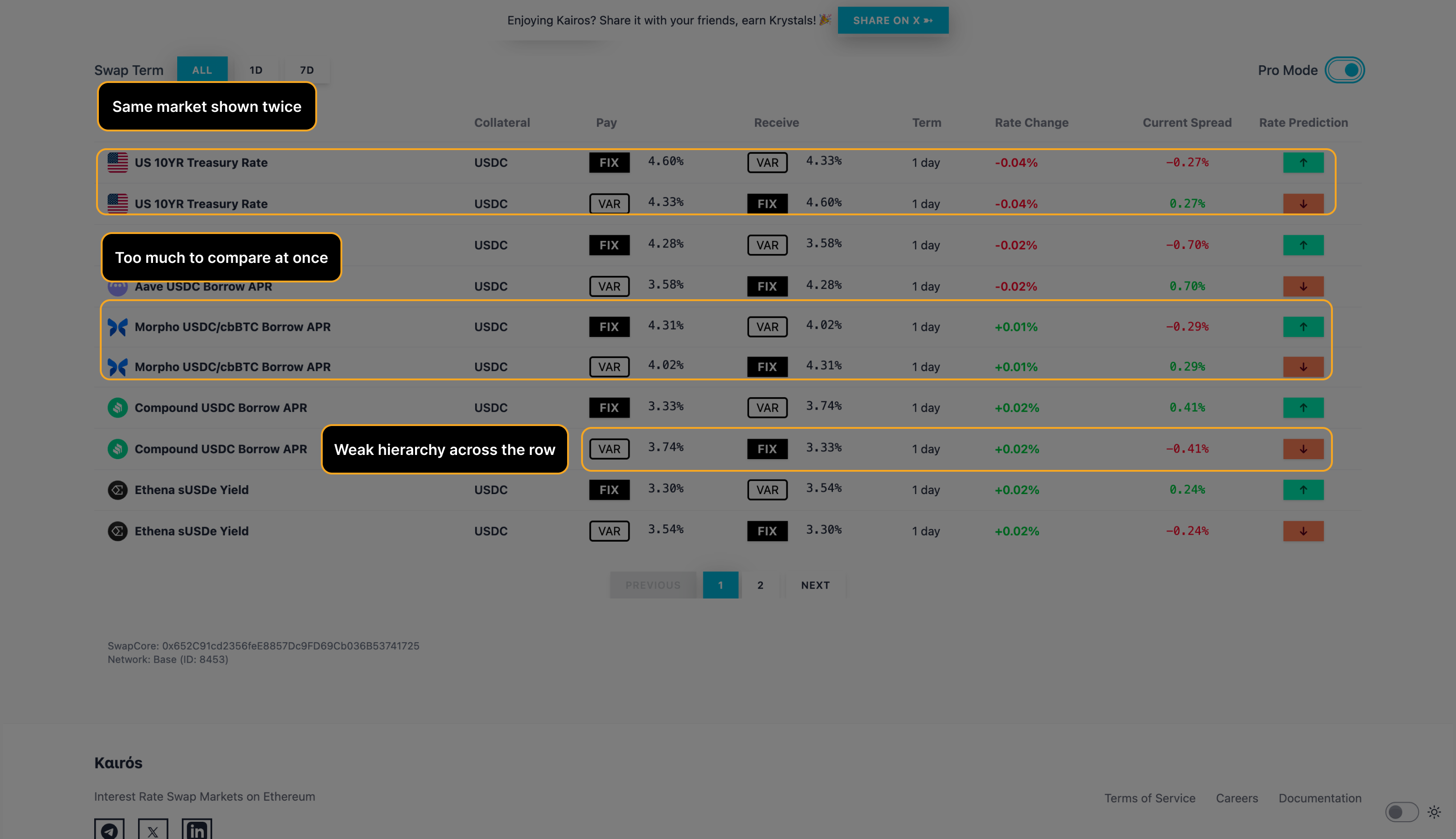

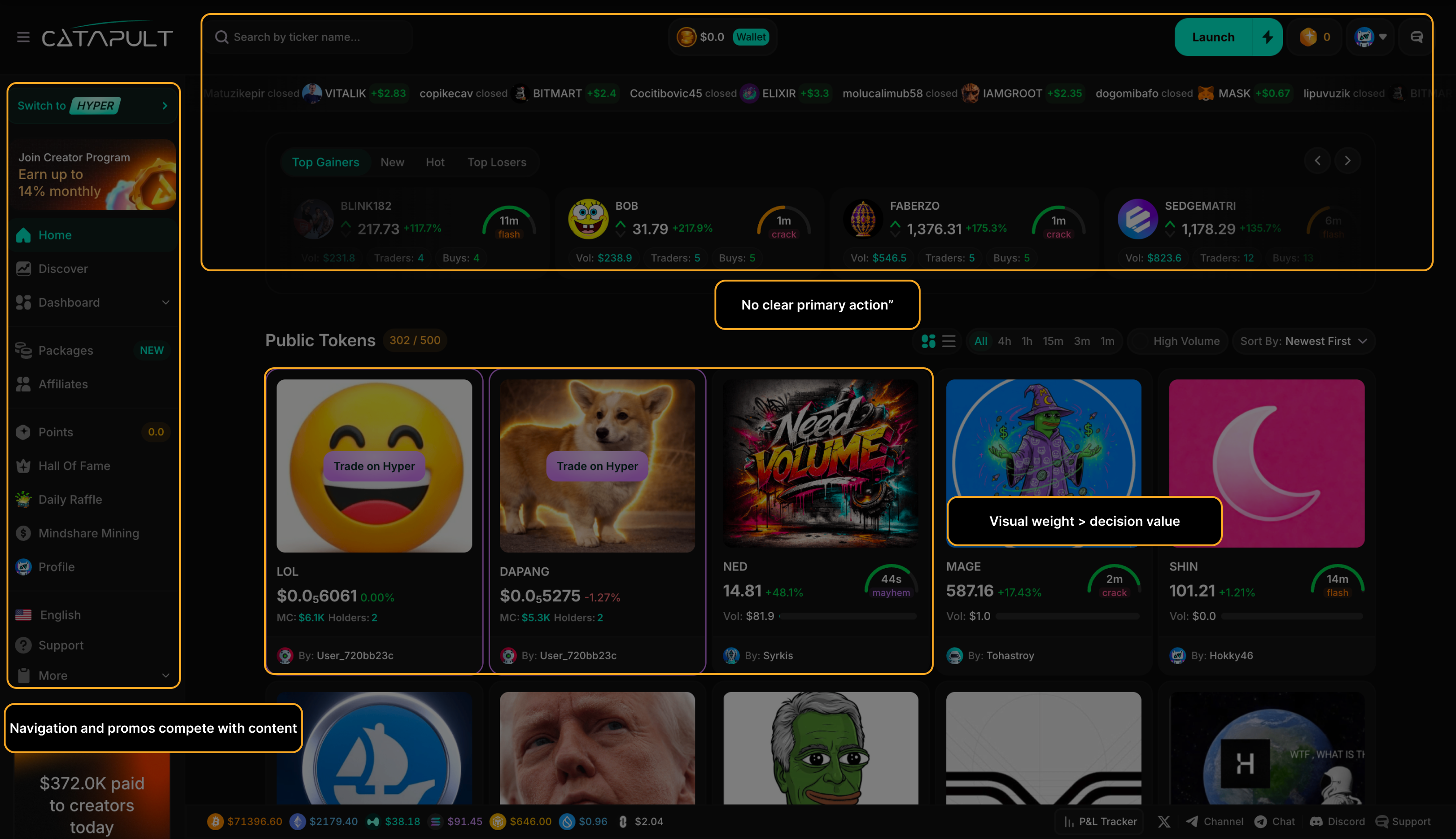

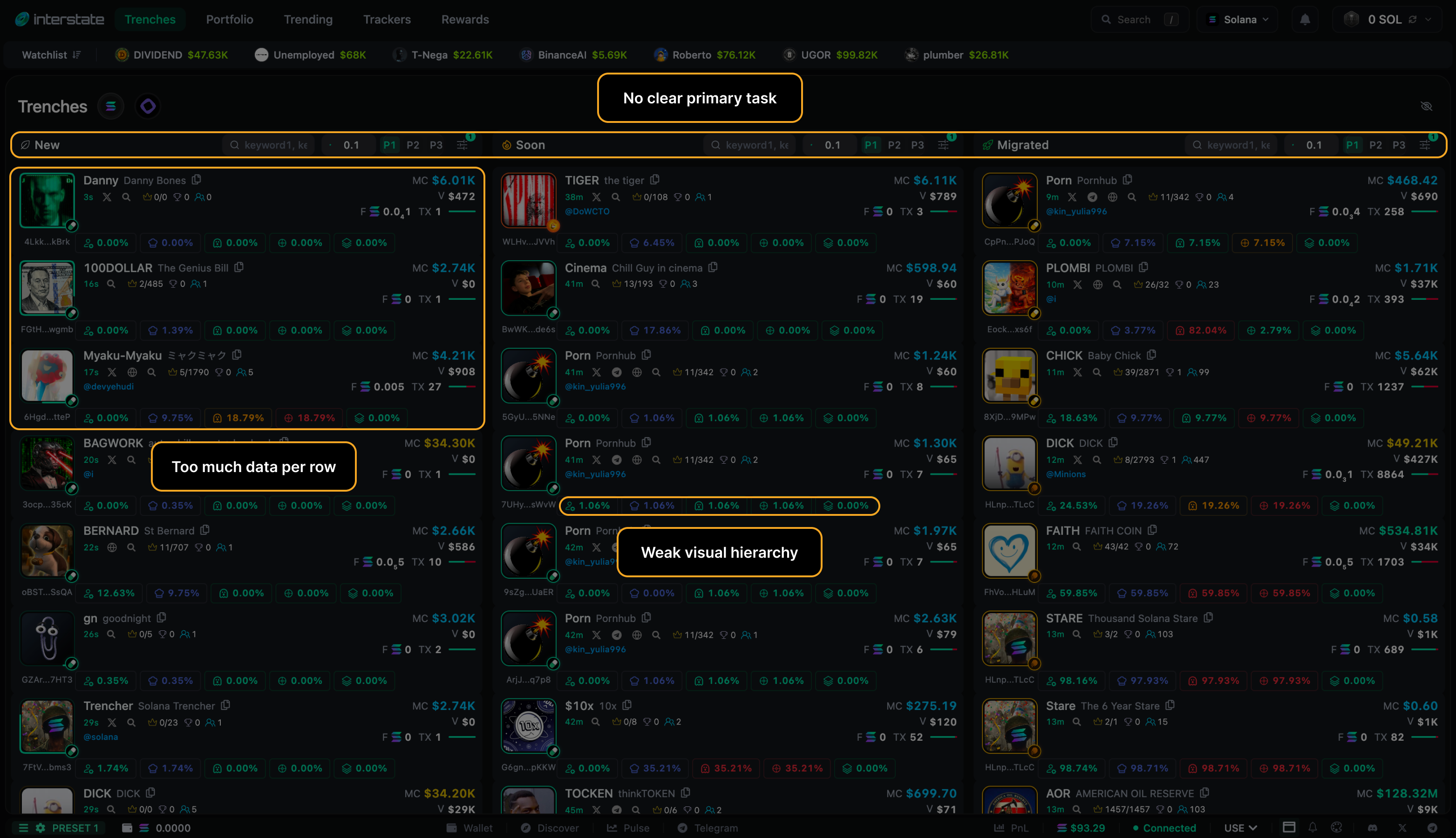

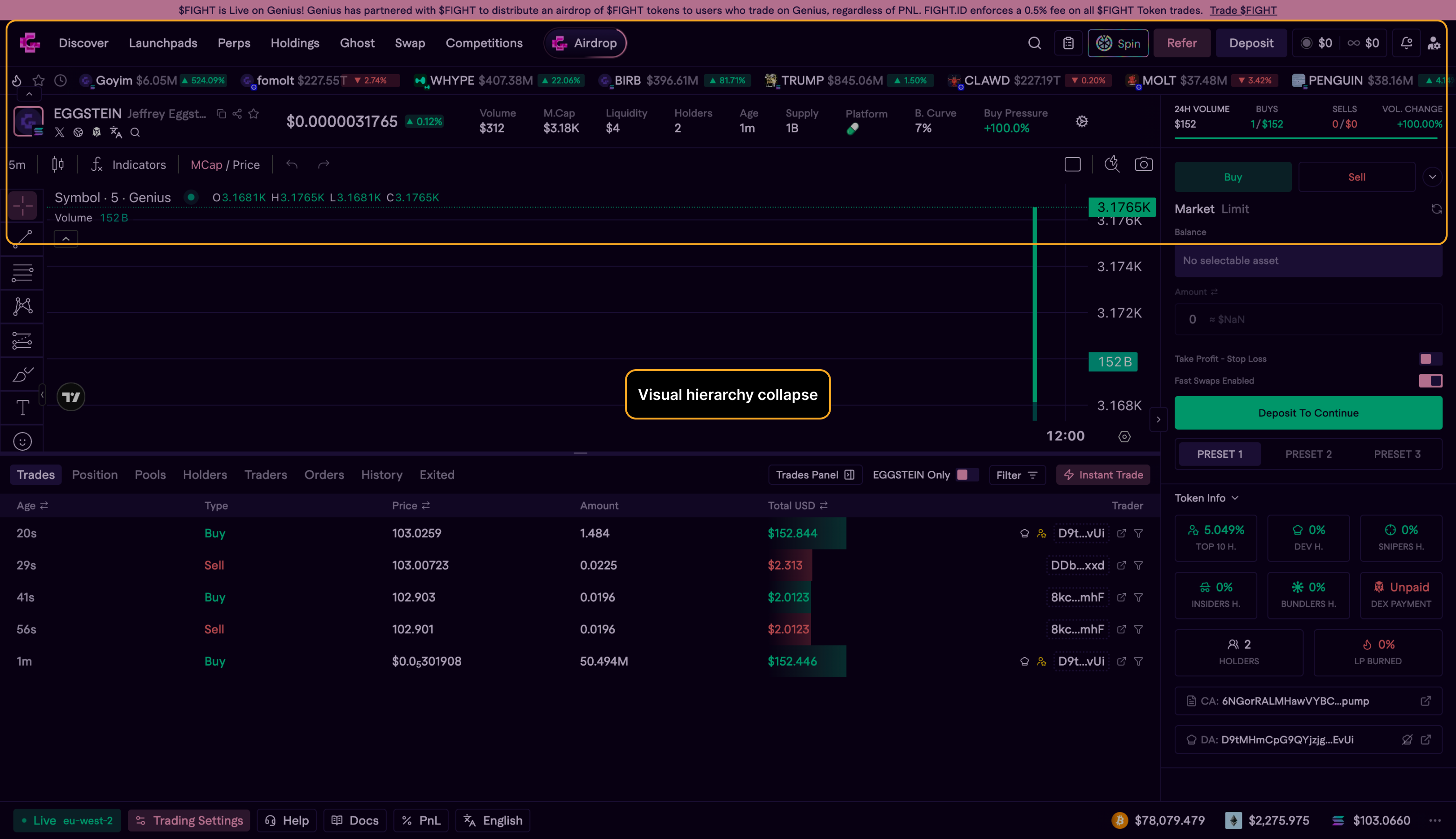

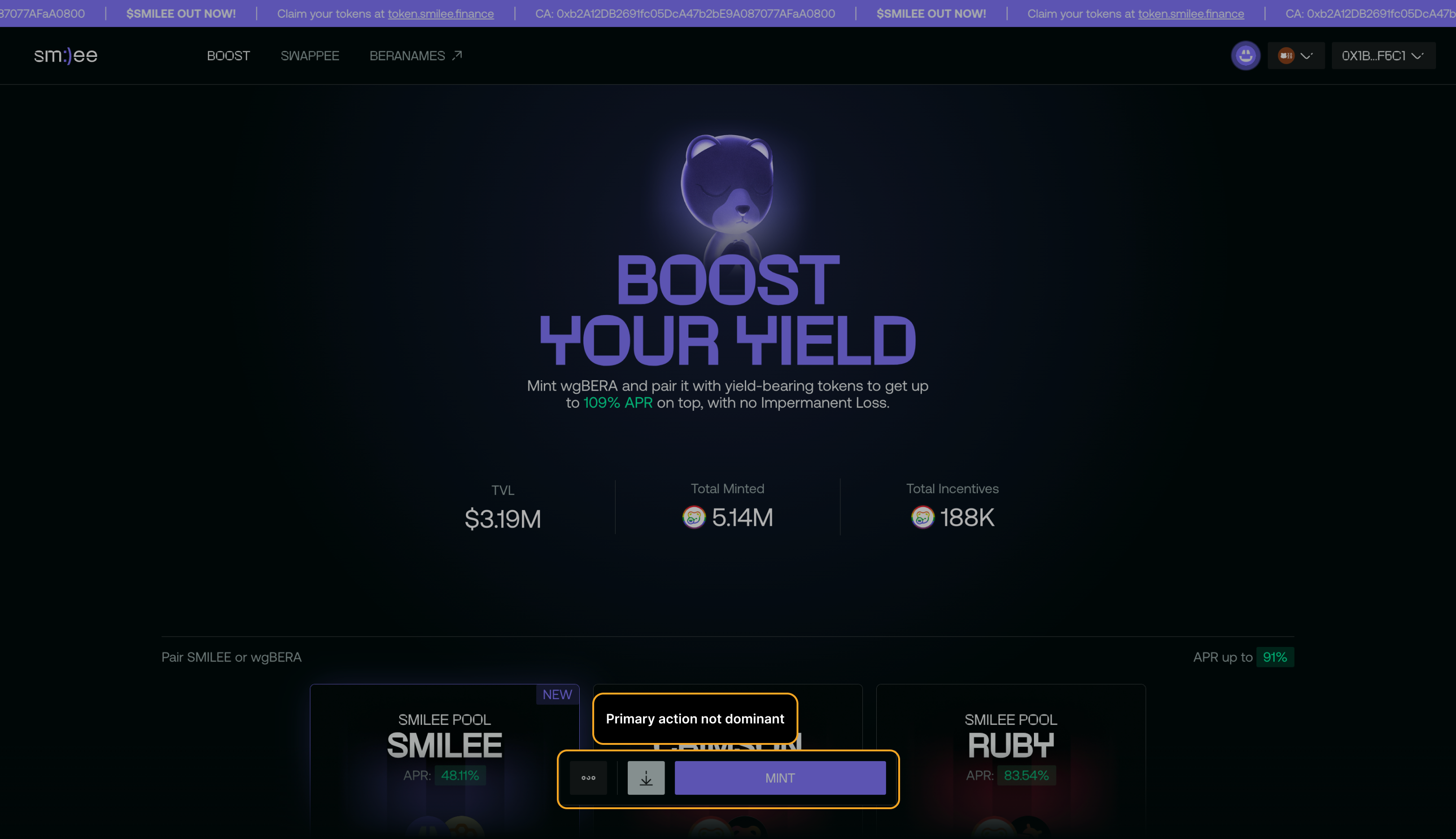

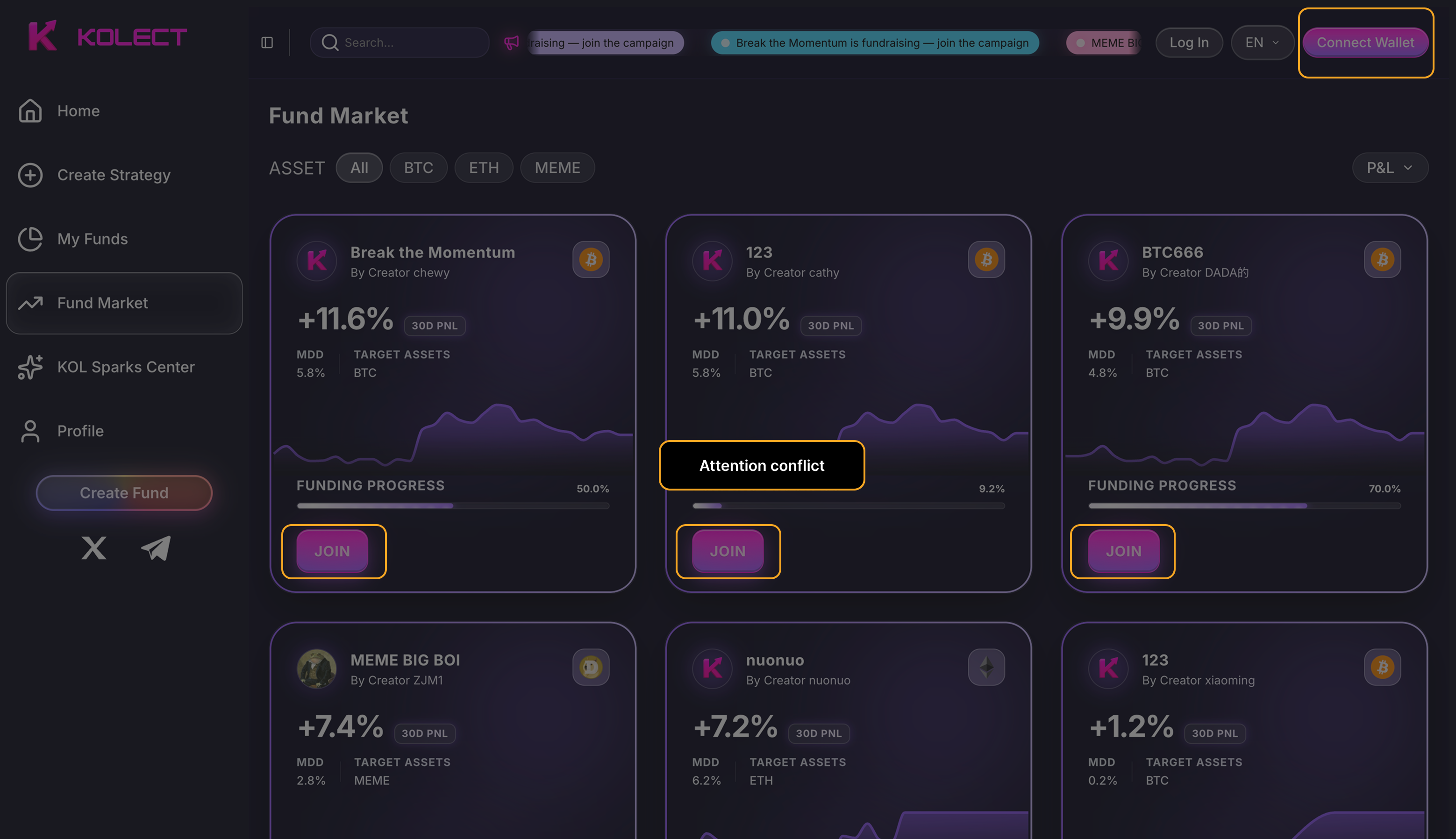

Visual hierarchy collapse forces scanning instead of deciding, raising abandonment risk at peak intent.

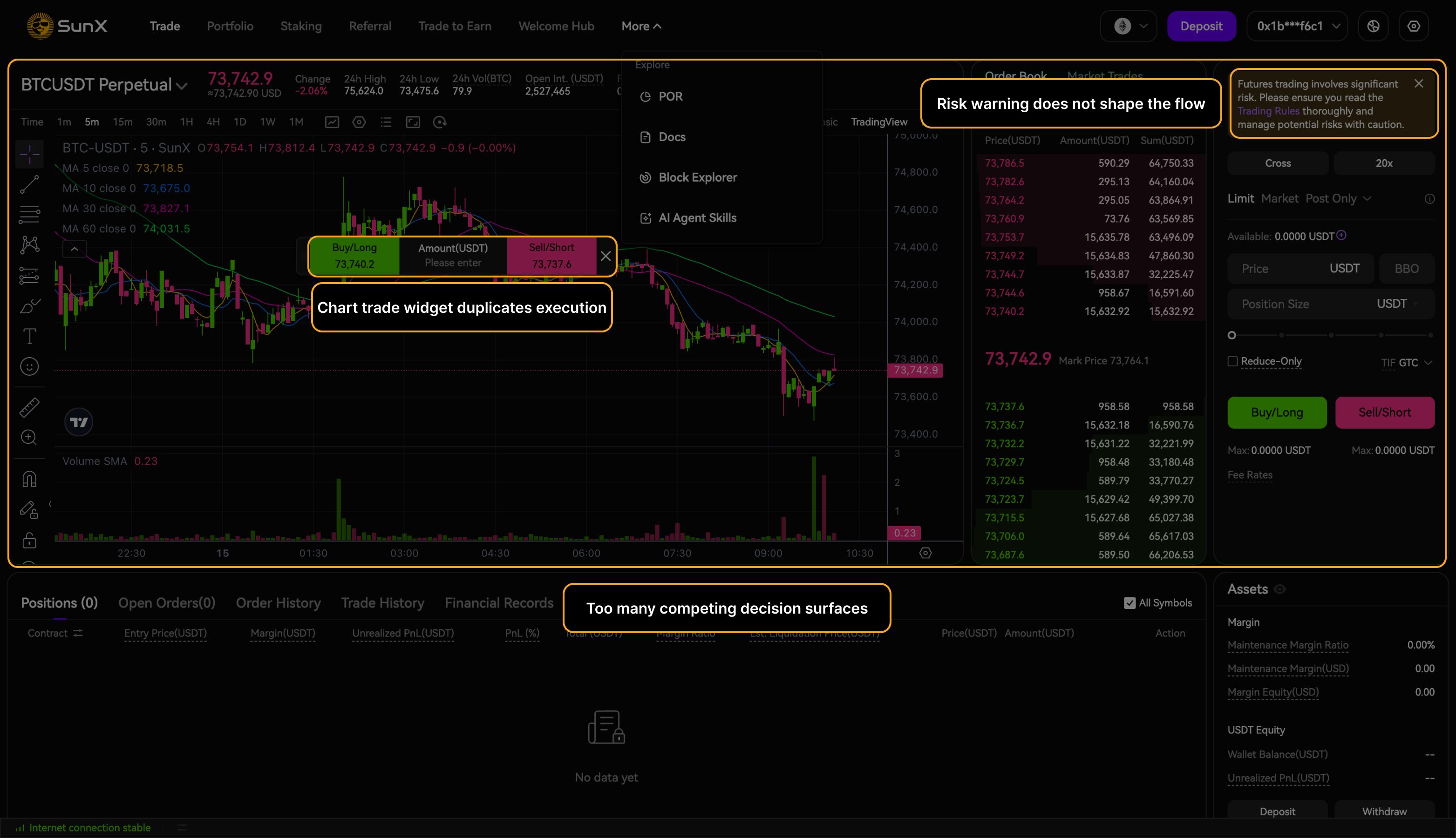

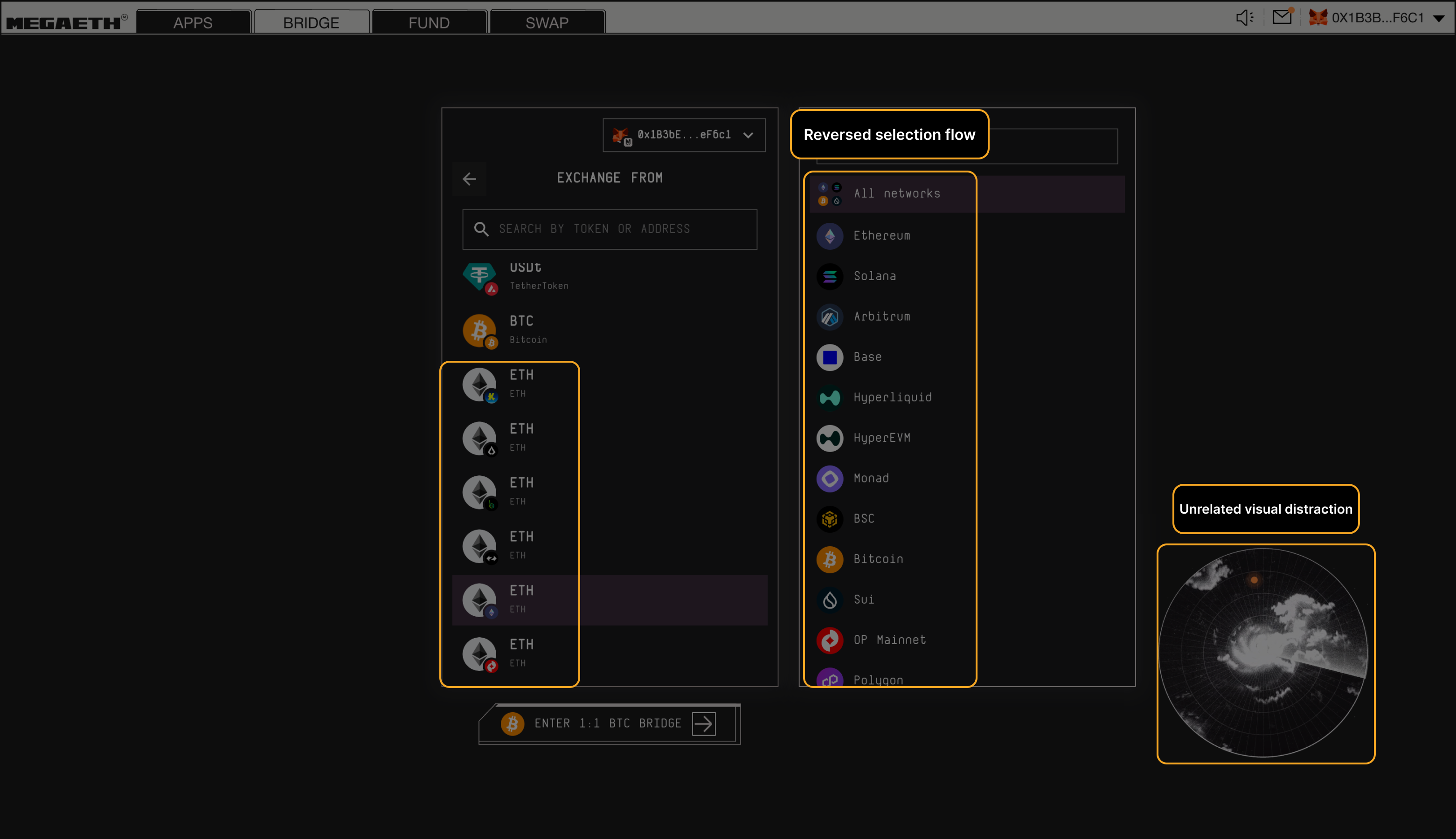

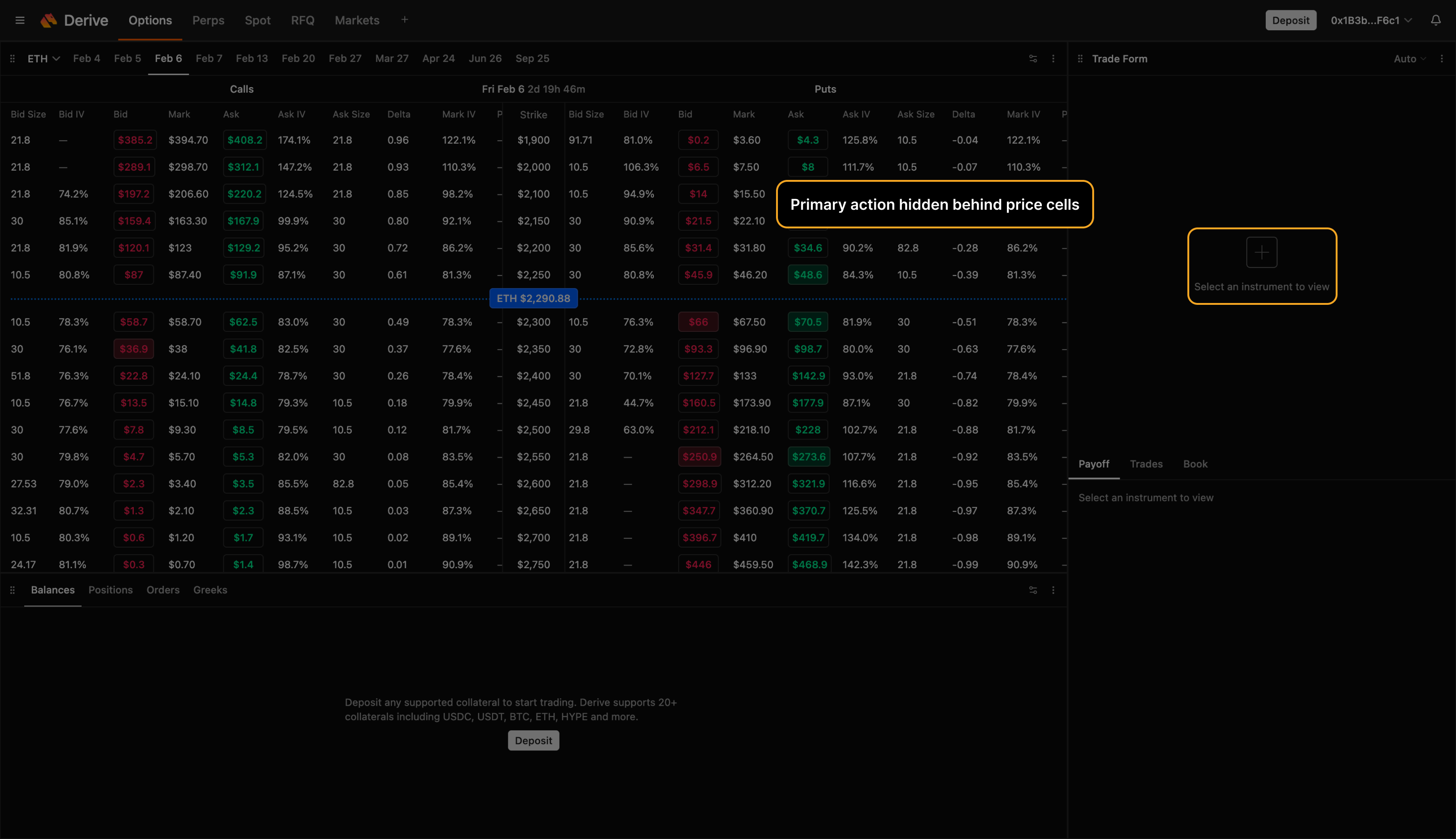

Mis-execution Risk

Each issue is tagged by type, severity, and where it appears in the flow.

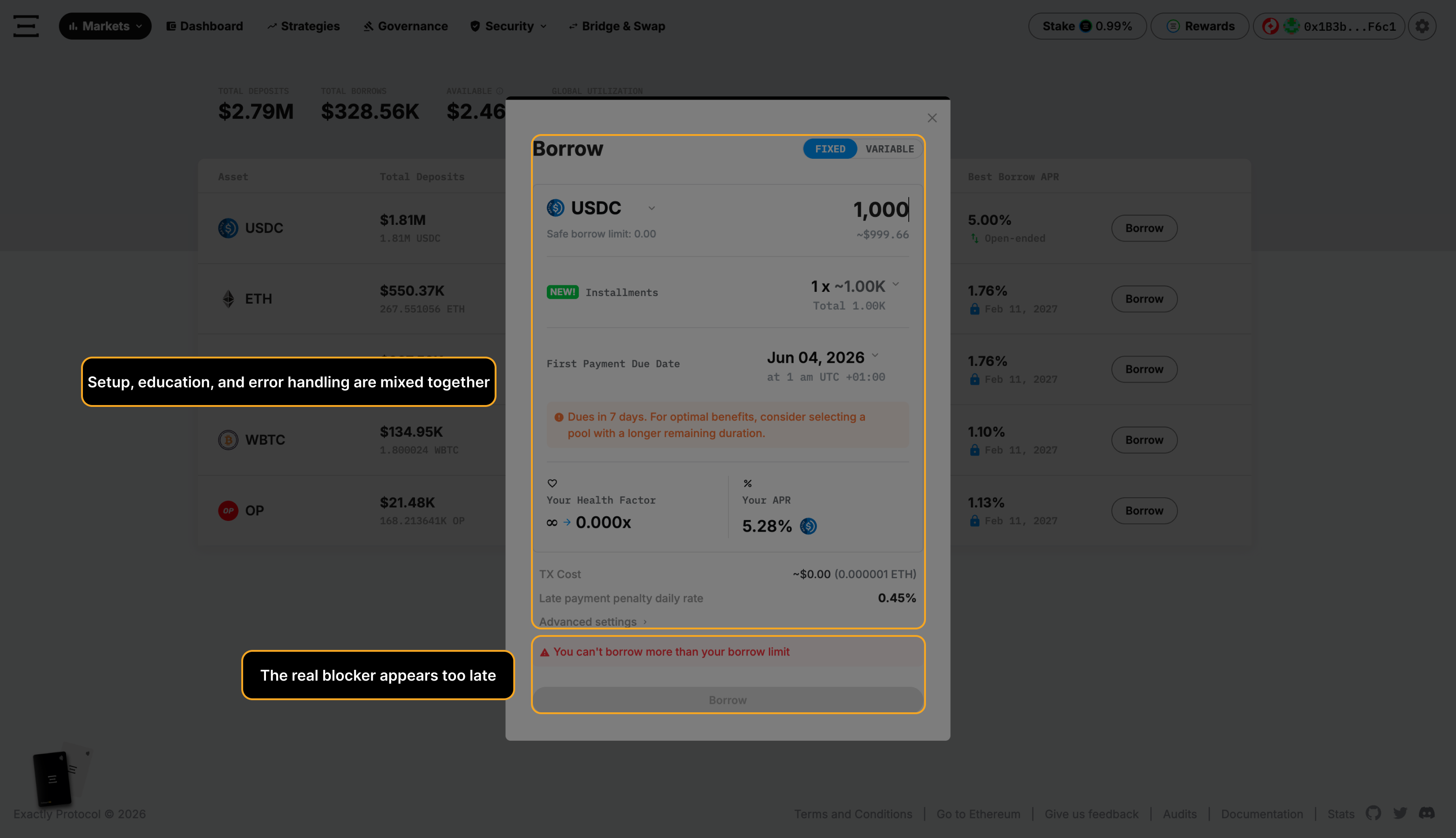

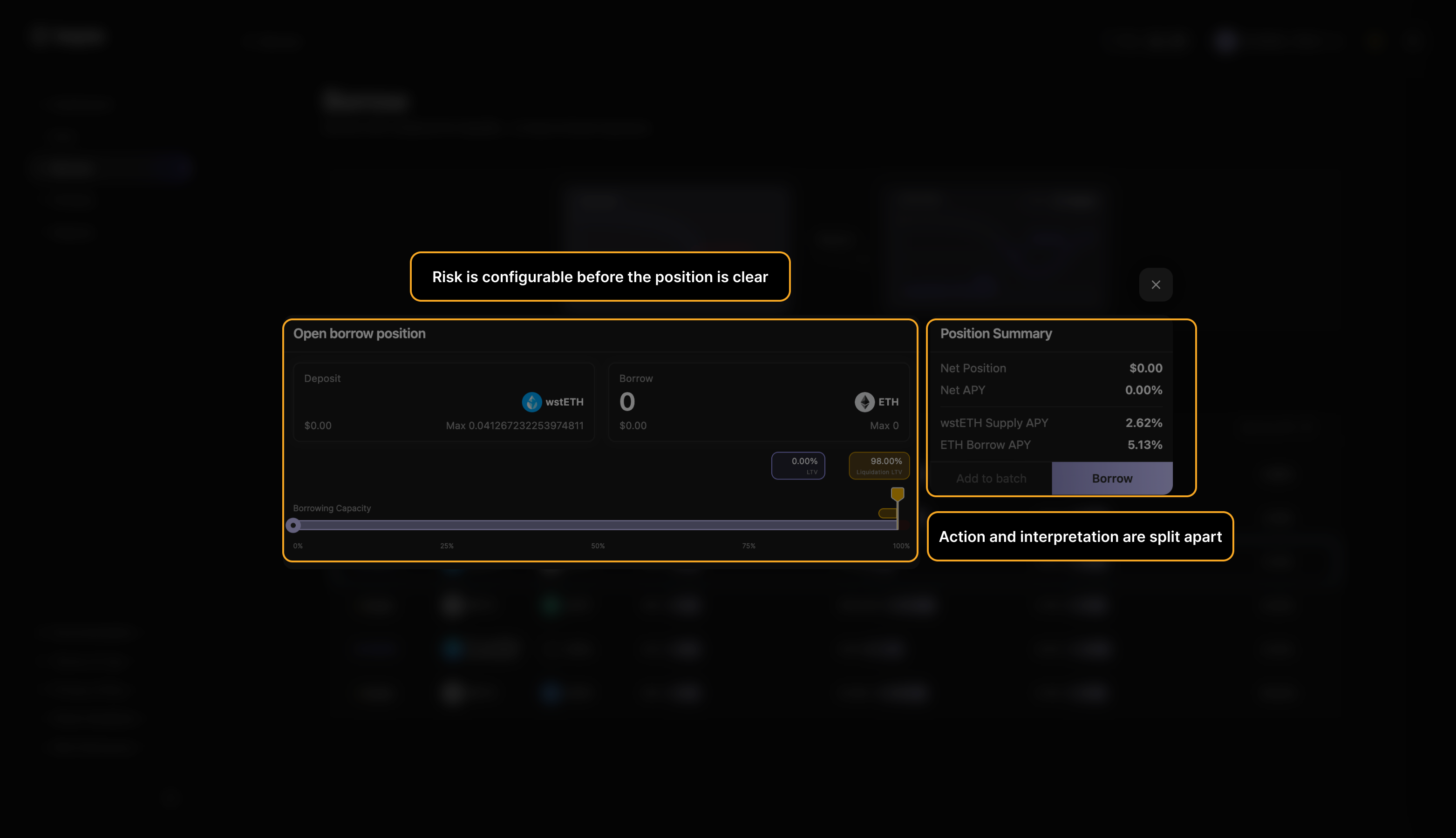

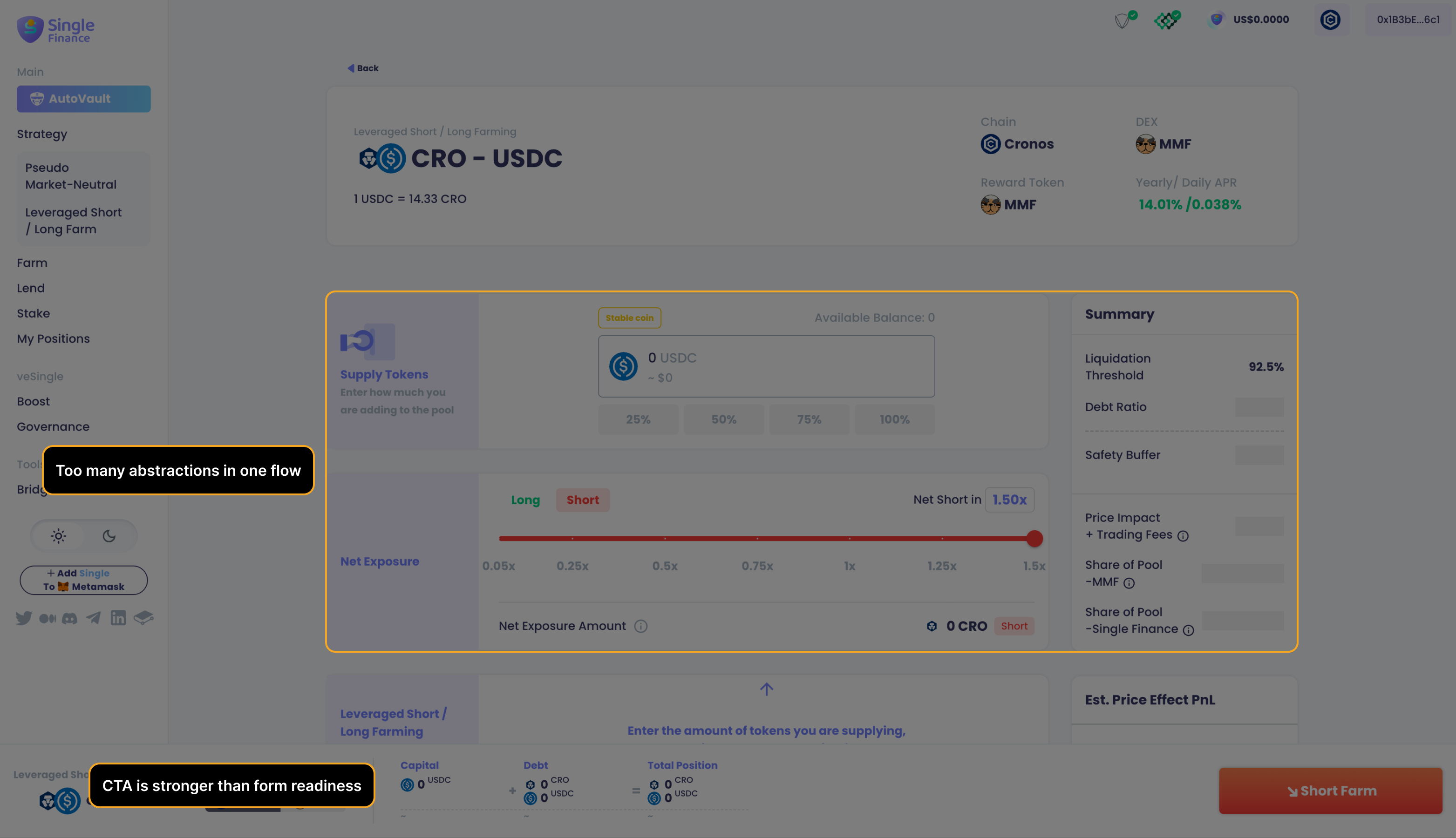

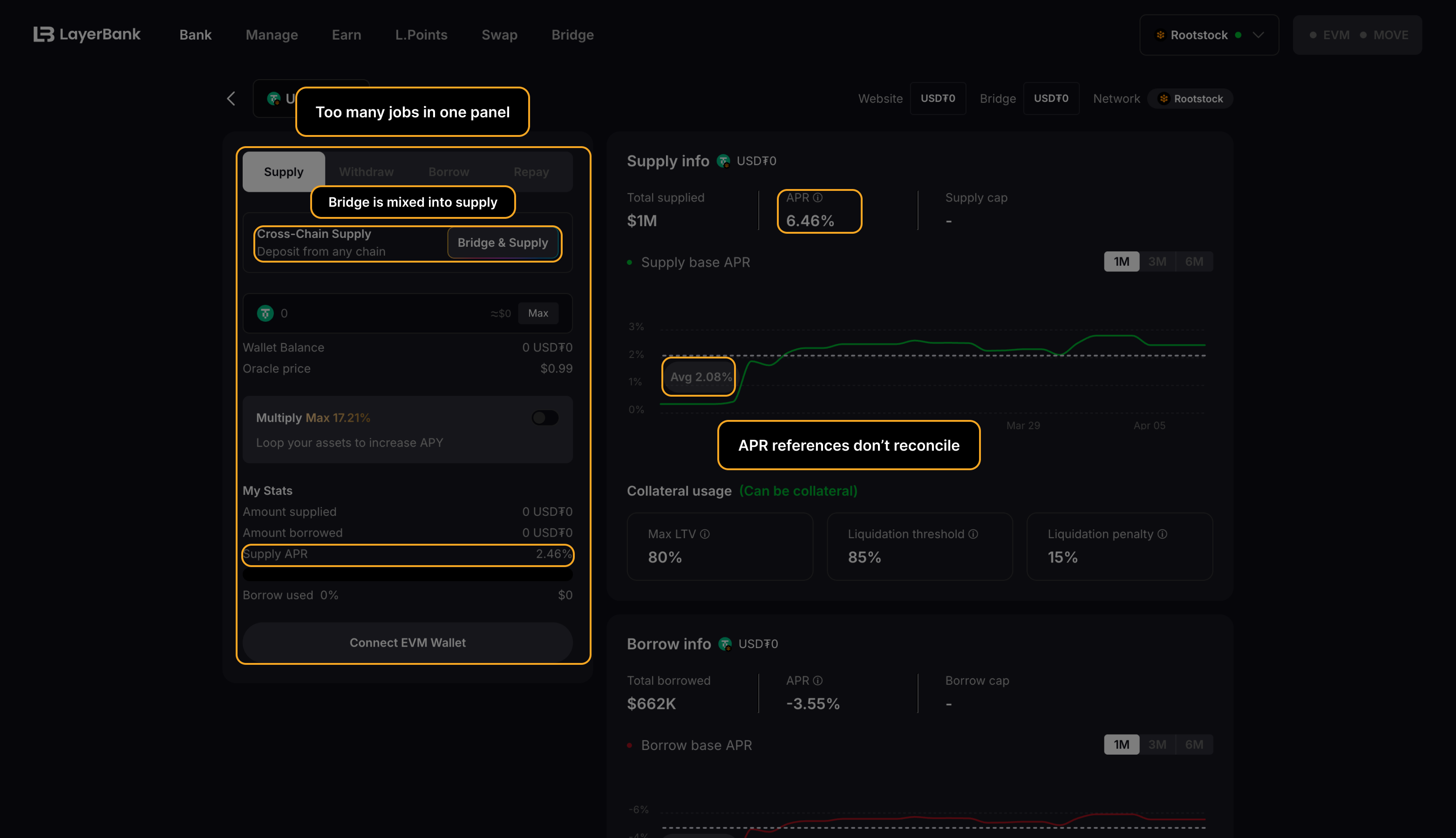

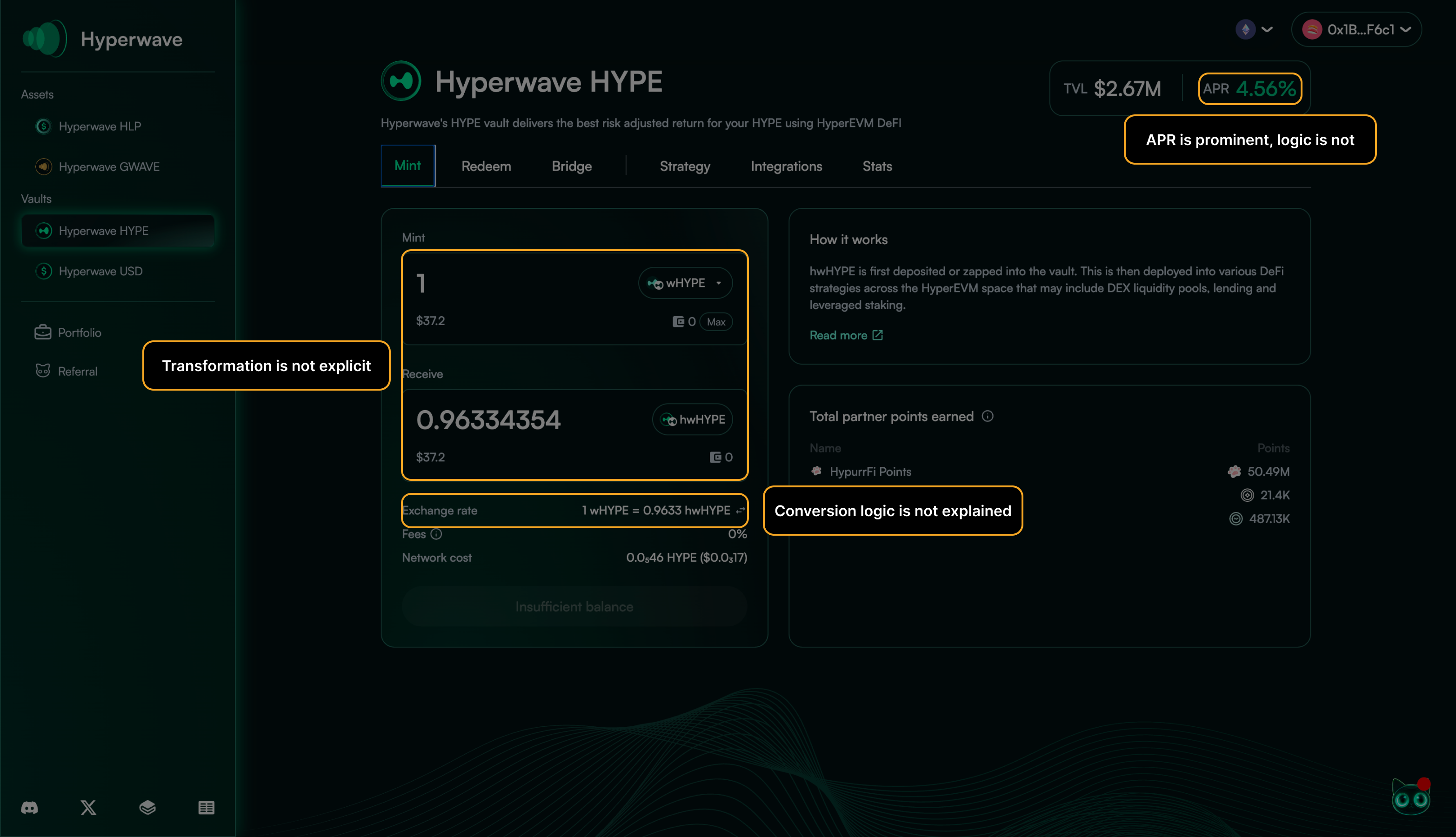

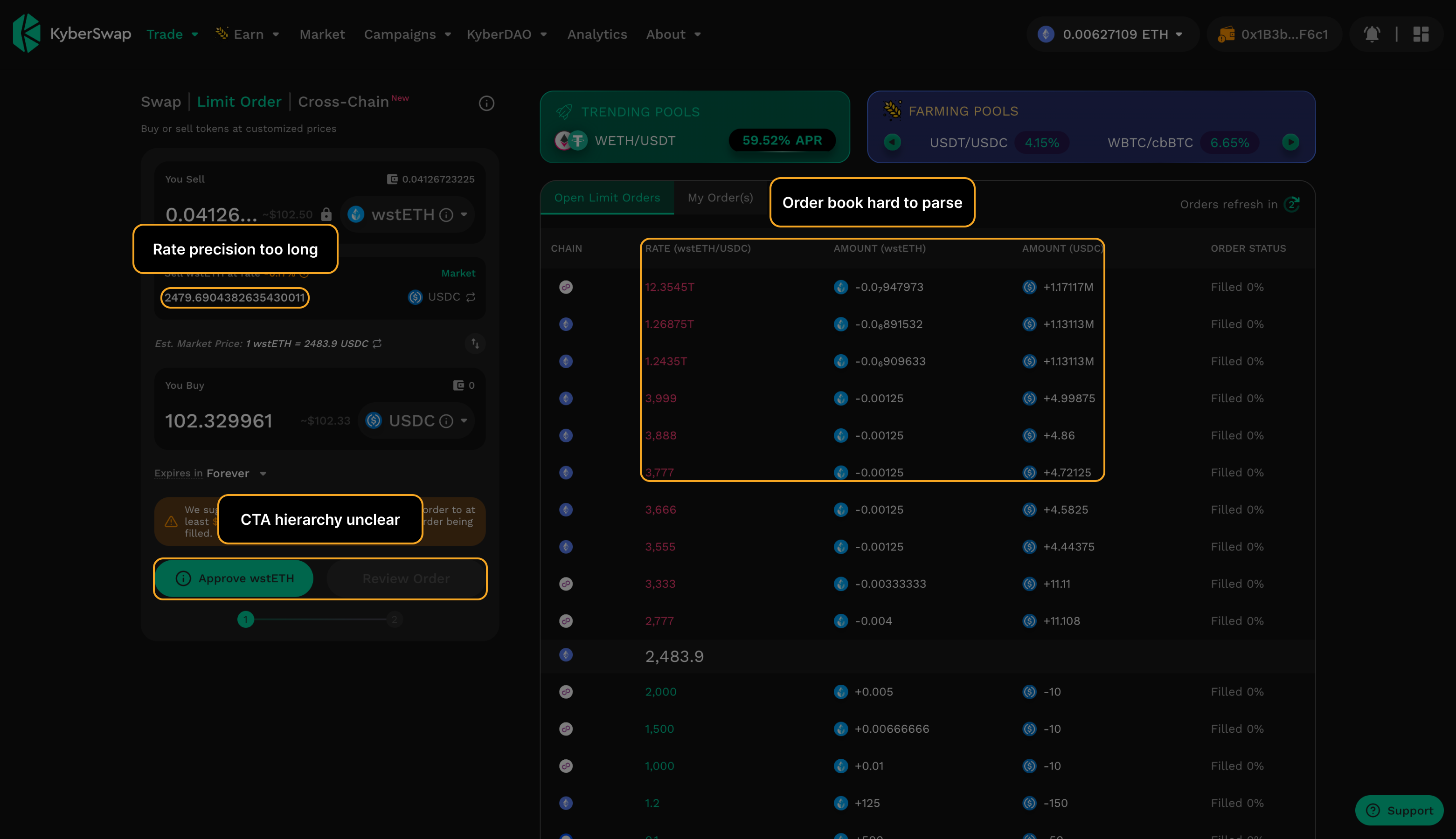

Contract details, fees, or slippage arrive after the user has already formed intent, or not at all.

Swap routes that surface price impact only after confirmation is triggered.

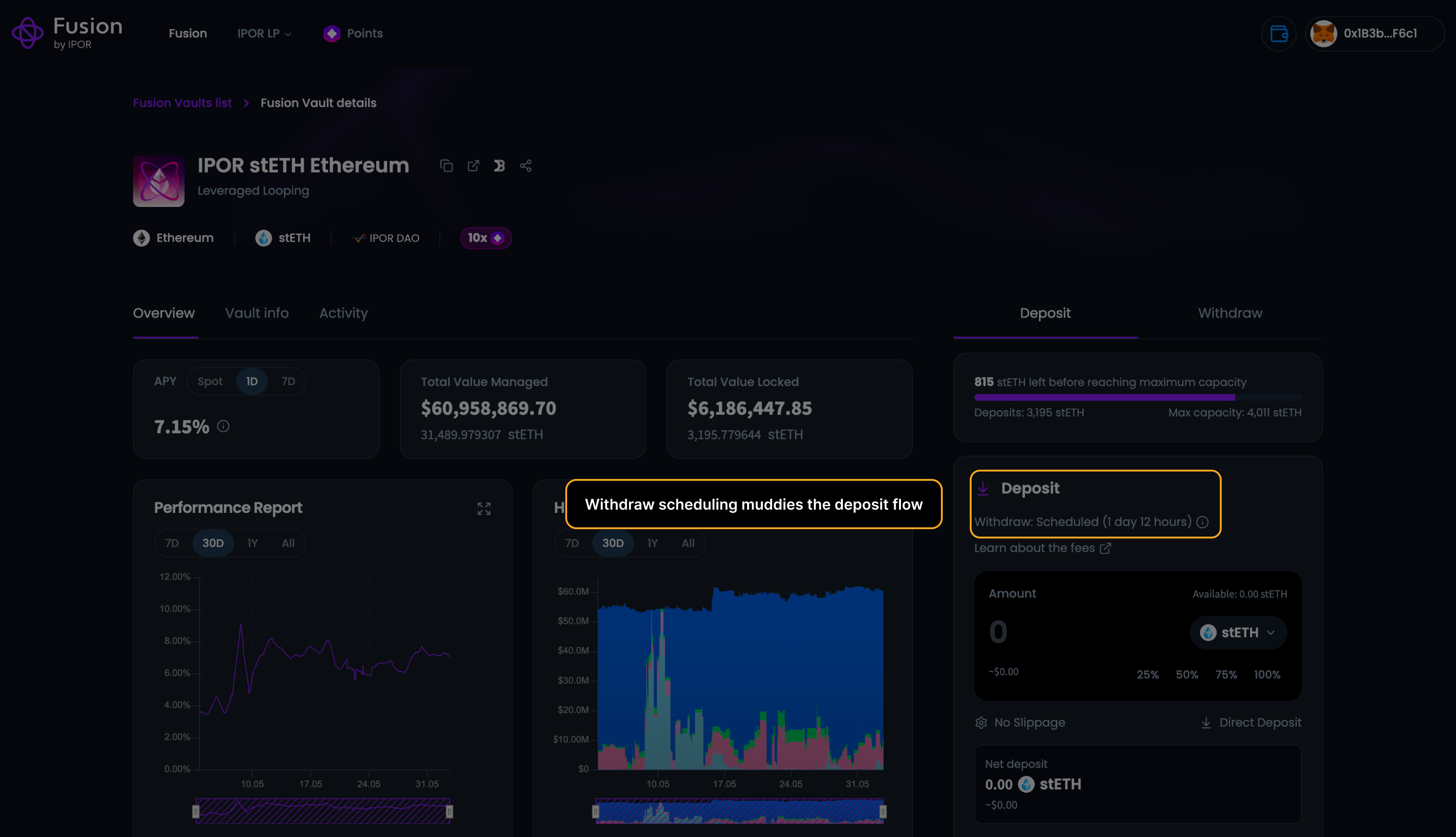

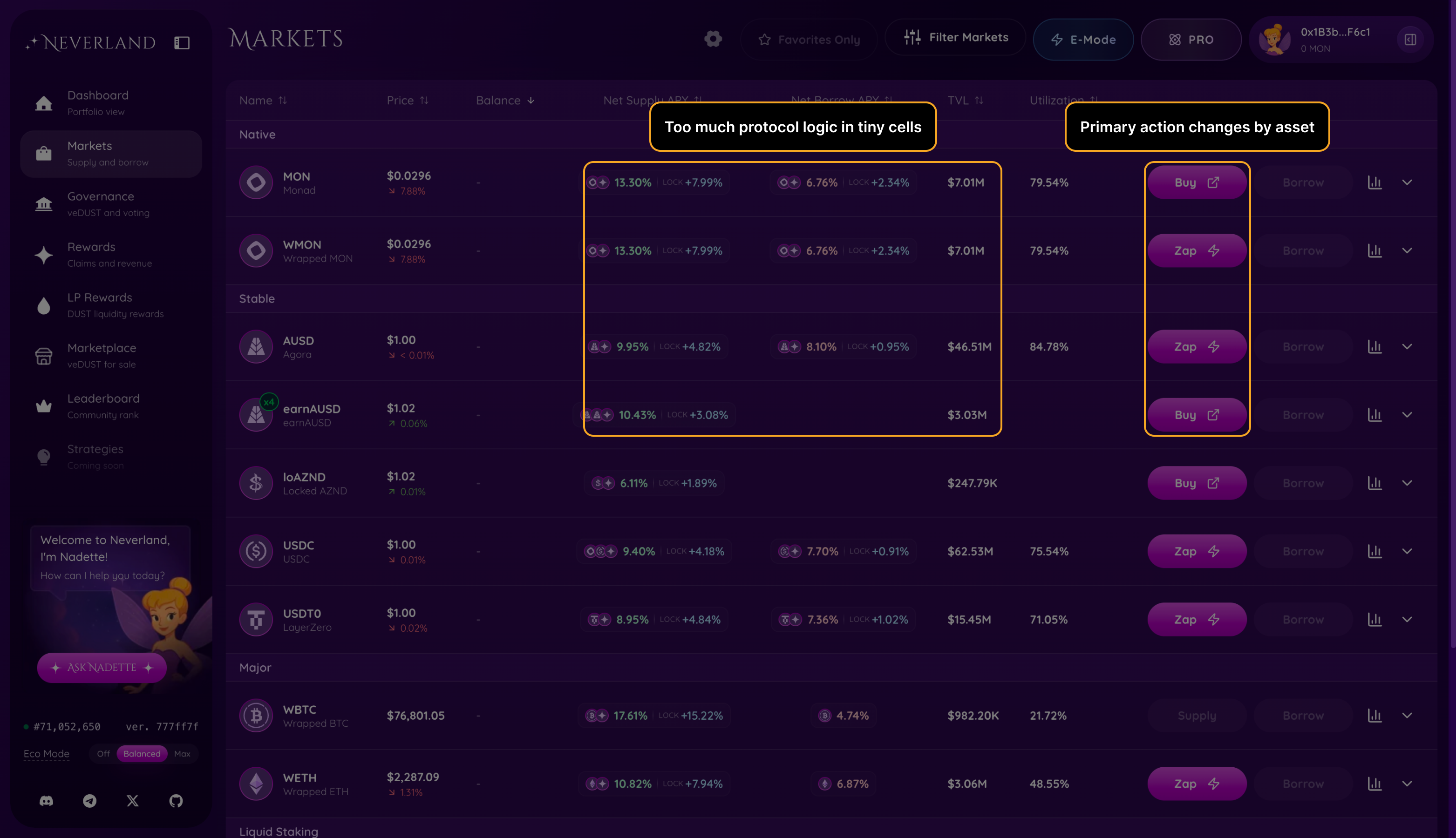

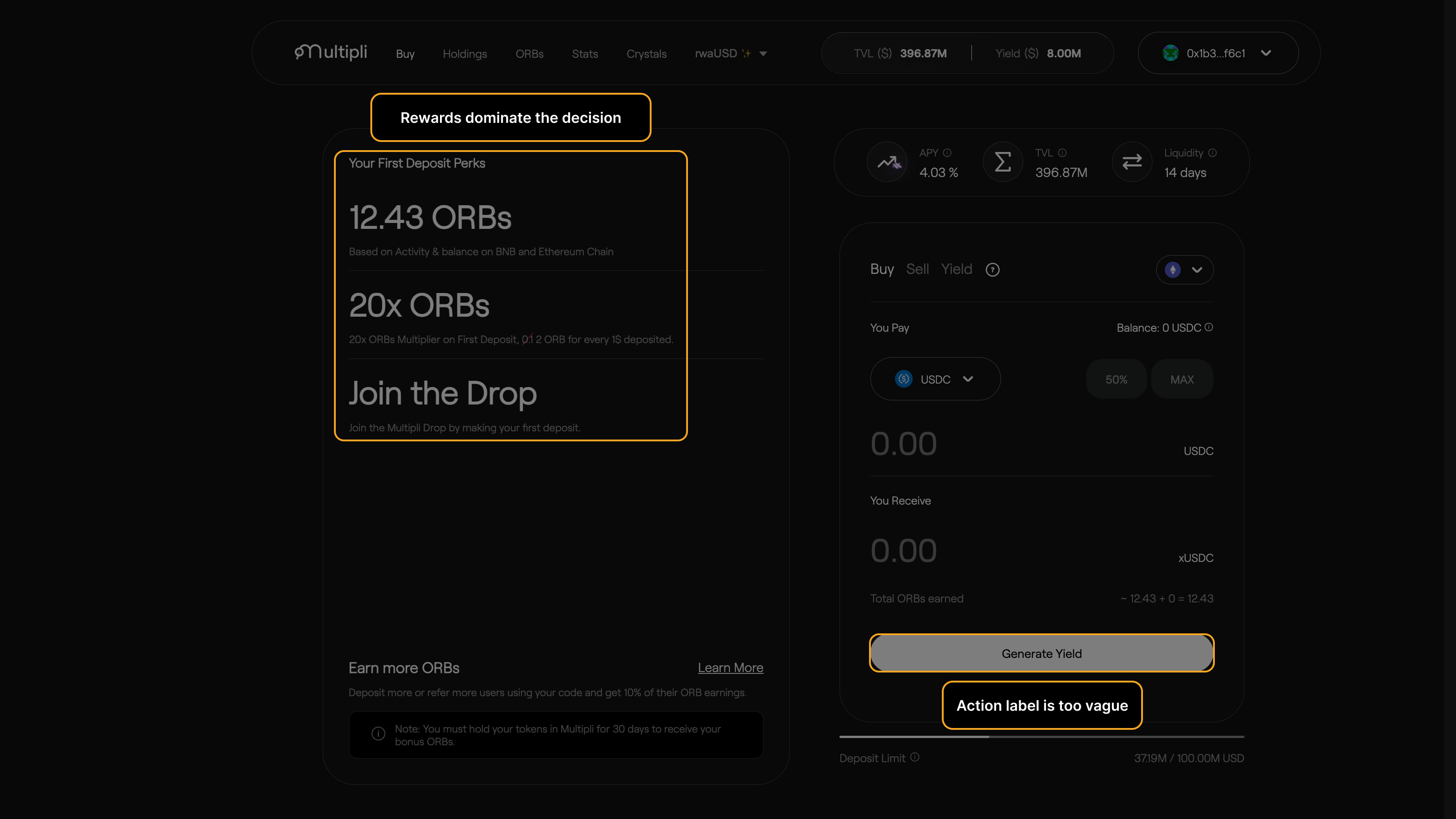

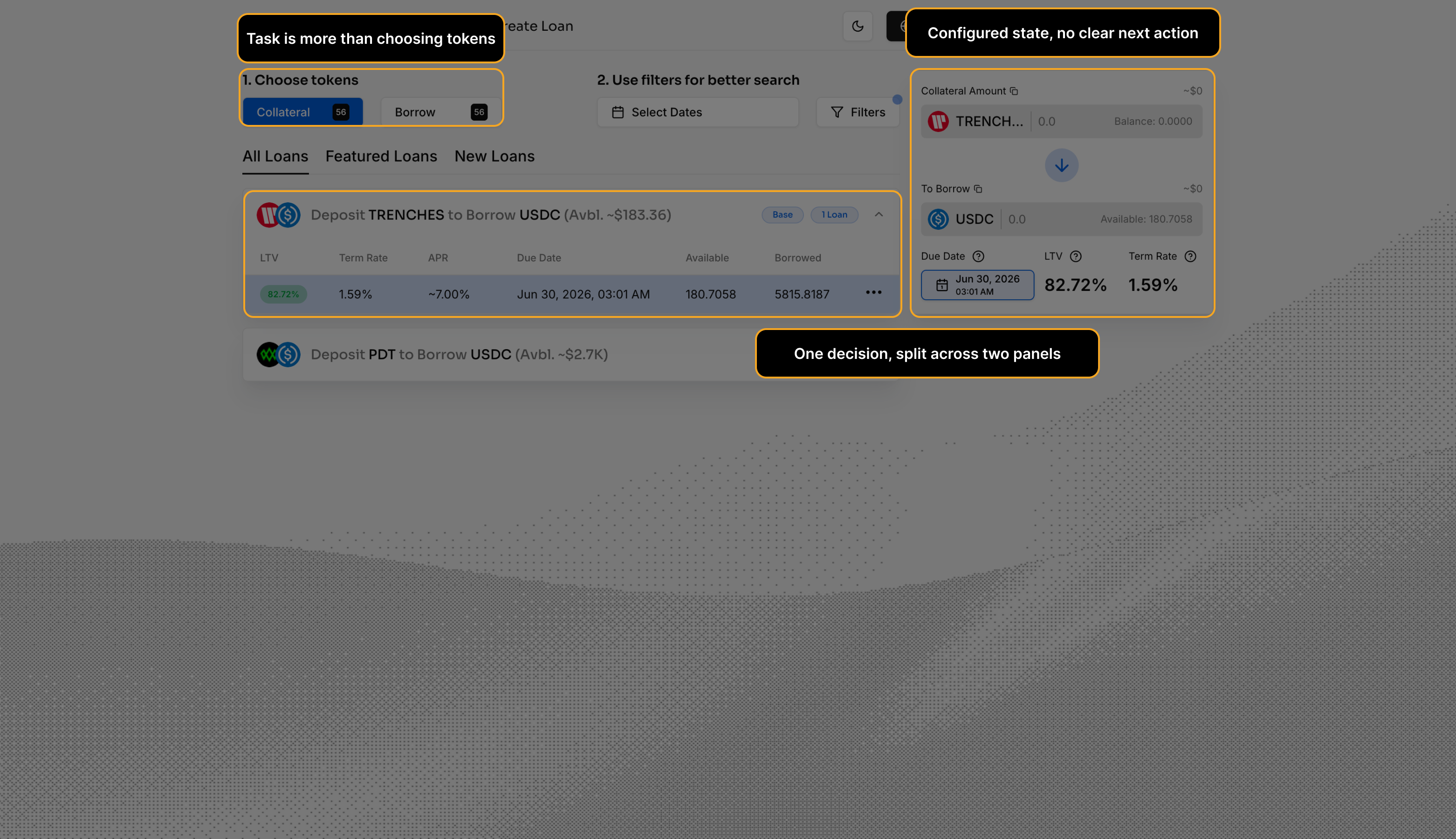

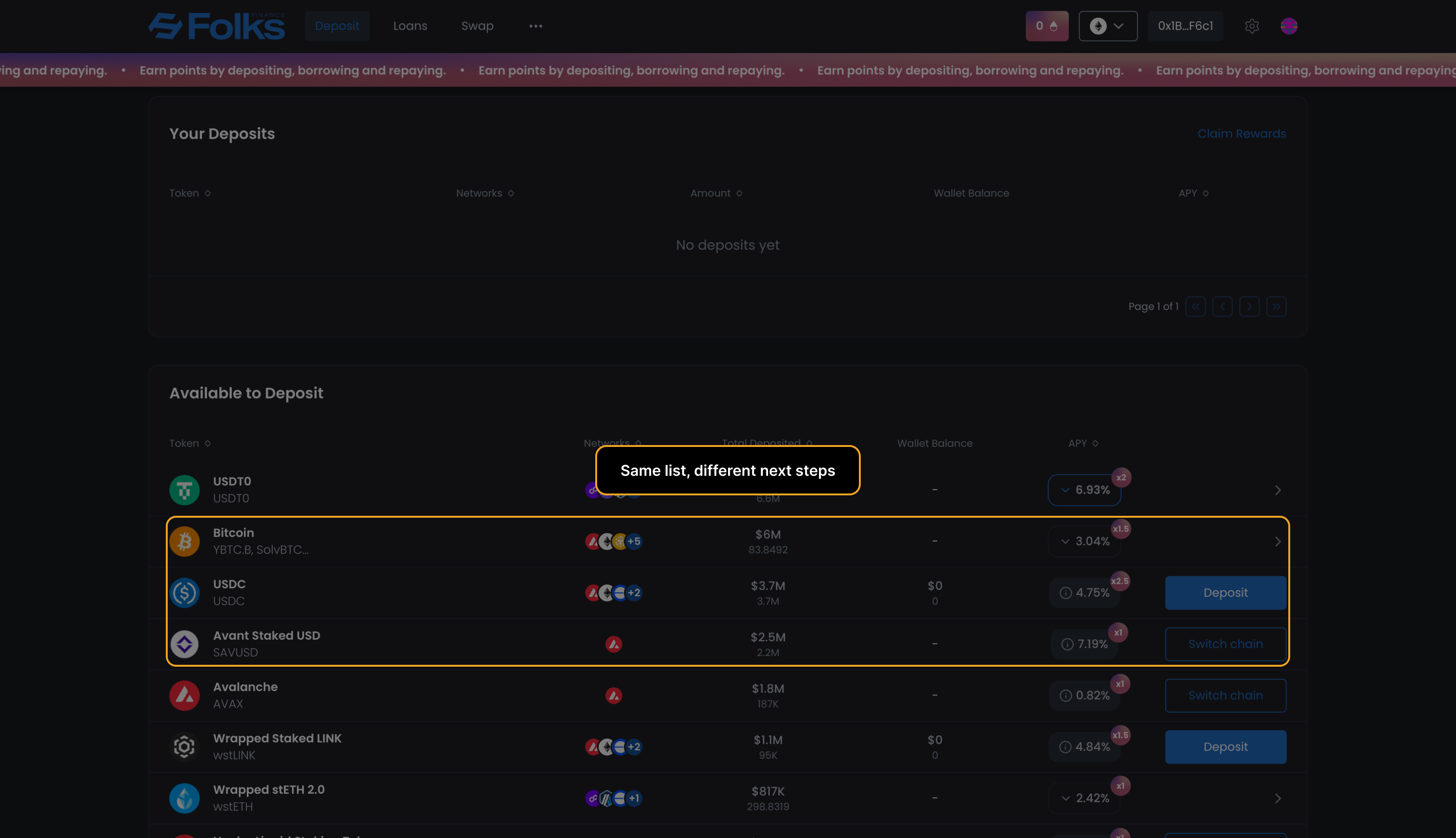

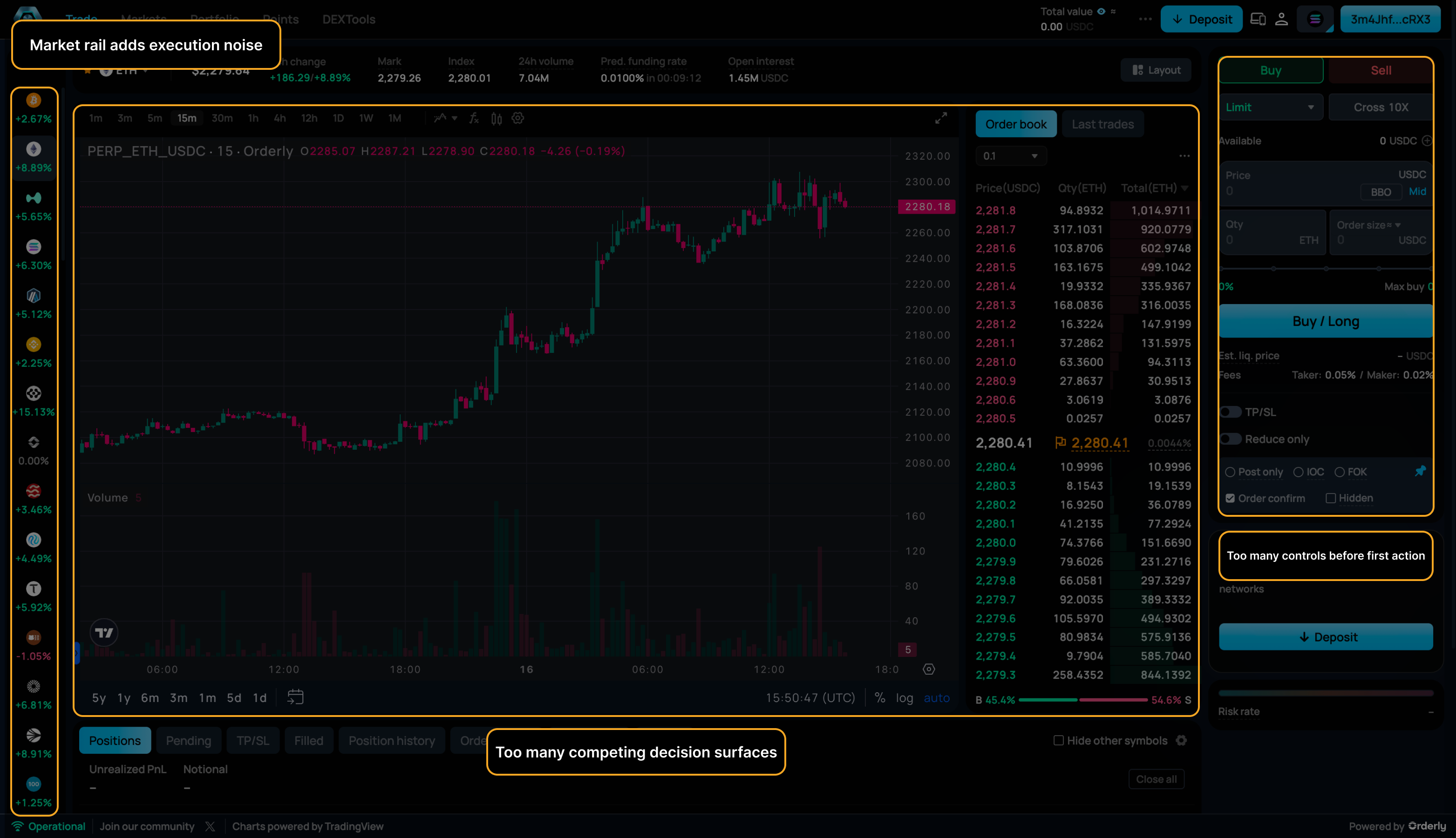

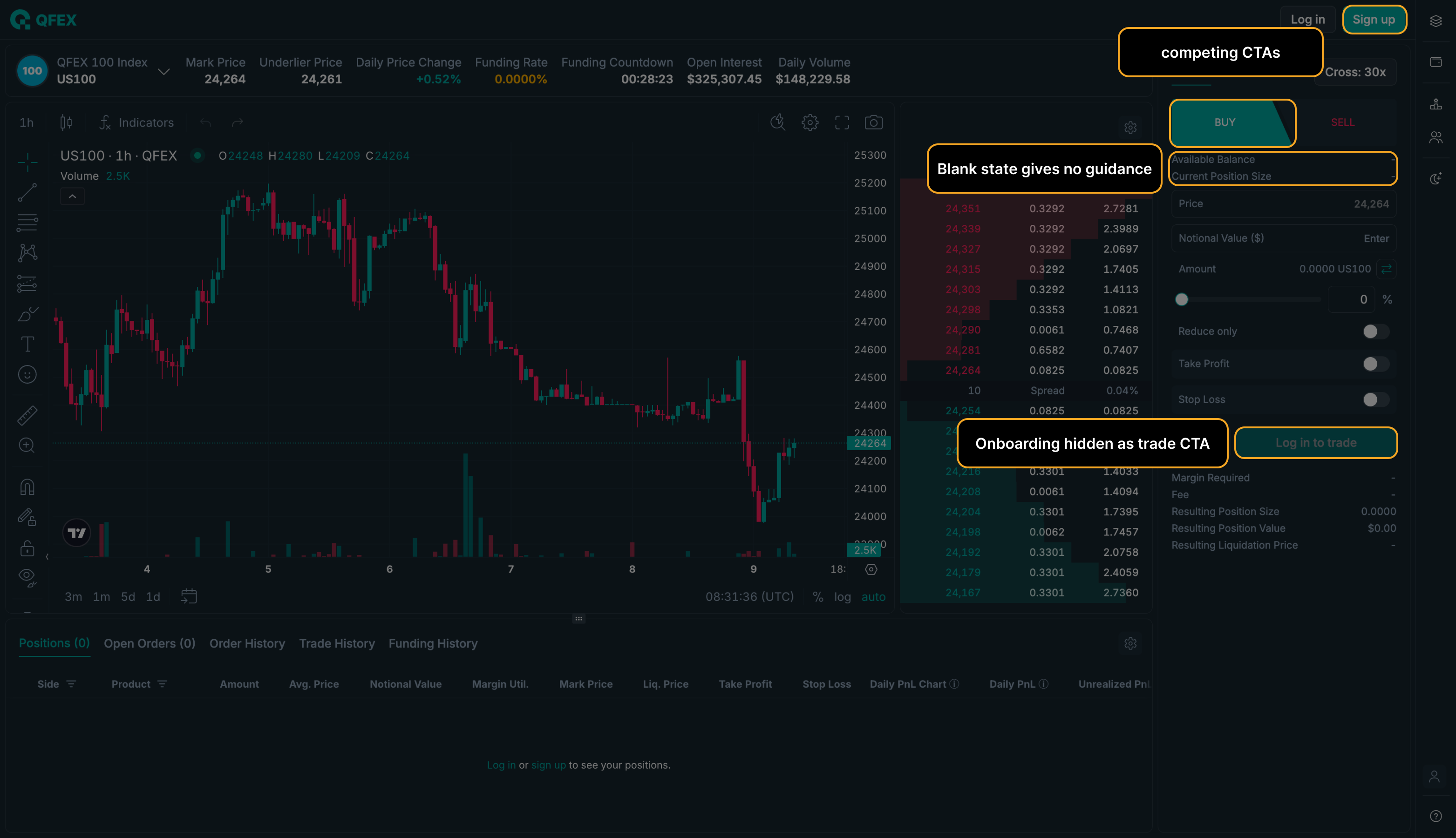

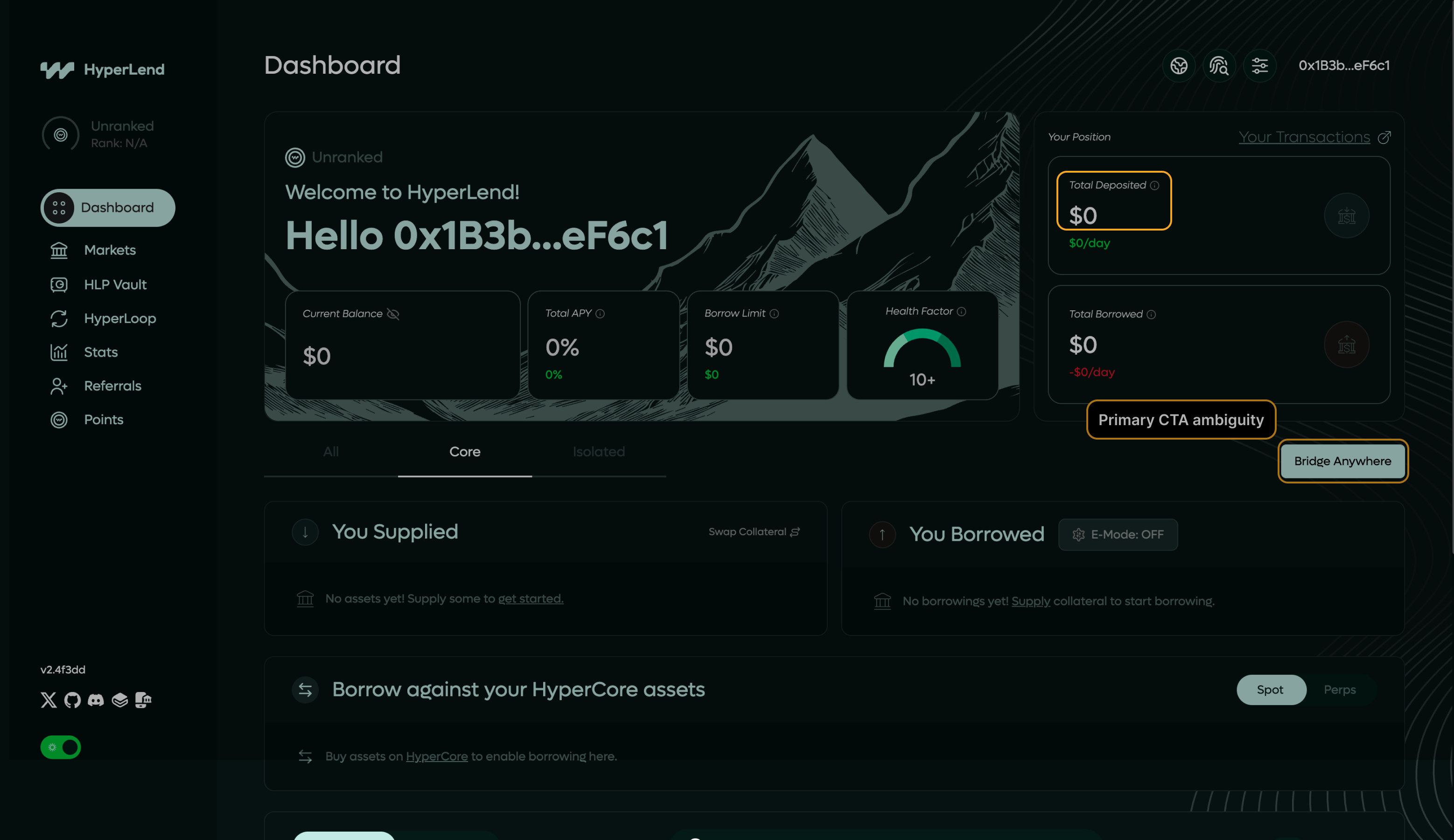

The call-to-action doesn't reflect the next logical step given the current application state.

"Approve" and "Swap" rendered identically when the flow requires sequential completion.

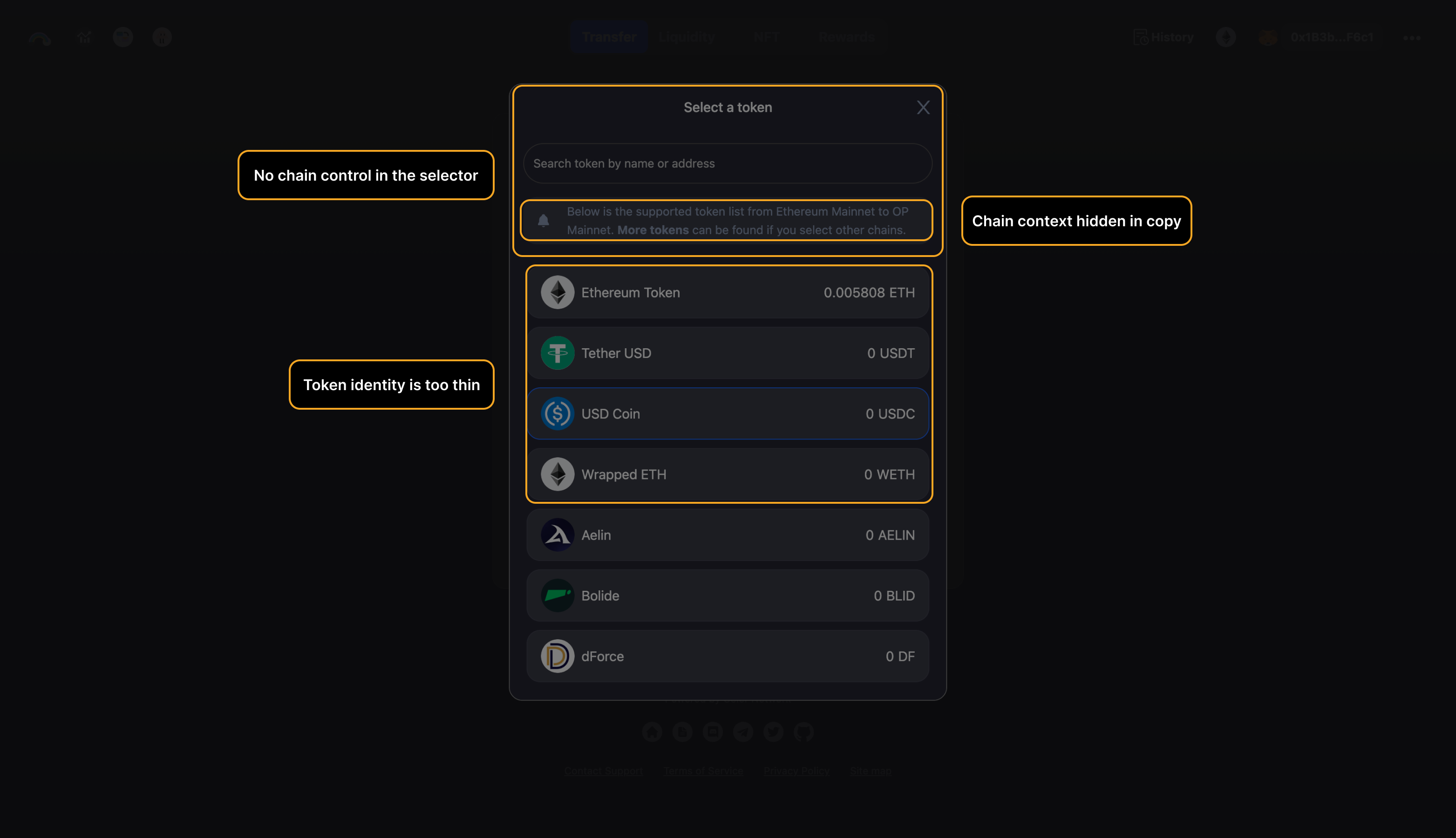

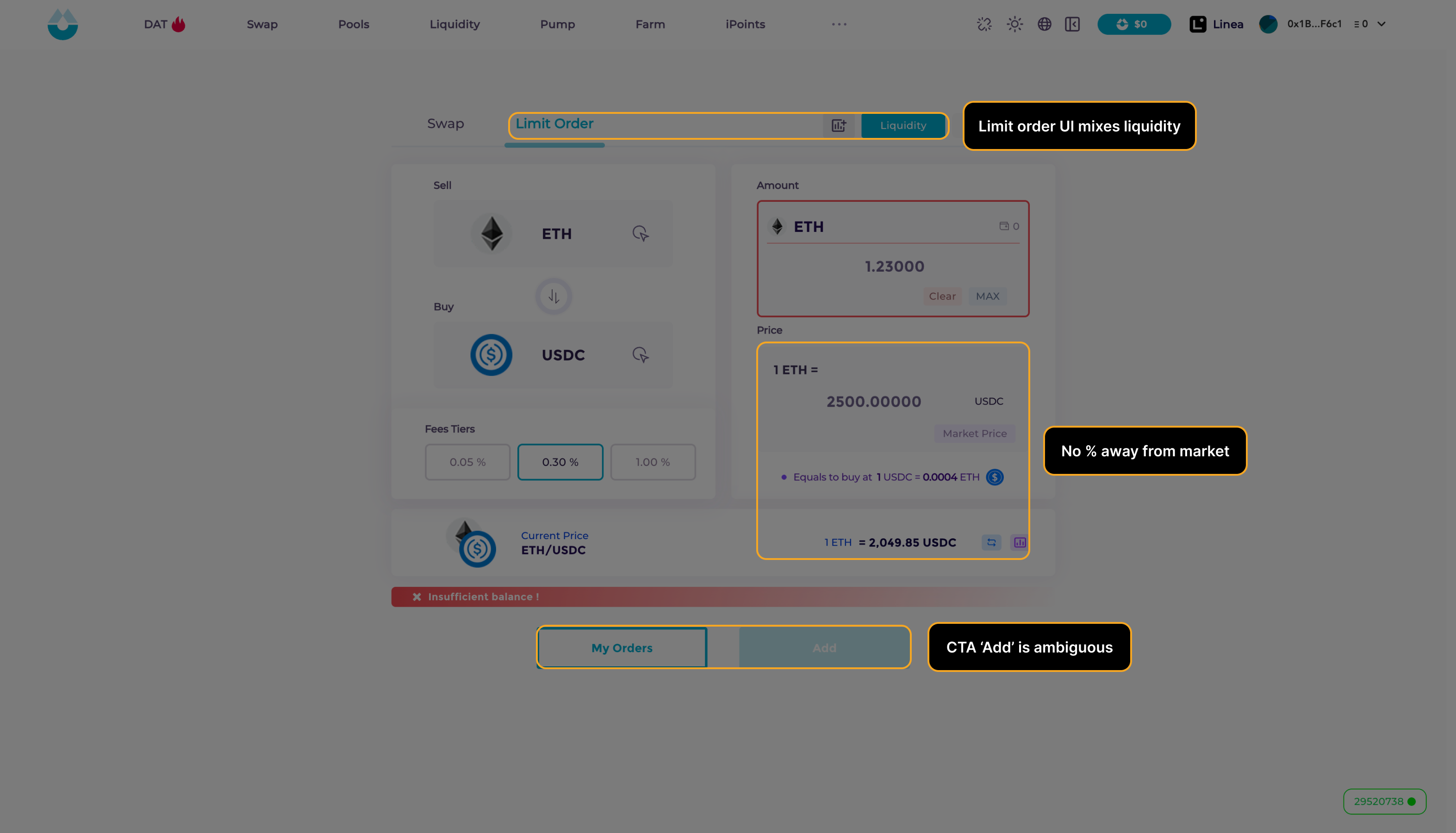

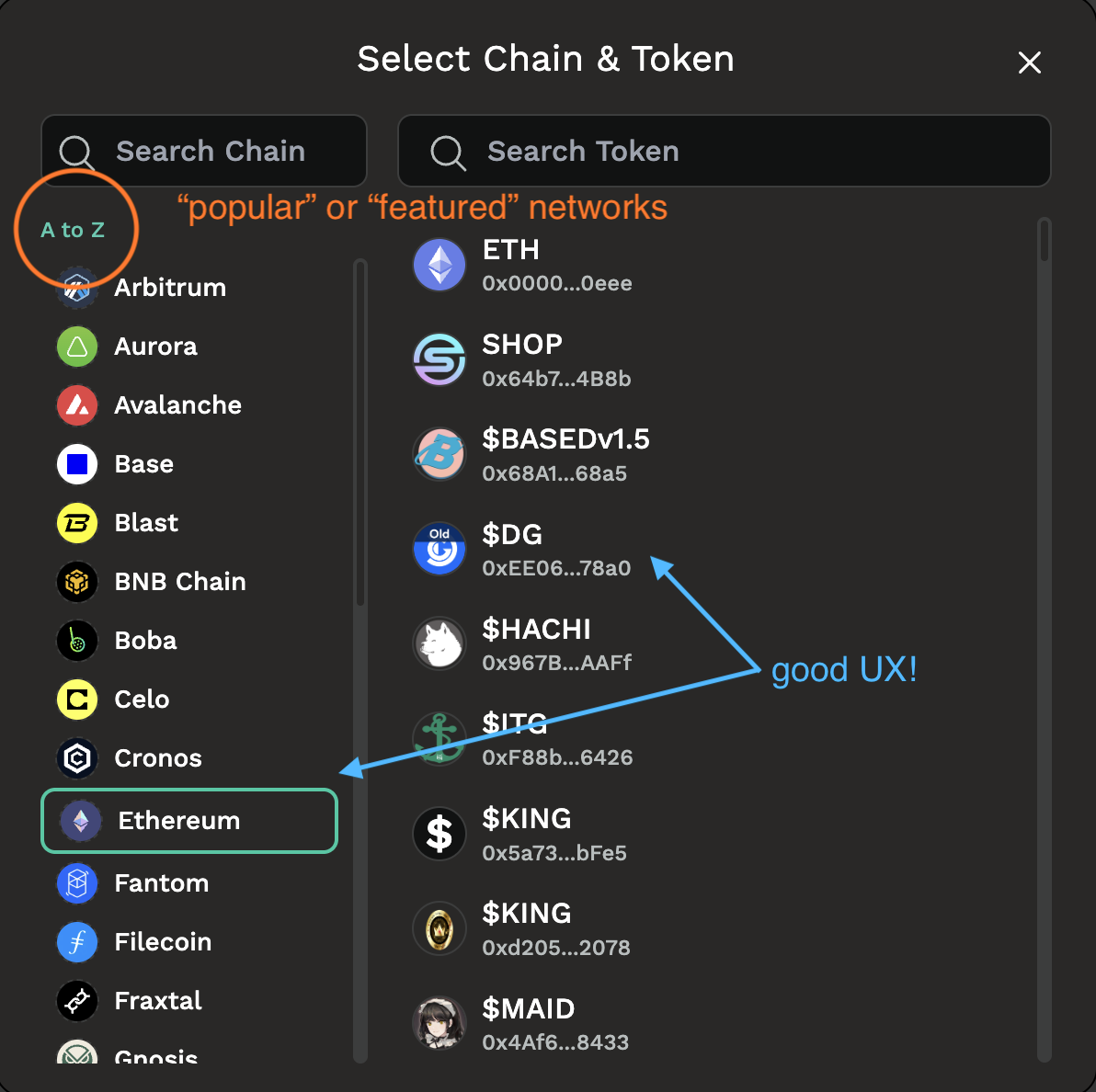

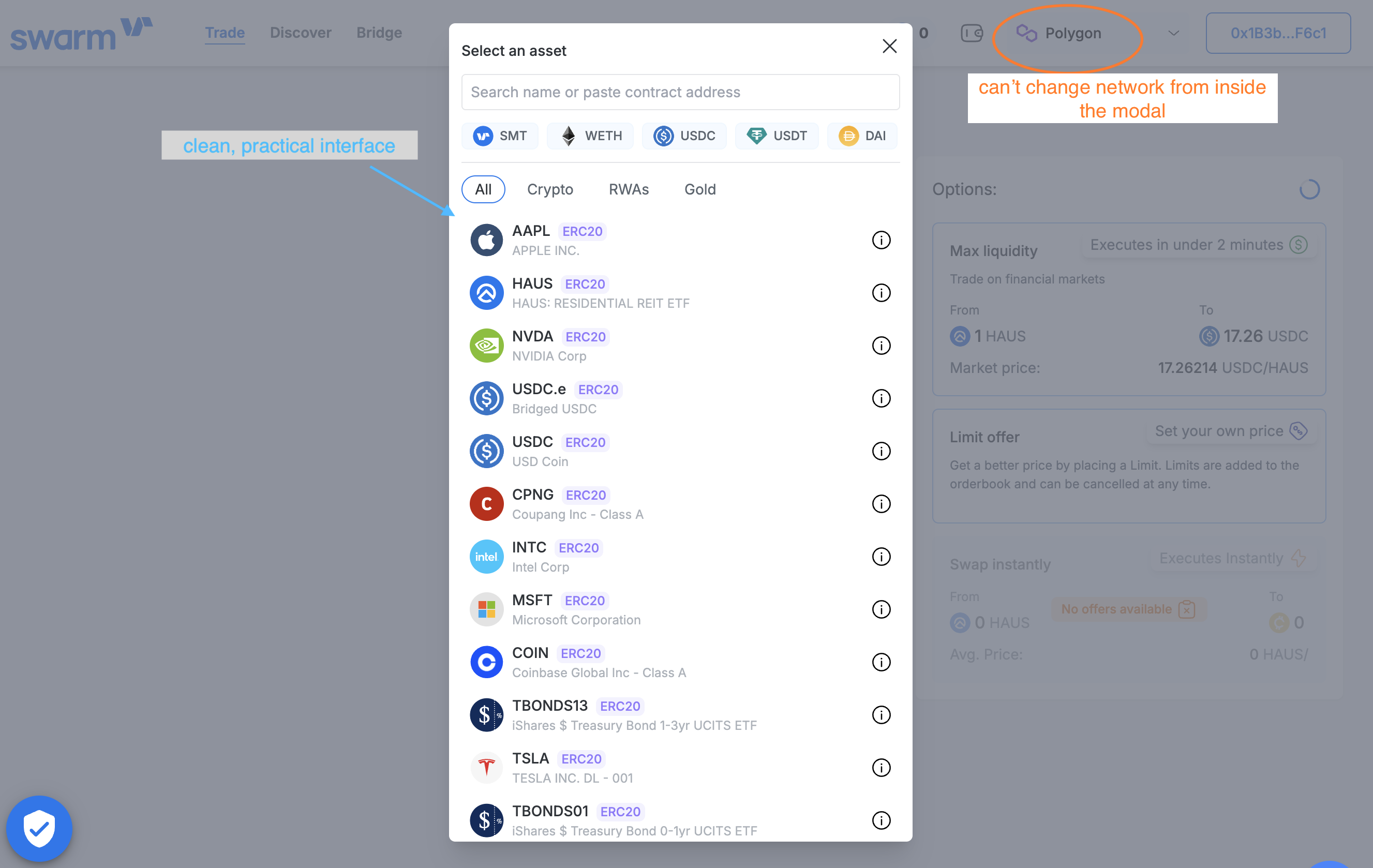

Network or chain selection is not enforced before token selection, creating irreversible mismatches.

Token dropdown available on bridge flows before the destination chain is confirmed.

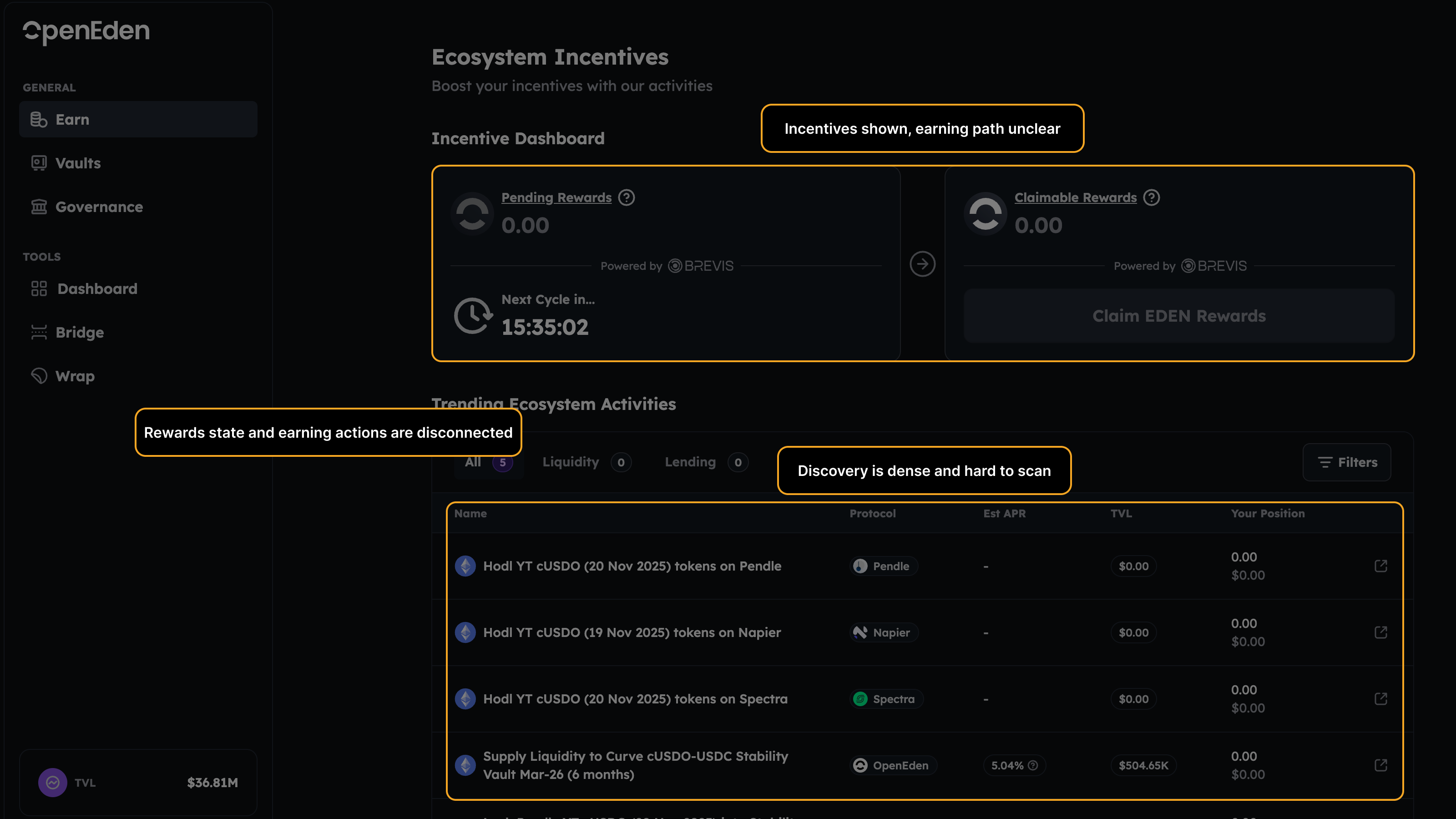

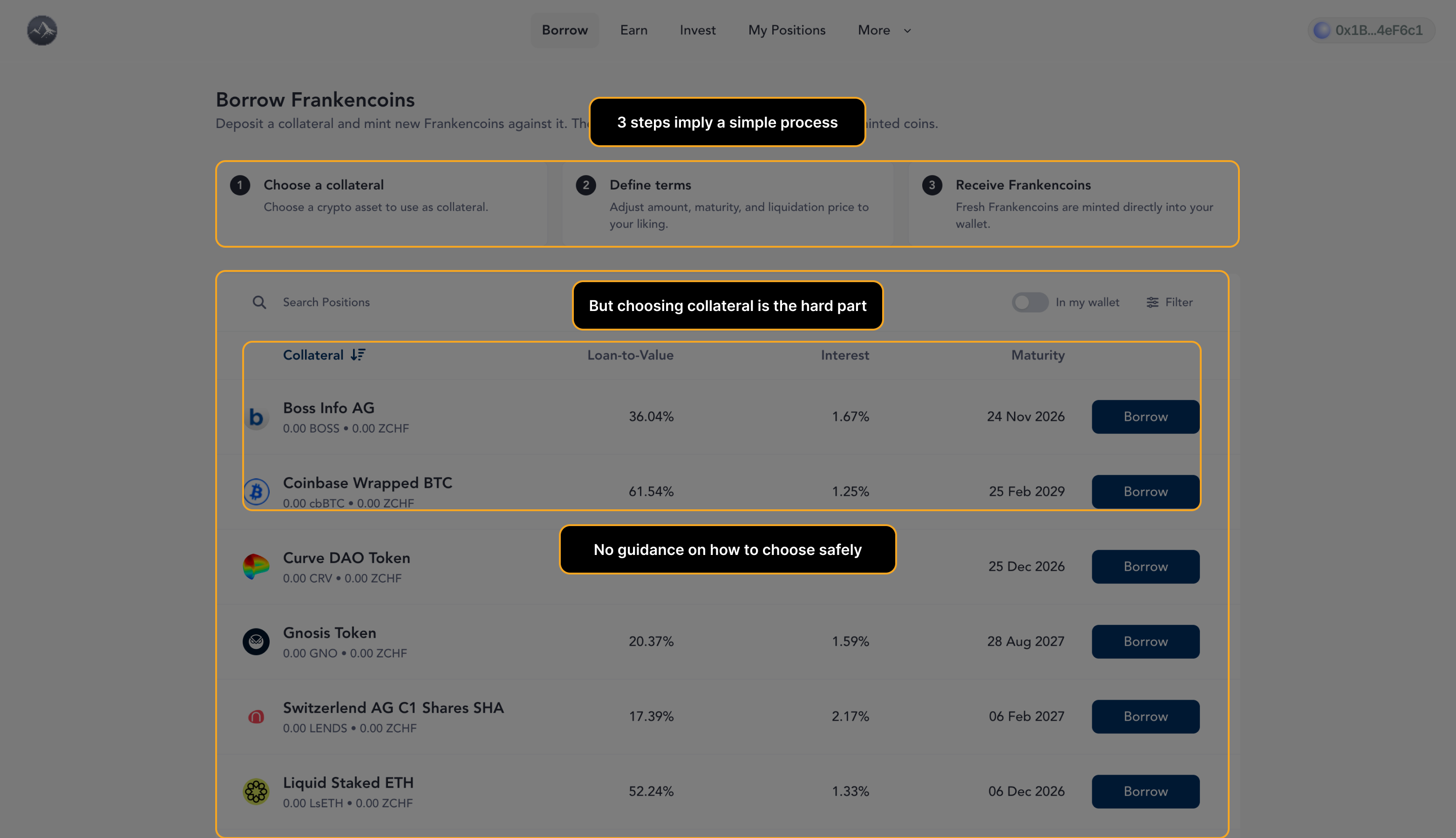

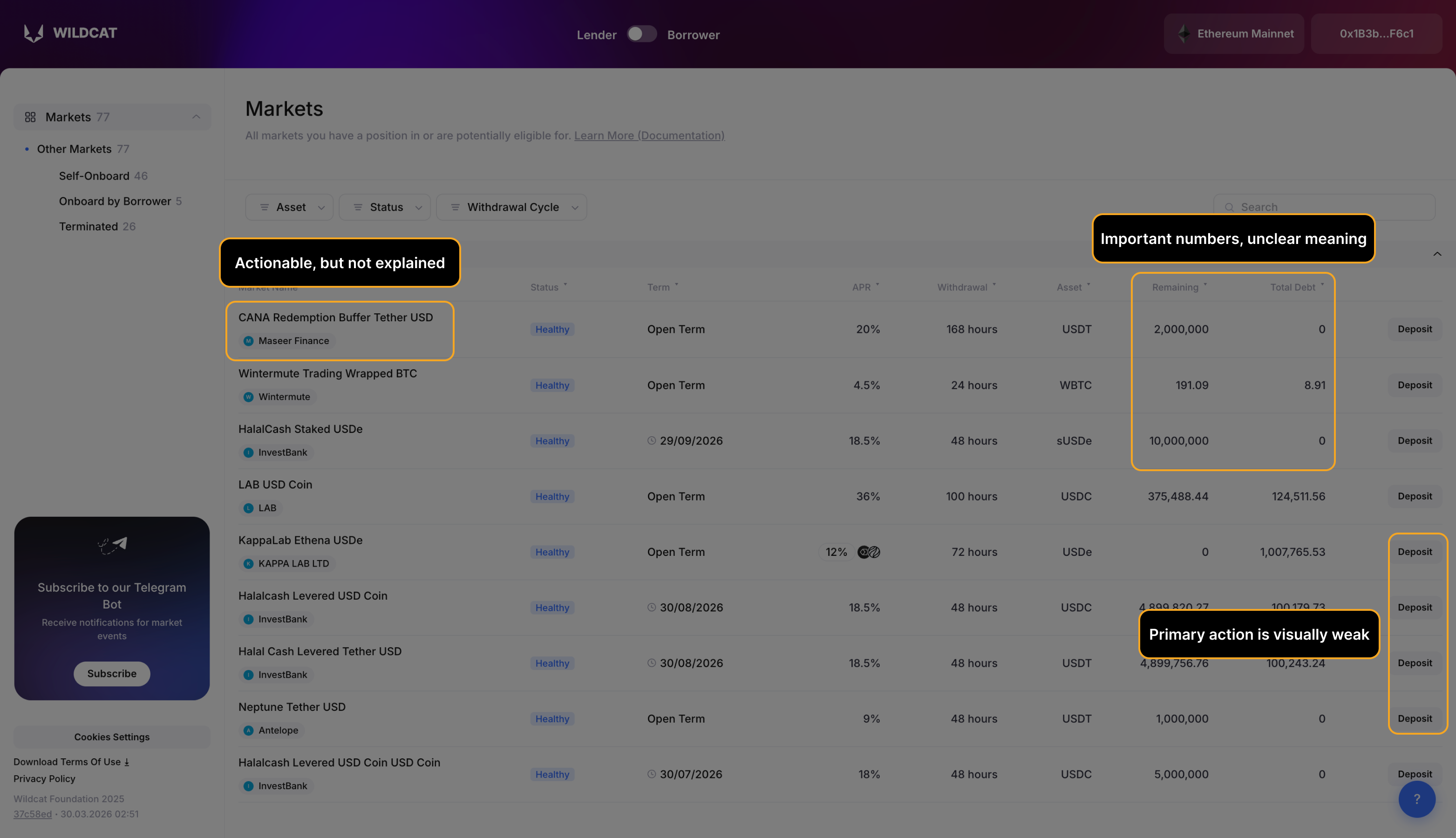

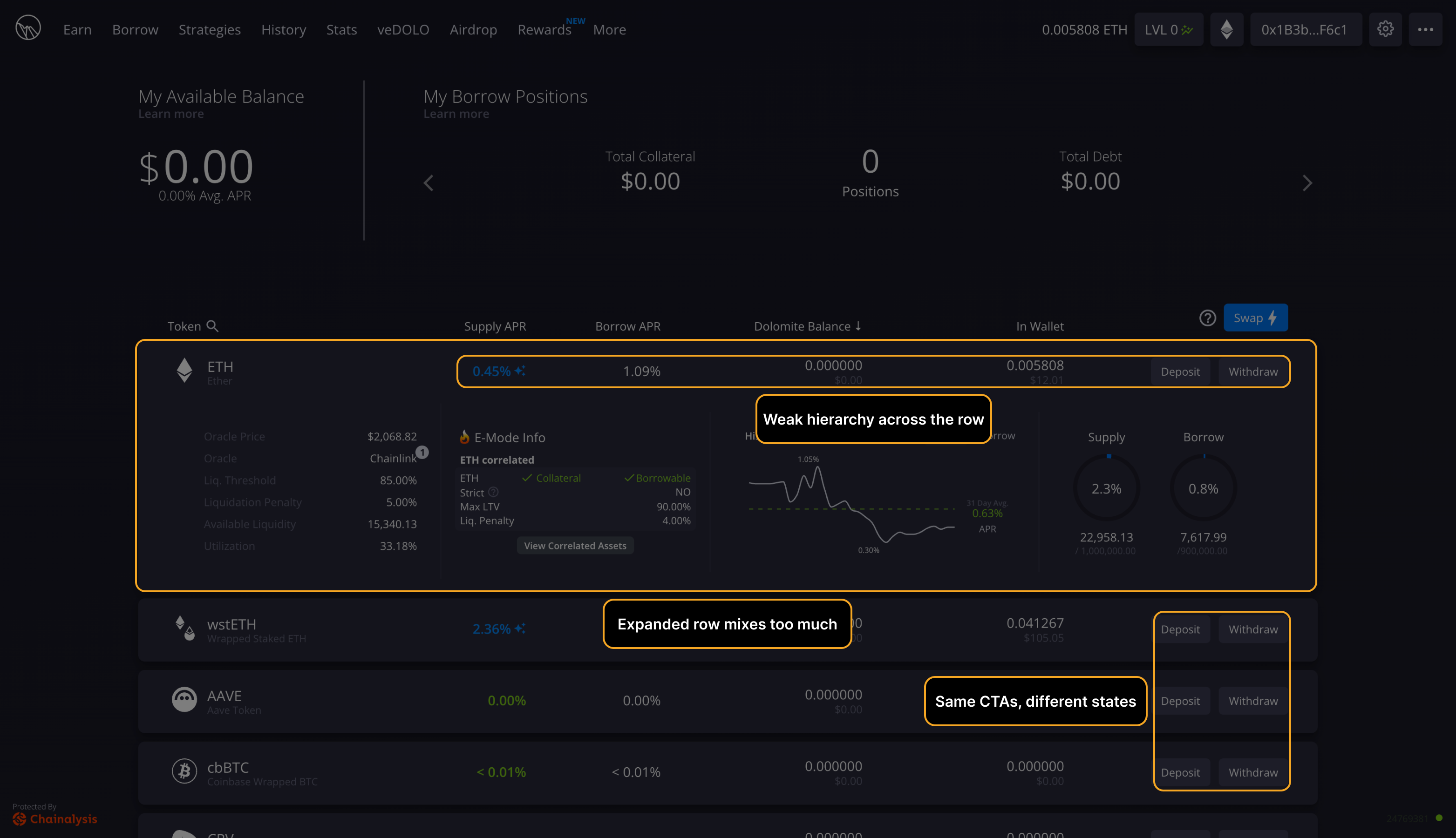

The committing action is embedded within a data table or requires implicit interaction discovery.

Borrow button inside a collateral table row with no visual affordance at scan depth.

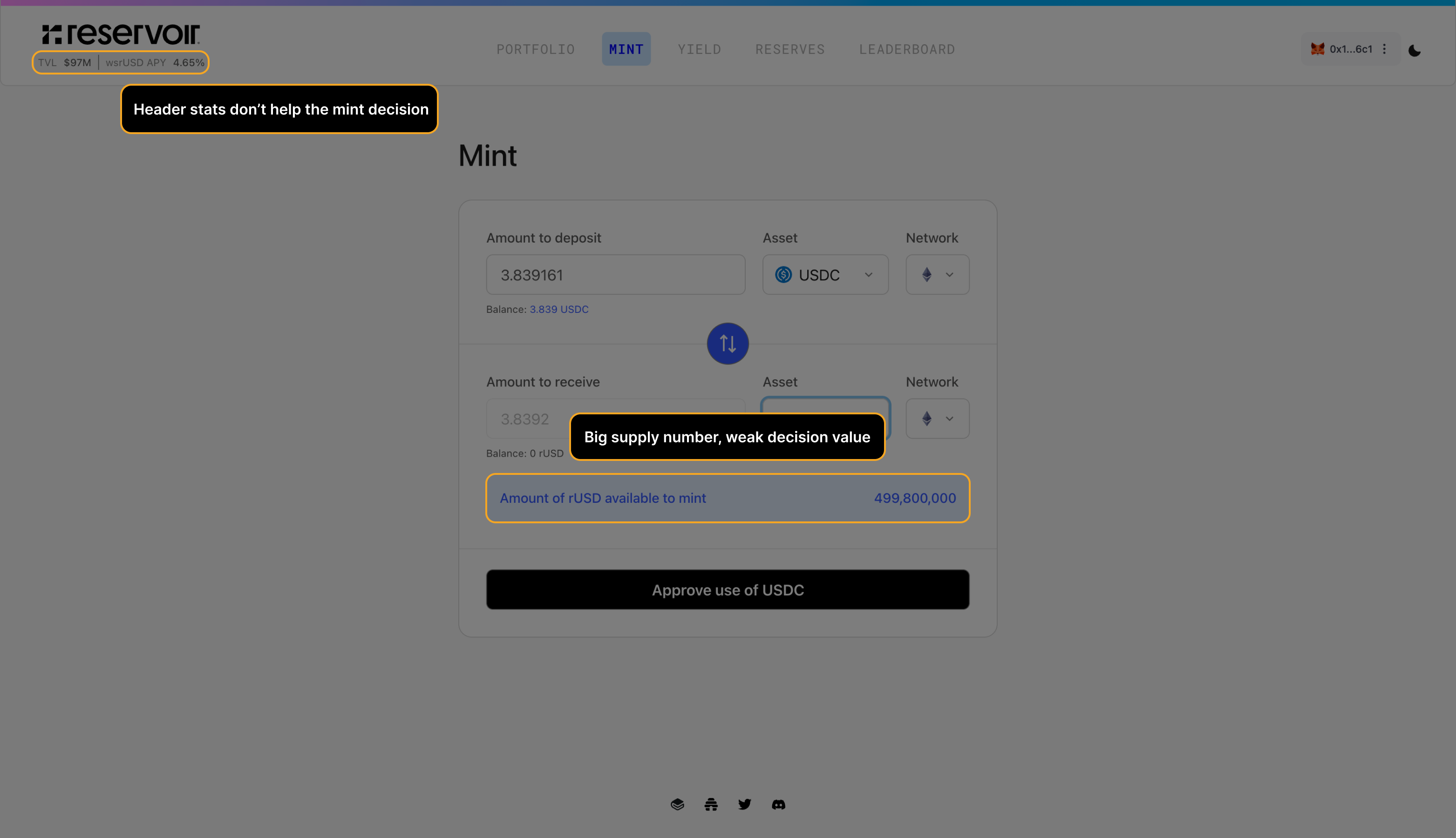

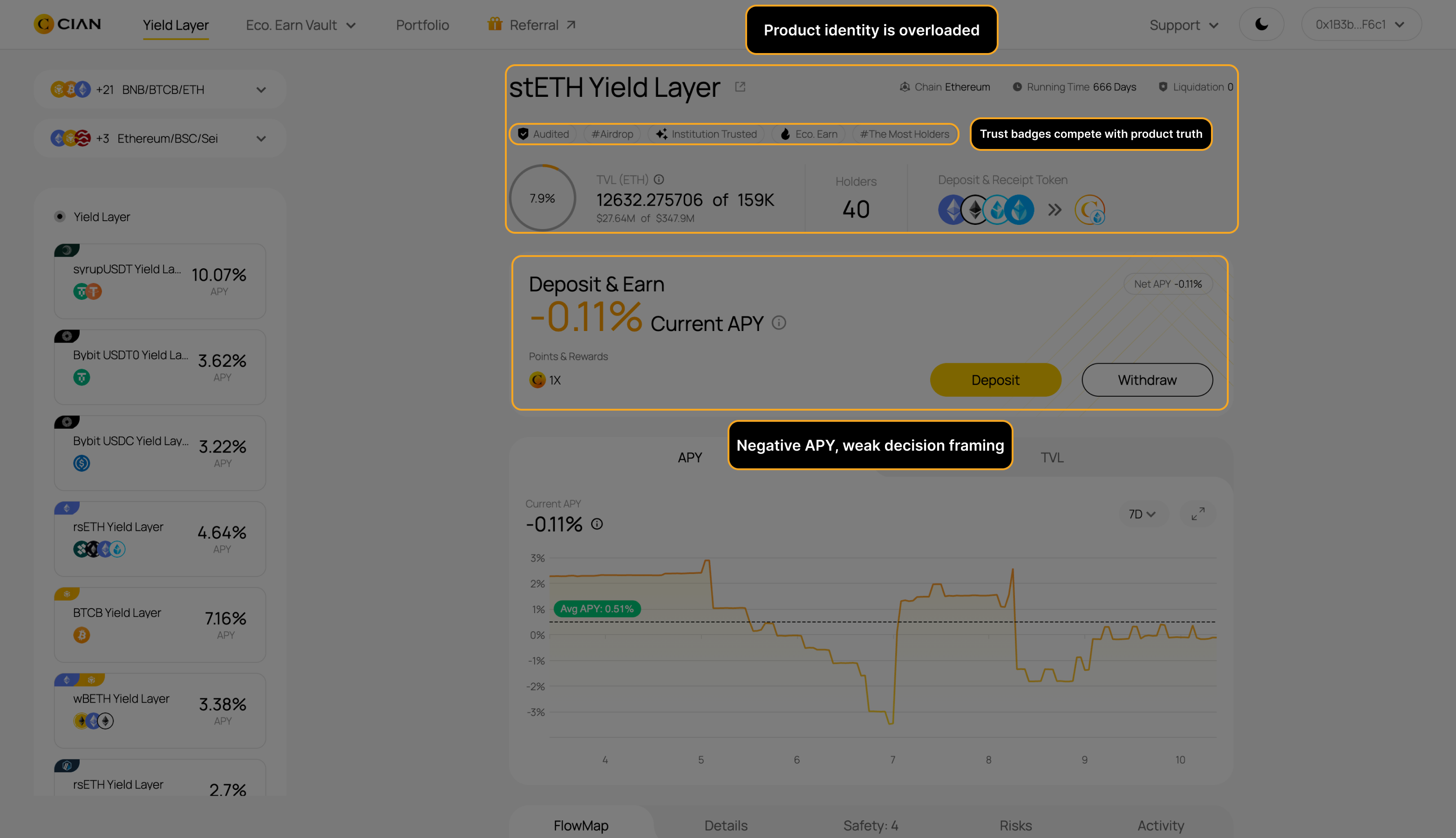

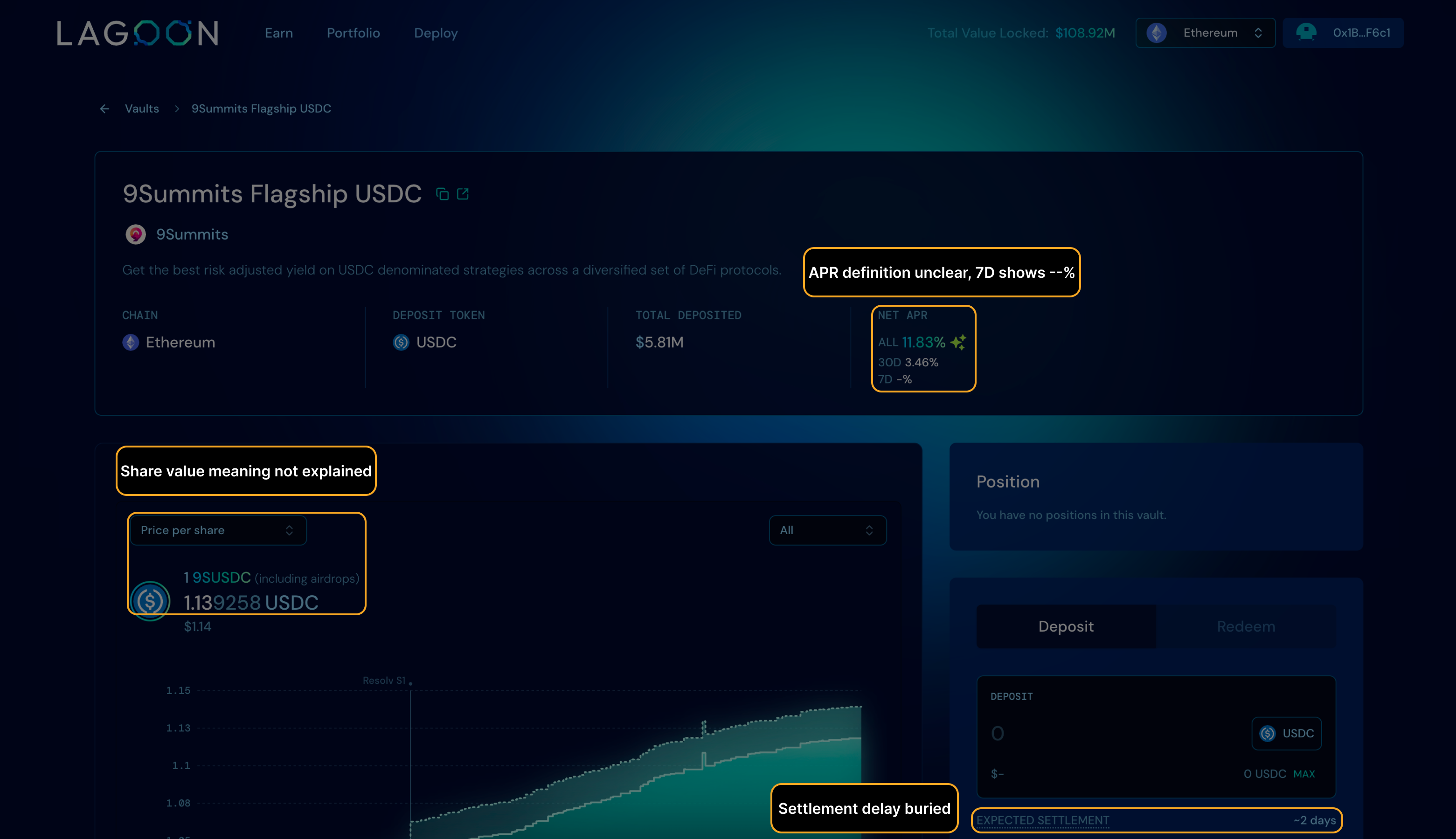

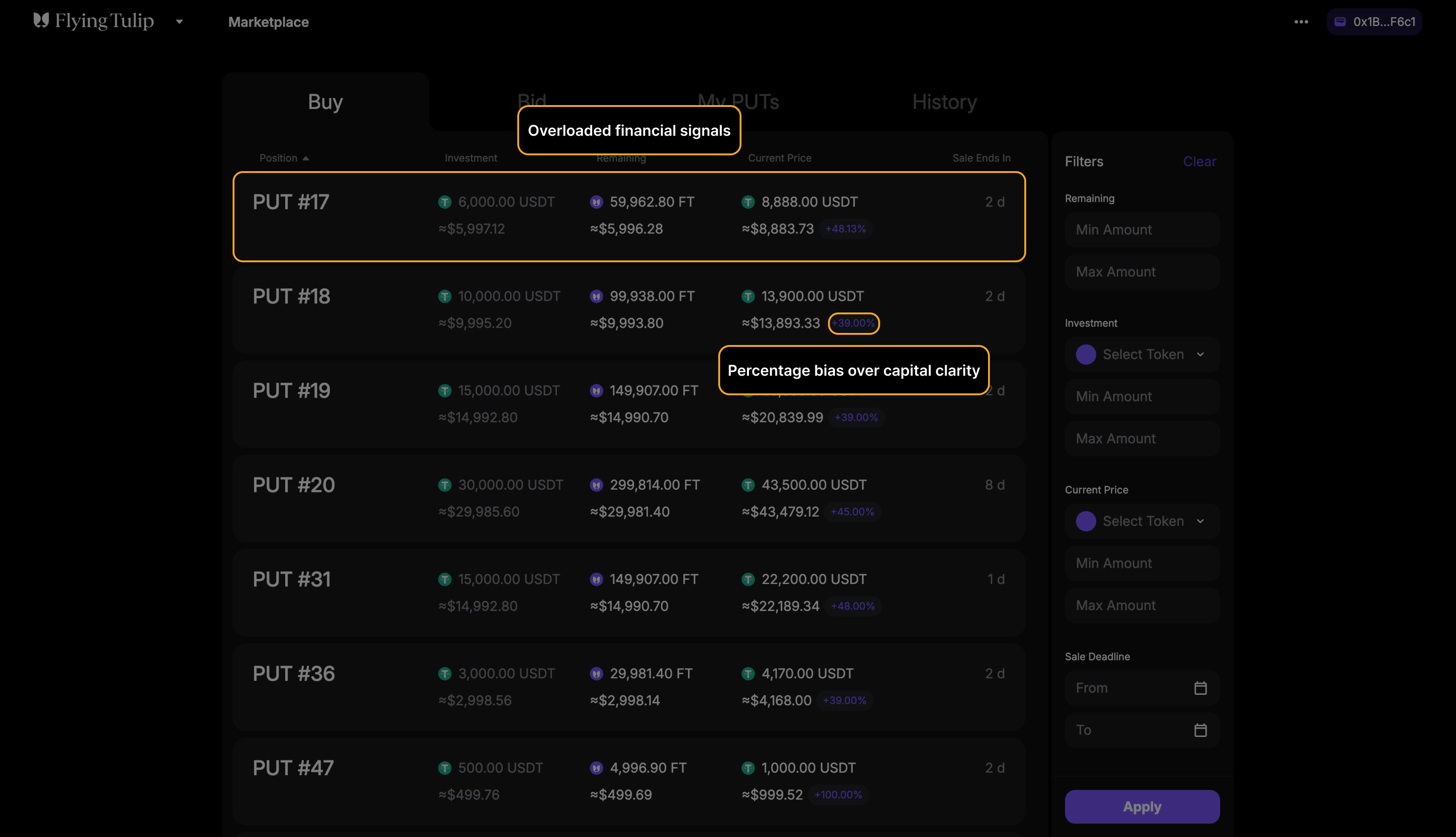

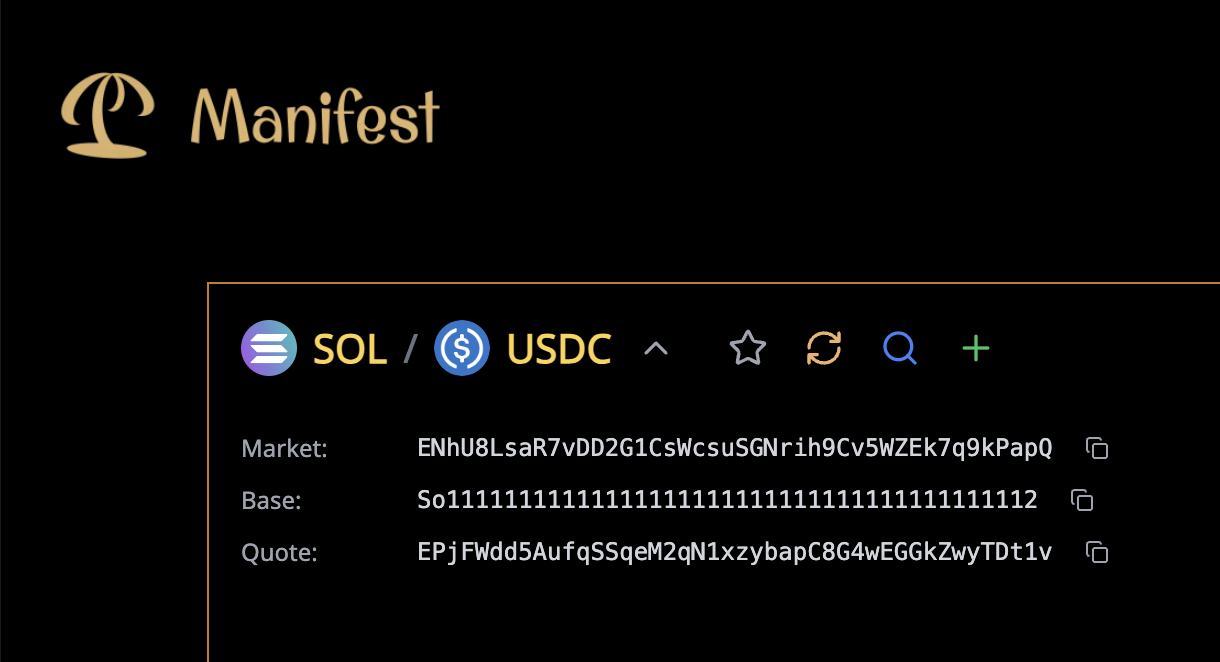

Labels reuse terms across contexts with different meanings, or omit breakdown where precision is required.

"Price impact" and "fee" used interchangeably across swap and liquidity flows.

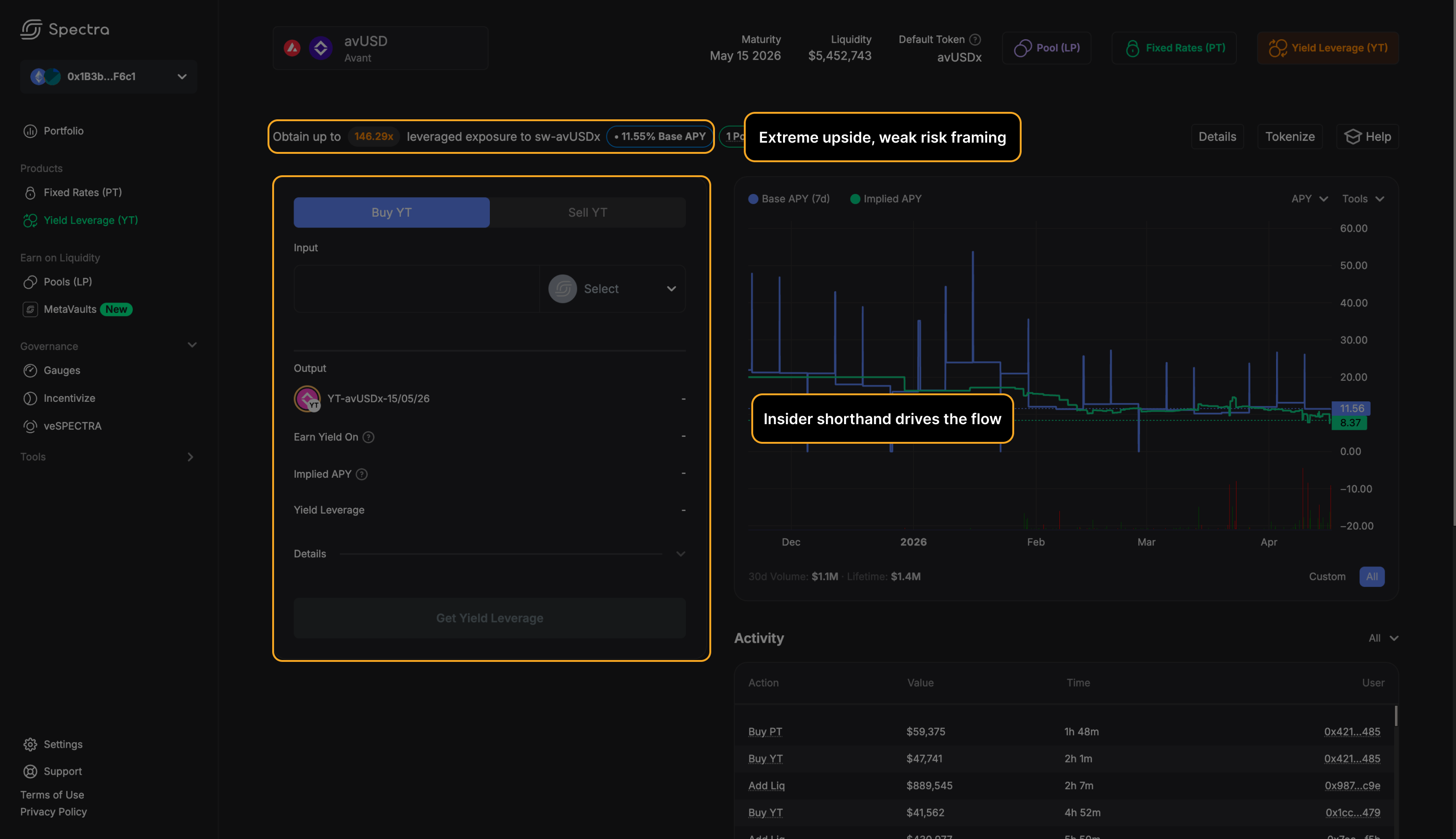

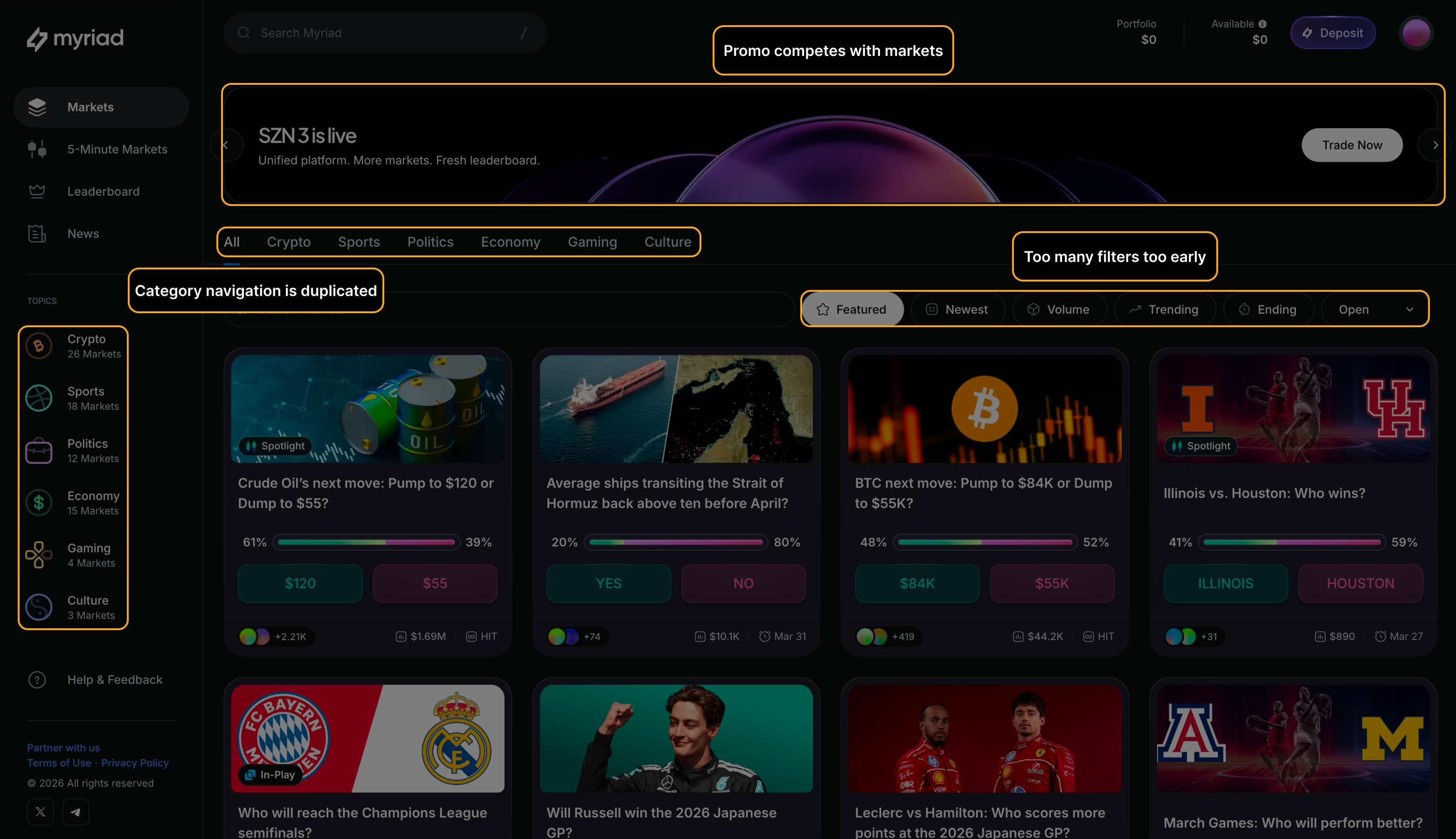

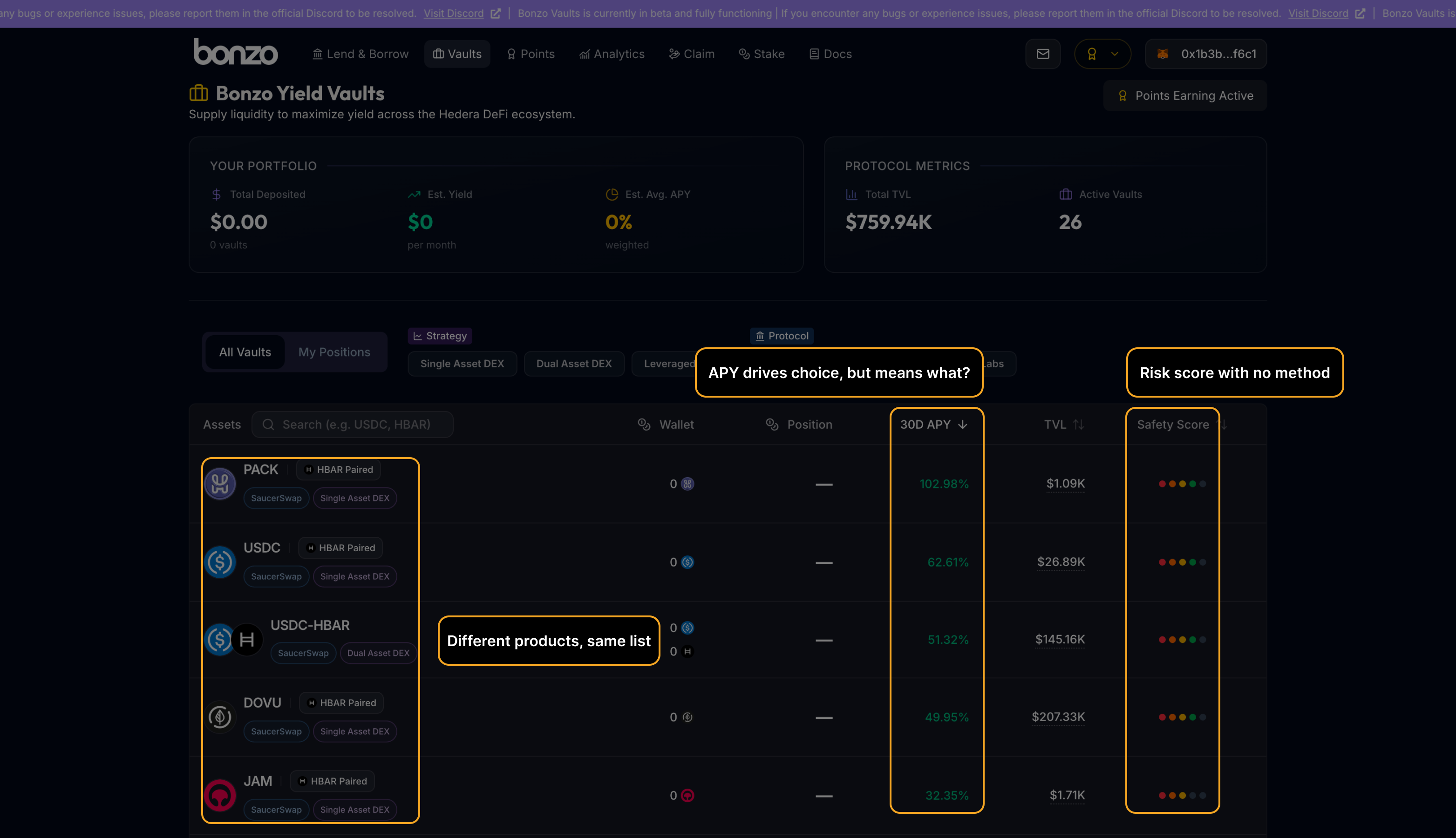

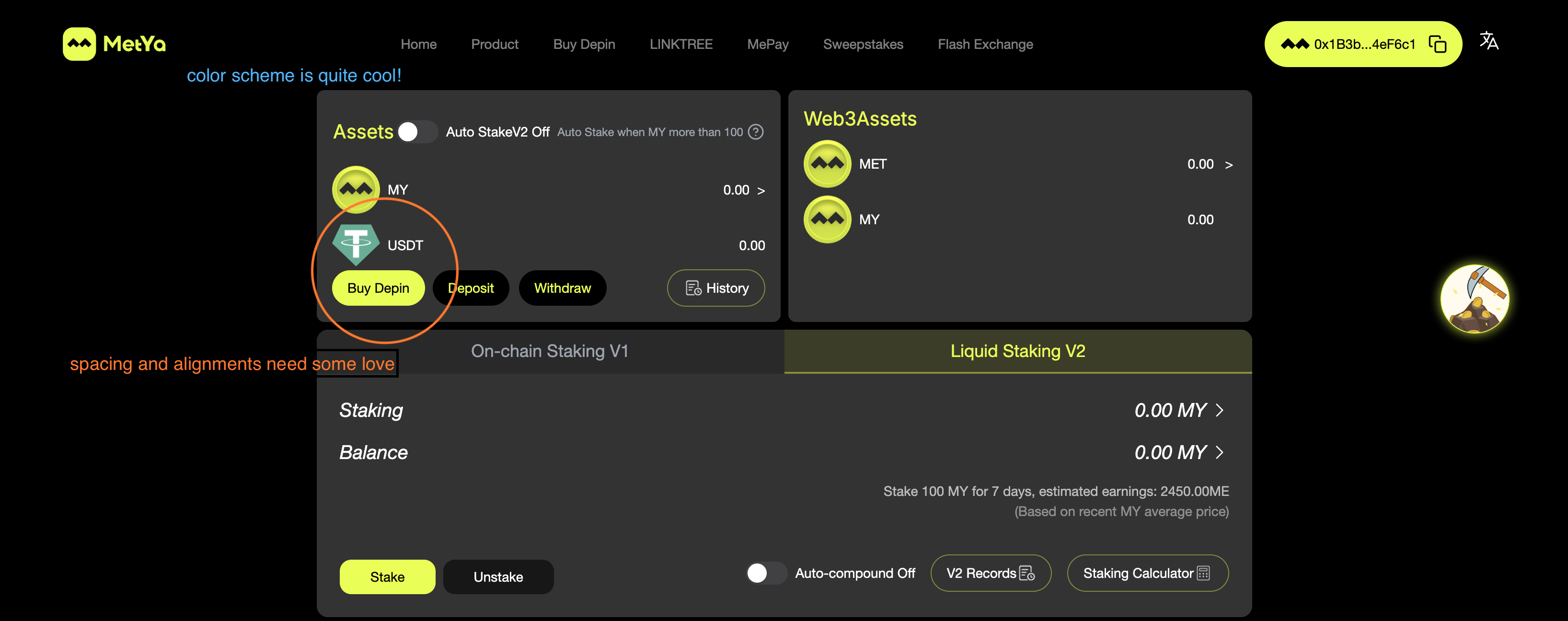

No dominant focal layer exists at the moment of commitment; every element competes at equal weight.

Yield dashboards where APY, TVL, risk rating, and CTA share identical typographic treatment.

15 years in frontend engineering and product. I started in fintech building trading systems and payment flows, then optimised conversion points across SaaS products, and I'm now focused on DeFi, where UX friction doesn't just frustrate users; it loses them funds.

I've published 95 public teardowns of real protocols, covering swap, bridge, borrow, and perps flows. No AI-generated templates or generic checklists: just pattern recognition built from shipping high-stakes interfaces.

£3,000

Fixed. No ranges. 1 protocol, up to 3 flows, 1 week.

Best for: teams with measurable completion issues on core transaction flows.

Not for: early prototypes without traffic.

Request the audit